Introduction

This lesson introduces concurrency and provides motivational examples to further our understanding of concurrent systems.

An understanding of concurrency and its implementation models, using either threads or coroutines, shows maturity and technical depth in a candidate, and it can be an important differentiator in landing a higher-level software engineering job. More importantly, a solid grasp of concurrency lets us write performant and efficient programs. In fact, you'll inevitably run into the guts of the topic when working on any sizeable project.

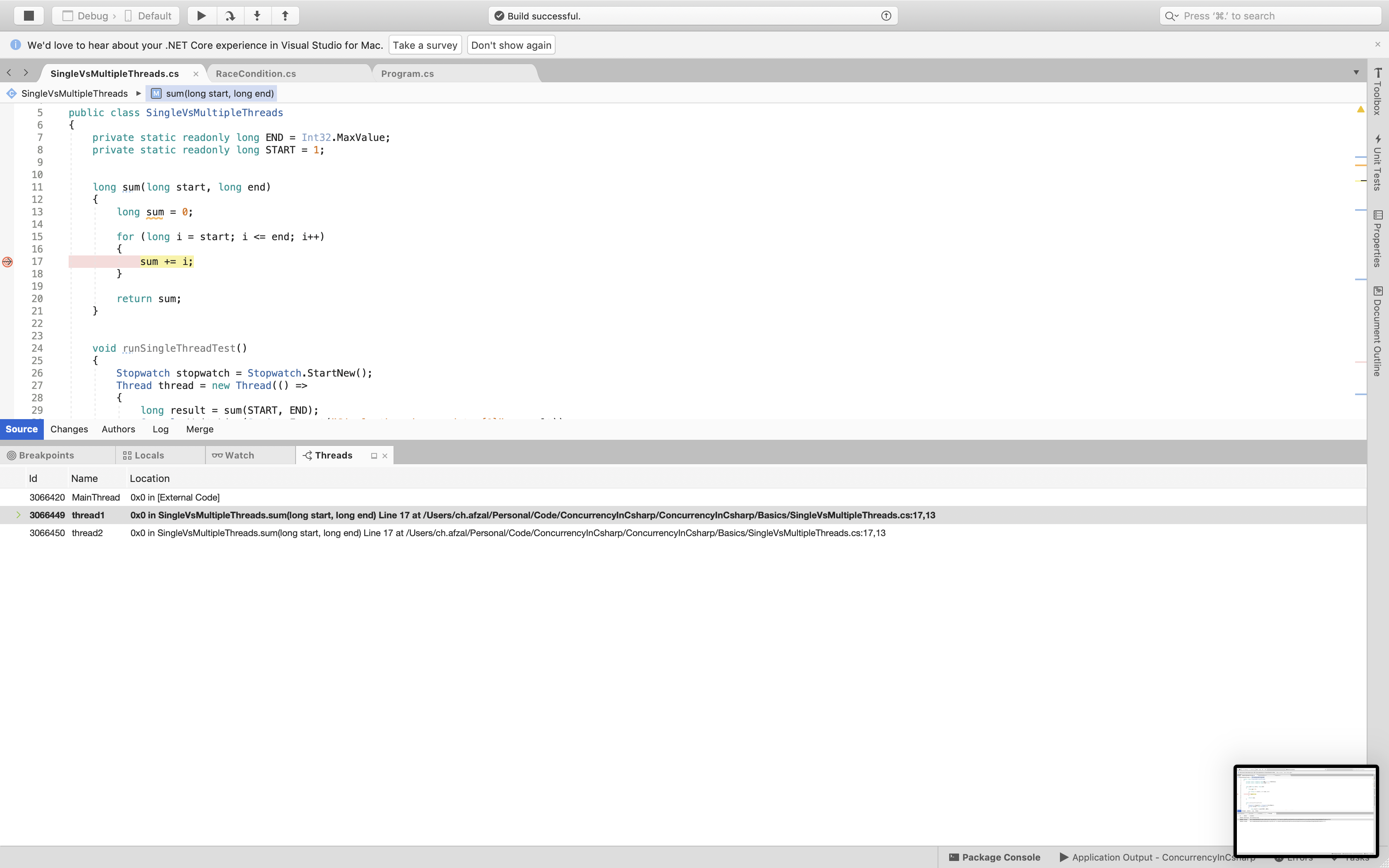

We'll start with threads as they are the most well-known and familiar concepts in the context of concurrency. Threads, like most computer science concepts, aren't physical objects. The closest tangible manifestation of a thread can be seen in a debugger. The screen-shot below shows the threads of an example program suspended in the debugger.

The simplest example of a concurrent system is a single-processor machine running your favorite IDE. Say you edit one of your code files and click save. Clicking the button initiates a workflow that causes bytes to be written out to the underlying physical disk. However, IO is an expensive operation, and the CPU will be idle while bytes are being written out to the disk.

While IO takes place, the idle CPU could work on something useful. This is where threads come in: the IO thread is switched out and the UI thread gets scheduled on the CPU, so that if you click elsewhere on the screen, your IDE is still responsive and does not appear hung or frozen.
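The save scenario above can be sketched in a few lines. This is only an illustration under stated assumptions: `SaveFile` and `HandleUiEventsWhileSaving` are hypothetical names, `Thread.Sleep` stands in for the slow disk write, and incrementing a counter stands in for the UI thread reacting to clicks.

```csharp
using System;
using System.Threading;

public class ResponsiveSaveSketch
{
    // Hypothetical stand-in for a slow disk write; Thread.Sleep simulates the blocking IO.
    static void SaveFile(int simulatedIoMillis)
    {
        Thread.Sleep(simulatedIoMillis);
    }

    // Starts the save on a separate thread and counts how many "UI events"
    // the calling thread handles before the save finishes.
    public static int HandleUiEventsWhileSaving(int simulatedIoMillis)
    {
        Thread ioThread = new Thread(() => SaveFile(simulatedIoMillis));
        ioThread.Start();

        int handledEvents = 0;
        // Join(timeout) returns false for as long as the IO thread is still running.
        while (!ioThread.Join(10))
        {
            handledEvents++; // stand-in for repainting or reacting to a click
        }
        return handledEvents;
    }

    public static void Main()
    {
        int handled = HandleUiEventsWhileSaving(200);
        Console.WriteLine("UI events handled during the save: {0}", handled);
    }
}
```

The calling thread keeps doing work while the save is in flight, which is the behaviour that keeps an IDE from appearing frozen.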

Threads can give the illusion of multitasking even though the CPU is executing only one thread at any given point in time. Each thread gets a slice of time on the CPU and is then switched out, either because it starts an operation that requires waiting without using the CPU or because it has used up its time slice. There are many nuances and intricacies in thread scheduling, but what we just described forms the basis of it.

With advances in hardware technology, multi-core machines are now common. Applications can take advantage of these architectures by having different cores run different threads at the same time.

Benefits of Threads

- Higher throughput, though in some pathological scenarios the overhead of context switching among threads can eat up any throughput gains and result in worse performance than a single-threaded design. However, such cases are exceptions rather than the norm.

- Responsive applications that give the illusion of multitasking.

- Efficient utilization of resources. Note that thread creation is lightweight in comparison to spawning a brand new process. Web servers that use threads instead of creating new processes when fielding web requests consume far fewer resources.

All other benefits of multi-threading are extensions of or indirect benefits of the above.

Performance Gains via Multi-Threading

As a concrete example, consider the code below. The task is to compute the sum of all the integers from 1 to Int32.MaxValue. In the first scenario, a single thread does the summation, while in the second scenario we split the range into two halves and have one thread sum each half. Once both threads are complete, we add the two half sums to get the combined sum. The code prints the result and the time taken for each scenario:

```csharp
using System;
using System.Threading;
using System.Diagnostics;

class Demonstration
{
    static void Main()
    {
        new SingleVsMultipleThreads().runTest();
    }
}

public class SingleVsMultipleThreads
{
    private static readonly long END = Int32.MaxValue;
    private static readonly long START = 1;

    // Sums the integers in the inclusive range [start, end].
    long sum(long start, long end)
    {
        long total = 0;
        for (long i = start; i <= end; i++)
        {
            total += i;
        }
        return total;
    }

    // One thread sums the whole range.
    void runSingleThreadTest()
    {
        Stopwatch stopwatch = Stopwatch.StartNew();
        Thread thread = new Thread(() =>
        {
            long result = sum(START, END);
            Console.WriteLine(String.Format("Single thread summed to {0}", result));
        });
        thread.Start();
        thread.Join();
        stopwatch.Stop();
        Console.WriteLine(String.Format("Single thread took {0} milliseconds to complete", stopwatch.ElapsedMilliseconds));
    }

    // Two threads each sum one half of the range.
    void runMultiThreadTest()
    {
        Stopwatch stopwatch = Stopwatch.StartNew();
        long result1 = 0;
        long result2 = 0;
        Thread thread1 = new Thread(() =>
        {
            result1 = sum(START, END / 2);
        });
        Thread thread2 = new Thread(() =>
        {
            result2 = sum(1 + (END / 2), END);
        });
        thread1.Start();
        thread2.Start();
        thread1.Join();
        thread2.Join();
        stopwatch.Stop();
        Console.WriteLine(String.Format("Multiple threads summed to {0}", result1 + result2));
        Console.WriteLine(String.Format("Multiple threads took {0} milliseconds to complete", stopwatch.ElapsedMilliseconds));
    }

    public void runTest()
    {
        runSingleThreadTest();
        runMultiThreadTest();
    }
}
```

When I run the above program on my MacBook, the two threads take 1829 milliseconds to calculate the sum, while a single thread takes 3536 milliseconds. You may observe different numbers, but on a machine with multiple cores the two threads should generally finish sooner than the single thread. The performance gains can be manyfold, depending on the availability of multiple CPUs and the nature of the problem being solved. However, there will always be problems that don't lend themselves to a multi-threaded approach and are solved just as efficiently with a single thread.
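The two-way split generalizes to any number of threads. The following is a minimal sketch of that idea, not part of the original program: `RangeSumSketch`, `SumRange` and `ParallelSum` are illustrative names, and using `Environment.ProcessorCount` as the thread count is an assumption rather than a tuned choice.

```csharp
using System;
using System.Threading;

public class RangeSumSketch
{
    // Sums the integers in the inclusive range [start, end] on the calling thread.
    static long SumRange(long start, long end)
    {
        long total = 0;
        for (long i = start; i <= end; i++) total += i;
        return total;
    }

    // Splits [start, end] into threadCount contiguous chunks, one thread per chunk.
    public static long ParallelSum(long start, long end, int threadCount)
    {
        long[] partials = new long[threadCount];
        Thread[] threads = new Thread[threadCount];
        long chunk = (end - start + 1) / threadCount;

        for (int t = 0; t < threadCount; t++)
        {
            int index = t; // copy so each lambda captures its own value
            long from = start + index * chunk;
            // The last thread also takes whatever remainder the division left over.
            long to = (index == threadCount - 1) ? end : from + chunk - 1;
            threads[index] = new Thread(() => partials[index] = SumRange(from, to));
            threads[index].Start();
        }

        long result = 0;
        for (int t = 0; t < threadCount; t++)
        {
            threads[t].Join(); // wait for the thread before reading its partial sum
            result += partials[t];
        }
        return result;
    }

    public static void Main()
    {
        int workers = Environment.ProcessorCount;
        Console.WriteLine("Sum with {0} threads: {1}", workers, ParallelSum(1, 1000000, workers));
    }
}
```

Each thread writes only to its own slot in `partials`, so no locking is needed; the main thread reads a slot only after joining the thread that wrote it.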

In general, tasks involving blocking operations, such as network and disk I/O, can see significant performance gains when migrated to a multithreaded or multiprocessor architecture.
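To see why blocking work benefits, here is a rough sketch that runs the same simulated requests first one after another and then on separate threads. `BlockingRequest` is a hypothetical placeholder, and `Thread.Sleep` is assumed as a stand-in for waiting on a network or disk; real I/O will behave less uniformly.

```csharp
using System;
using System.Diagnostics;
using System.Threading;

public class BlockingTasksSketch
{
    // Hypothetical blocking call; Thread.Sleep stands in for a network or disk wait.
    static void BlockingRequest(int waitMillis)
    {
        Thread.Sleep(waitMillis);
    }

    // Issues the requests one after another on the calling thread.
    public static long RunSequentially(int requests, int waitMillis)
    {
        Stopwatch stopwatch = Stopwatch.StartNew();
        for (int i = 0; i < requests; i++) BlockingRequest(waitMillis);
        return stopwatch.ElapsedMilliseconds;
    }

    // Issues each request on its own thread so that the waits overlap.
    public static long RunConcurrently(int requests, int waitMillis)
    {
        Stopwatch stopwatch = Stopwatch.StartNew();
        Thread[] threads = new Thread[requests];
        for (int i = 0; i < requests; i++)
        {
            threads[i] = new Thread(() => BlockingRequest(waitMillis));
            threads[i].Start();
        }
        foreach (Thread thread in threads) thread.Join();
        return stopwatch.ElapsedMilliseconds;
    }

    public static void Main()
    {
        Console.WriteLine("Sequential: {0} ms", RunSequentially(4, 200));
        Console.WriteLine("Concurrent: {0} ms", RunConcurrently(4, 200));
    }
}
```

Because the threads spend their time waiting rather than computing, the waits overlap even on a single core, which is why this kind of workload tends to gain the most from threads.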

Problems with Threads

There's no free lunch in life. The premium for using threads manifests in the following forms:

- Bugs are usually very hard to find, and some may only rear their heads in production environments.

- Higher cost of code maintenance since the code inherently becomes harder to reason about.

- Increased utilization of system resources. Each thread consumes additional memory and CPU cycles for bookkeeping, and time is wasted in context switches.

- Programs may experience slowdown, as coordination amongst threads comes at a price. Acquiring and releasing locks adds to program execution time, and threads competing for the same lock cause lock contention, where they sit waiting instead of doing work.
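The coordination cost in the last point can be made concrete with a shared counter. The sketch below is illustrative only: `SharedCounterSketch` and its members are hypothetical names. Several threads increment one counter, and a `lock` ensures that no increment is lost. Without the lock, `count++` is a read-modify-write sequence that threads can interleave, which is exactly the kind of bug that is hard to reproduce.

```csharp
using System;
using System.Threading;

public class SharedCounterSketch
{
    private readonly object gate = new object(); // guards count
    private long count = 0;

    void Increment(int times)
    {
        for (int i = 0; i < times; i++)
        {
            // Only one thread at a time may run this block; the others wait here.
            lock (gate)
            {
                count++;
            }
        }
    }

    // Runs threadCount threads that each increment the shared counter.
    public static long CountWithLock(int threadCount, int incrementsPerThread)
    {
        SharedCounterSketch counter = new SharedCounterSketch();
        Thread[] threads = new Thread[threadCount];
        for (int t = 0; t < threadCount; t++)
        {
            threads[t] = new Thread(() => counter.Increment(incrementsPerThread));
            threads[t].Start();
        }
        foreach (Thread thread in threads) thread.Join();
        return counter.count;
    }

    public static void Main()
    {
        Console.WriteLine("Final count: {0}", CountWithLock(4, 100000));
    }
}
```

The lock makes the result correct, but every increment now pays for acquiring and releasing it, and threads queue up behind one another whenever they reach the lock at the same time.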

With this backdrop, let's delve into more details of concurrent programming that you are likely to be quizzed about in an interview.