What are Support Vector Machines (SVM)?

Support Vector Machine (SVM) is a supervised machine learning algorithm used for classification and regression.

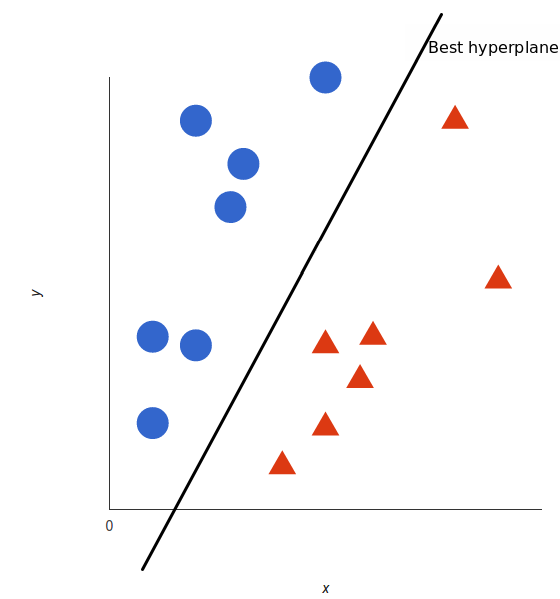

For classification, SVM finds a hyperplane that acts as the boundary between two classes of data.

In 2-D space, this hyperplane is a line.

SVM treats each data item as a point in an N-dimensional space, where N is the number of features (attributes) of the data. It then finds a hyperplane that separates the classes.
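As a rough illustration of what "separating with a hyperplane" means, the sketch below classifies 2-D points by the sign of `w . x + b`. The weights `w`, the offset `b`, and the sample points are hypothetical values chosen by hand, not the output of a trained SVM.

```python
def decision_value(w, b, x):
    """Signed score w . x + b; its sign says which side of the hyperplane x lies on."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def classify(w, b, x):
    """Return +1 for points on or above the hyperplane, -1 otherwise."""
    return 1 if decision_value(w, b, x) >= 0 else -1


# Hypothetical hyperplane in 2-D (a line): x1 + x2 - 3 = 0
w = [1.0, 1.0]
b = -3.0

# Hypothetical data points, each with two features
points = [(0.5, 1.0), (3.0, 2.0), (1.0, 1.5), (4.0, 4.0)]
labels = [classify(w, b, p) for p in points]
print(labels)
```

With three features the same code describes a plane, and with N features a hyperplane; only the length of `w` and of each point changes.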

A standard SVM is a binary classifier: it separates data into two classes. For more than two classes, the mechanism is a little different.

SVM and Multi-Classes

A common approach, known as one-vs-rest, creates a binary classifier for each class of data. Each classifier returns a boolean result: whether the data belongs to that class or not.

For example, given a set of chocolates of different brands, a separate binary classification is performed for each brand. The ‘Hersheys’ classifier predicts whether a chocolate is Hersheys or not.
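The per-class idea can be sketched as follows. This is a minimal illustration, assuming each class already has a linear binary classifier. The class names other than "Hersheys", the two features, and all weights are made up for the example. Picking the class with the highest score is one common way to resolve cases where several classifiers, or none, say "yes".

```python
def score(weights, bias, x):
    """Linear score of one binary classifier; positive means 'belongs to this class'."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


# Hypothetical (weights, bias) per class; the two features are placeholders
classifiers = {
    "Hersheys": ([-1.0, 2.0], -0.5),
    "BrandB": ([2.0, -1.0], -0.5),
    "BrandC": ([0.5, 0.5], -0.6),
}


def one_vs_rest_predict(x):
    """Run every binary classifier and return (best class, per-class boolean answers)."""
    scores = {name: score(w, b, x) for name, (w, b) in classifiers.items()}
    is_member = {name: s > 0 for name, s in scores.items()}
    best = max(scores, key=scores.get)
    return best, is_member


best, is_member = one_vs_rest_predict((0.2, 0.9))
print(best, is_member)
```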

SVM Methodology

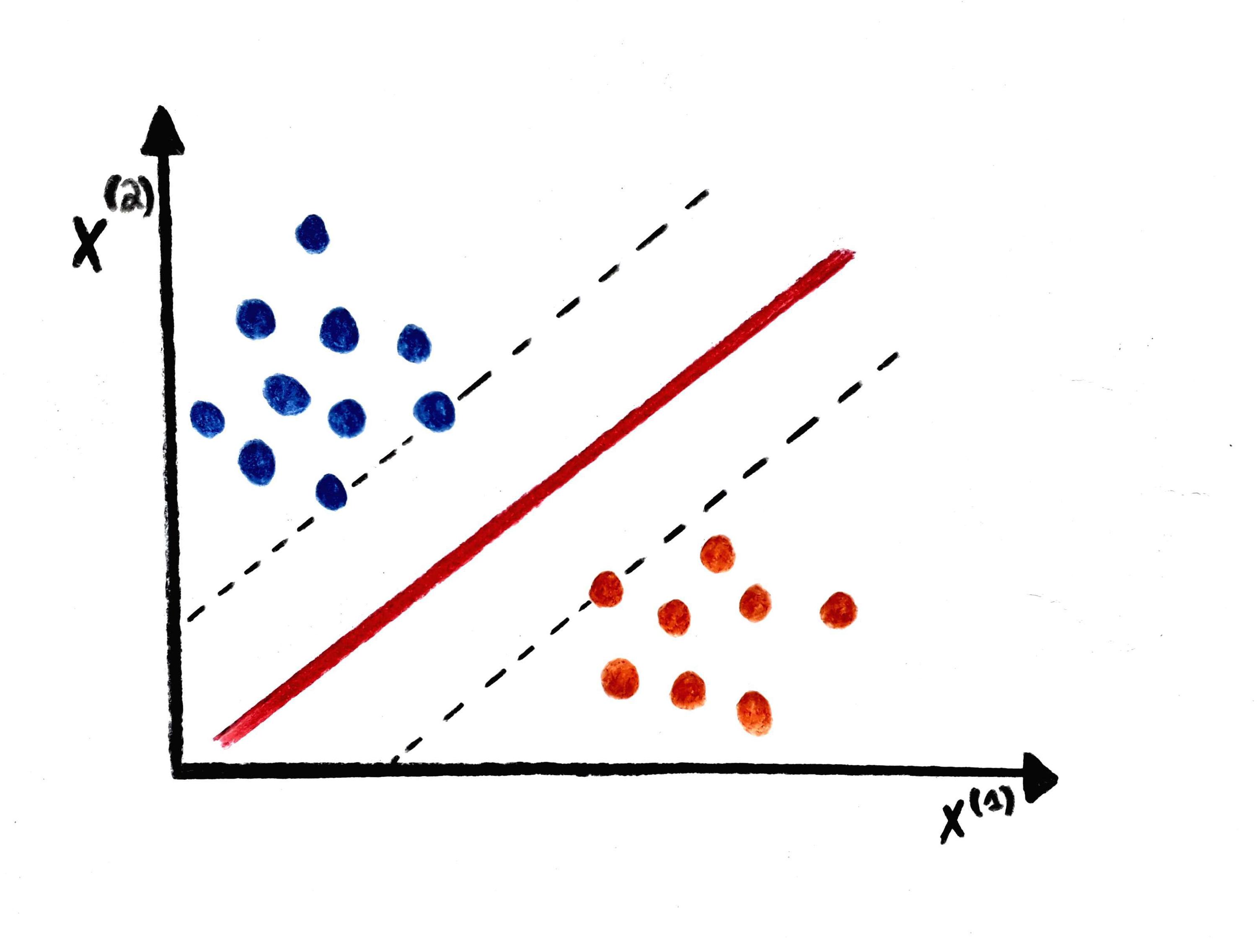

SVM uses a simple technique. First, it identifies the data points from each class that lie closest to the other class; these points are called “support vectors.” Then, the model places the separating line so that it is equidistant from the support vectors on both sides, which makes the gap (the margin) between the classes as wide as possible. This line is called the “best hyper-plane.”

Next, it creates two imaginary lines passing through these “support vectors,” which are parallel to the best hyper-plane and are called the positive and negative hyper-planes. Each data point is then classified according to which side of the best hyper-plane it falls on, that is, whether it lies toward the positive or the negative hyper-plane. In this way, the entire data set is classified.
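To make the geometry concrete, here is a small sketch on hand-picked toy data. The values of `w` and `b` are assumed rather than trained. They are scaled so that the positive and negative hyper-planes are `w . x + b = +1` and `w . x + b = -1`, a common convention under which the distance between the two is `2 / ||w||`.

```python
import math


def decision_value(w, b, x):
    """Signed score w . x + b for a point x."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


# Toy data chosen by hand for illustration
positives = [(2.0, 2.0), (3.0, 3.0)]
negatives = [(0.0, 0.0), (-1.0, 0.0)]

# Assumed separating hyperplane for this toy data (not computed by a solver)
w = (0.5, 0.5)
b = -1.0

# Support vectors are the points lying exactly on the positive or negative hyper-plane
support_vectors = [
    p for p in positives + negatives
    if math.isclose(abs(decision_value(w, b, p)), 1.0)
]

# Distance between the positive and negative hyper-planes
margin_width = 2.0 / math.sqrt(sum(wi * wi for wi in w))

print(support_vectors)
print(round(margin_width, 3))
```

Here the margin width equals the distance between the two closest points of opposite classes, (2, 2) and (0, 0), which is why no wider margin is possible for this data.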

SVM for complex (non-linearly separable) data

It is easy to separate linearly separable data: the classes can be divided using a straight line. However, for non-linearly separable data, a kernelized SVM is used.

Suppose there is a set of one-dimensional data that is not linearly separable. The kernel maps each point in one dimension to an ordered pair in two dimensions, for example x to (x, x²). As a result, the data can become linearly separable in two dimensions. In general, data can be mapped to a higher dimension in which it becomes linearly separable.
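The mapping idea can be tried on a tiny example. The sketch below assumes the feature map `phi(x) = (x, x*x)` and a hand-picked separating line in 2-D; both are illustrative choices, as are the data points. It also shows the matching kernel function, which gives the same inner product as the mapped points without building them explicitly. That shortcut is what kernelized SVMs rely on in practice.

```python
def phi(x):
    """Assumed feature map from 1-D to 2-D: x -> (x, x^2)."""
    return (x, x * x)


def kernel(x, y):
    """Kernel matching phi: equals the dot product of phi(x) and phi(y)."""
    return x * y + (x * x) * (y * y)


# Hypothetical 1-D data: the -1 class sits between two groups of the +1 class
xs = [-3, -2, -1, 0, 1, 2, 3]
ys = [1, 1, -1, -1, -1, 1, 1]

# In 1-D, a single threshold can only separate labels that switch at most once
sorted_labels = [y for _, y in sorted(zip(xs, ys))]
label_changes = sum(a != b for a, b in zip(sorted_labels, sorted_labels[1:]))

# After mapping, the horizontal line x2 = 2.5 (chosen by hand) separates the classes
mapped = [phi(x) for x in xs]
predictions = [1 if x2 - 2.5 > 0 else -1 for _, x2 in mapped]

print(label_changes)
print(predictions == ys)
```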
