Join 3 million developers at

LEARNING OBJECTIVES

- An understanding of Google BERT’s architecture, pre-training tasks (MLM, NSP), and transformer fundamentals like self-attention and multi-head attention

- The ability to apply and fine-tune pretrained BERT models for NLP tasks such as sentiment analysis, NER, question answering, and domain-specific applications

- Familiarity with BERT variants (ALBERT, RoBERTa, ELECTRA) and lightweight models using knowledge distillation (DistilBERT, TinyBERT)

- The ability to utilize advanced BERT applications, including text summarization (BERTSUM), multilingual models (M-BERT), and multimodal tools like VideoBERT

- The ability to build real-world projects using BERT libraries like Hugging Face Transformers and apply domain-specific models like BioBERT and FinBERT

Learning Roadmap

3. A Primer on Transformers

18 Lessons

Work your way through the transformer architecture, including encoder-decoder components and self-attention mechanisms.

4. Understanding the BERT Model

14 Lessons

Grasp the fundamentals of the BERT model's architecture, training, and tokenization methods.

5. Getting Hands-On with BERT

11 Lessons

Solve problems in applying pre-trained BERT for various NLP tasks using embeddings.

7. Different BERT Variants

12 Lessons

Practice using ALBERT, RoBERTa, ELECTRA, and SpanBERT for task-specific NLP improvements.

8. BERT Variants Based on Knowledge Distillation

14 Lessons

Try out knowledge distillation in BERT variants, including DistilBERT and TinyBERT.

10. Exploring BERTSUM for Text Summarization

8 Lessons

Examine text summarization and fine-tuning BERTSUM for extractive and abstractive summaries.

11. Applying BERT to Other Languages

18 Lessons

Grasp the fundamentals of utilizing multilingual and monolingual BERT models in various languages.

12. Exploring Sentence and Domain-Specific BERT

10 Lessons

Dig into Sentence-BERT enhancements and domain-specific adaptations like ClinicalBERT and BioBERT.

13. Working with VideoBERT, BART, and More

10 Lessons

See how VideoBERT integrates video and language, and explore BART's text and document summarization.

Certificate of Completion

Showcase your accomplishment by sharing your certificate of completion.

Developed by MAANG Engineers

ABOUT THIS COURSE

This comprehensive course dives into Google’s BERT architecture, exploring its revolutionary role in natural language processing (NLP). Starting with BERT’s architecture and pre-training methods, you’ll uncover the mechanics of transformers, including encoder-decoder components and self-attention mechanisms. Gain hands-on experience fine-tuning BERT for NLP tasks like sentiment analysis, question answering, and named entity recognition.

Discover BERT variants such as ALBERT, RoBERTa, and DistilBERT alongside domain-specific adaptations like ClinicalBERT and BioBERT. Explore applications in text summarization, multilingual tasks, and advanced models like VideoBERT and BART. With practical coding exercises and quizzes, you’ll master embeddings, tokenization, and BERT libraries, equipping you to build cutting-edge NLP solutions.

Whether you’re new to Google BERT or enhancing your expertise, this course is your guide to state-of-the-art NLP innovations.

ABOUT THE AUTHOR

Packt

A tech learning platform that provides online courses, eBooks, videos, and other resources to help individuals and organizations stay ahead of emerging and popular technologies.

Trusted by 3 million developers working at companies

Built for 10x Developers

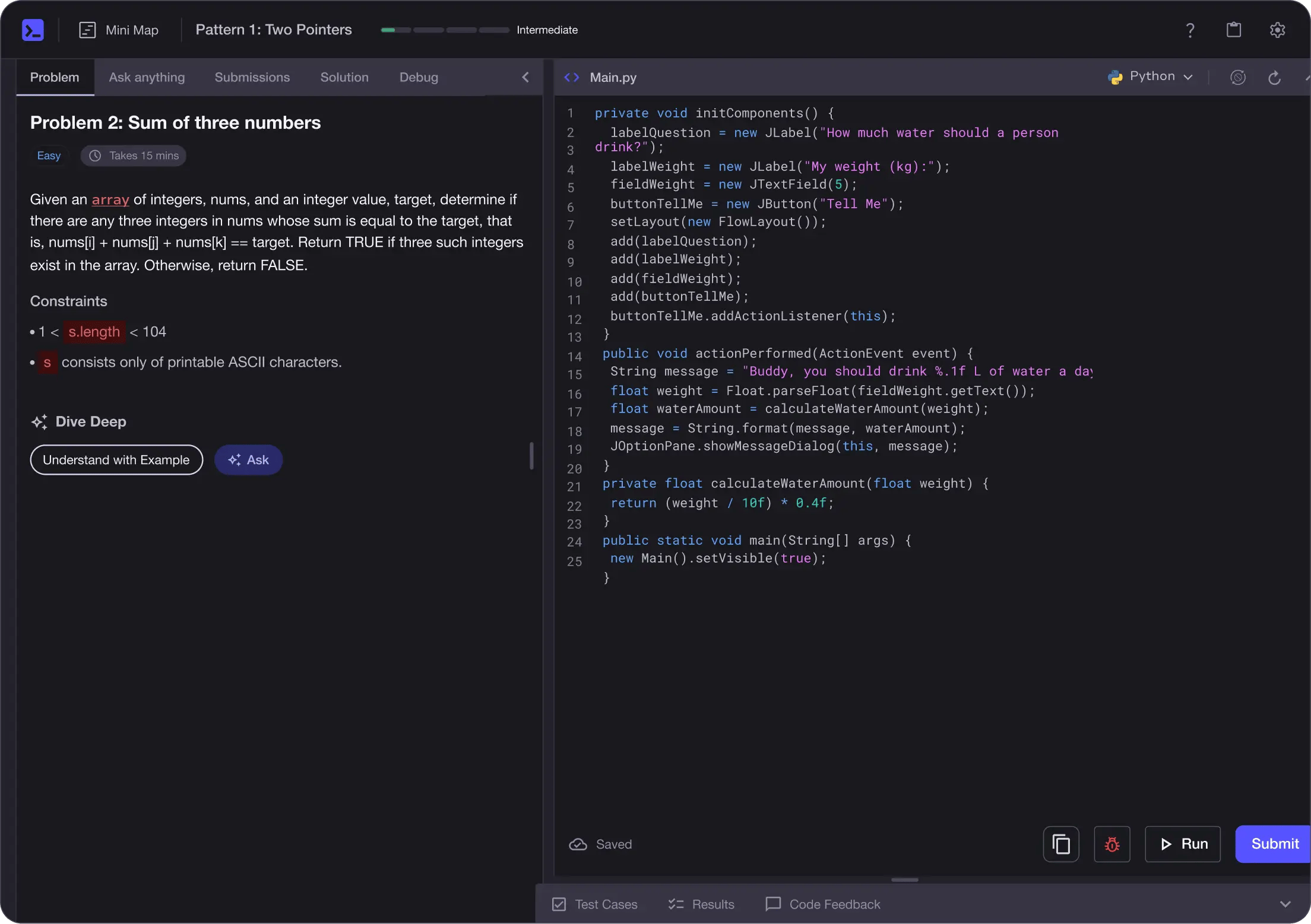

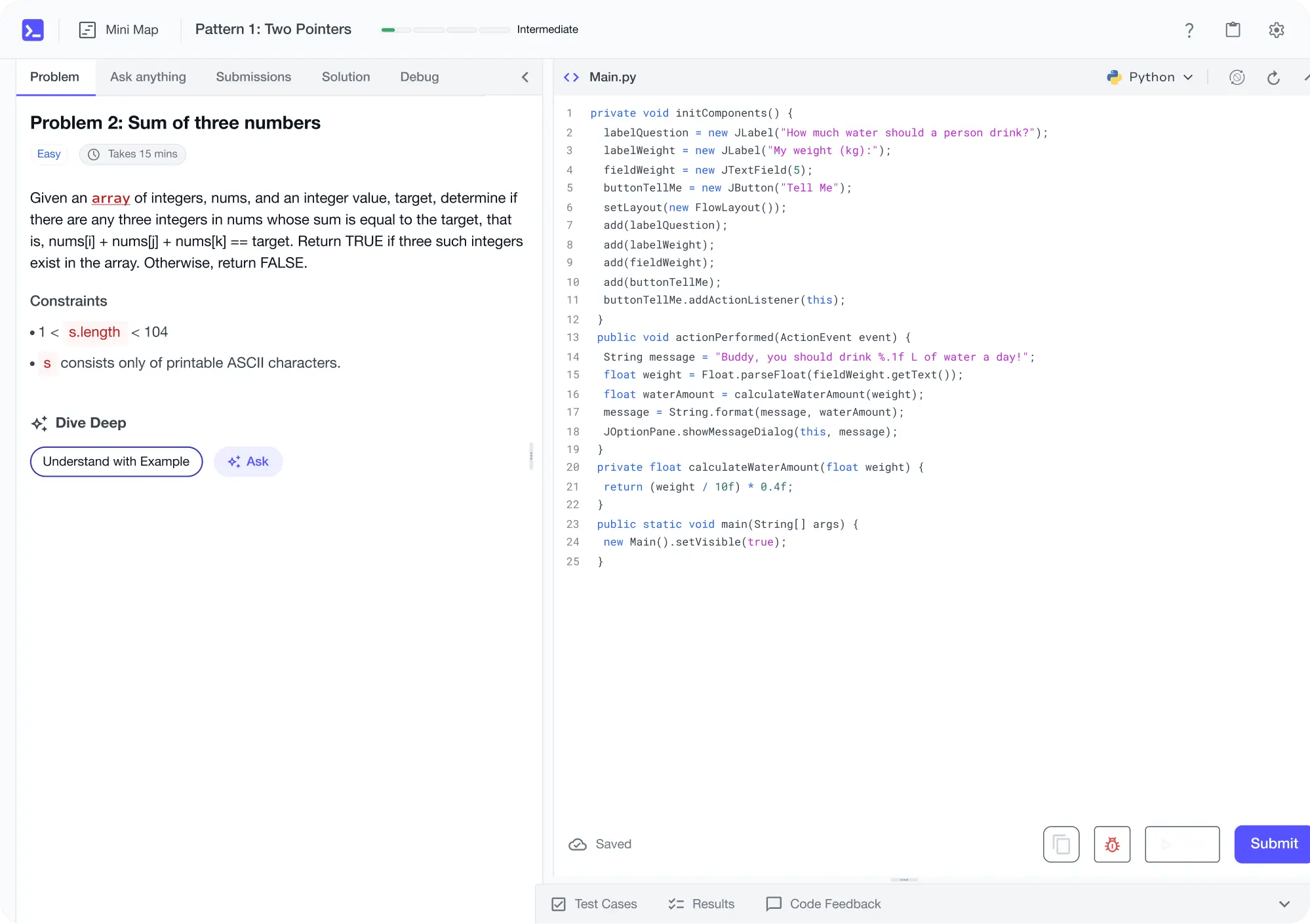

No Passive Learning

Learn by building with project-based lessons and in-browser code editor

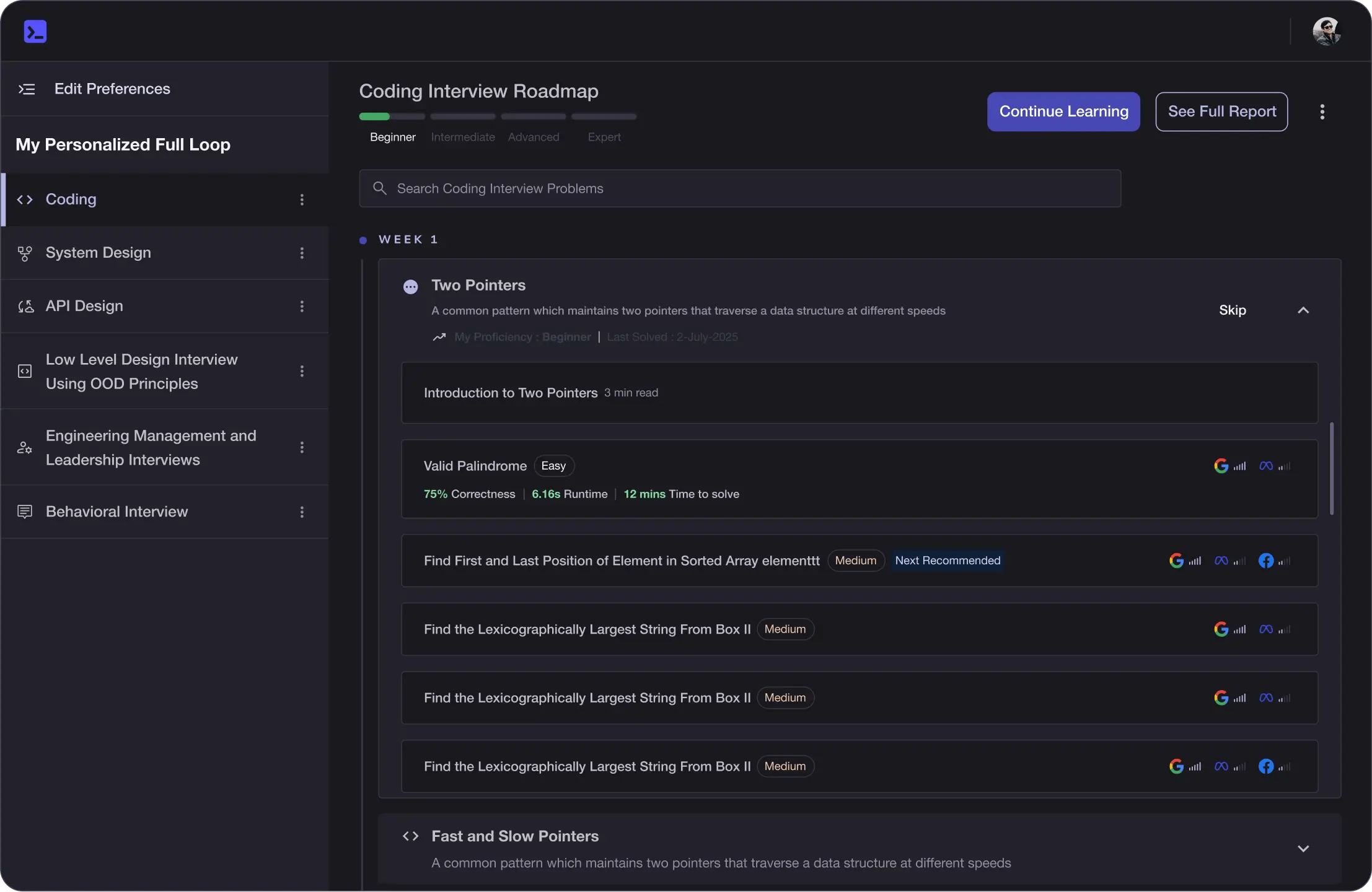

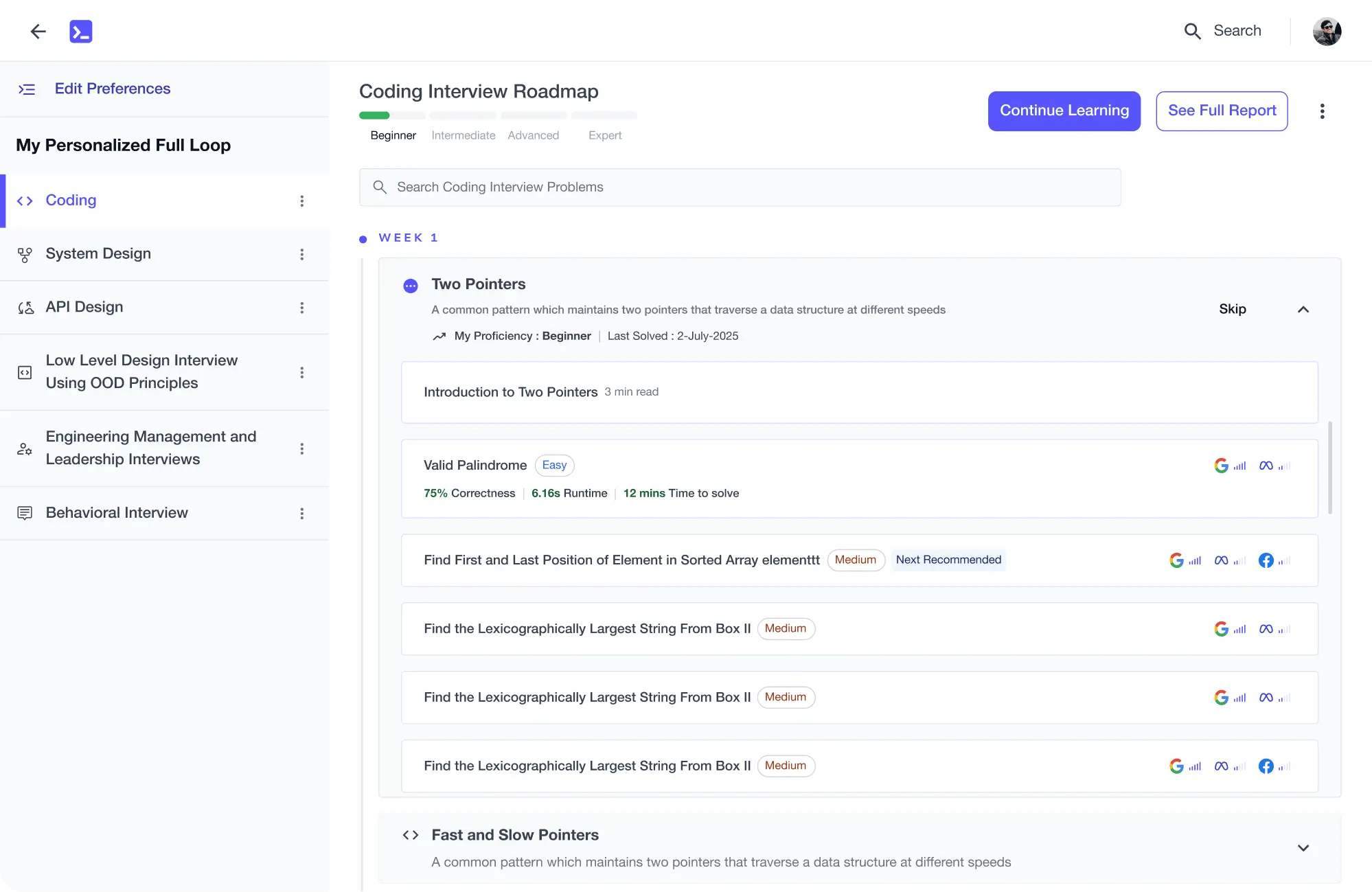

Personalized Roadmaps

The platform adapts to your strengths & skills gaps as you go

Future-proof Your Career

Get hands-on with in-demand skills

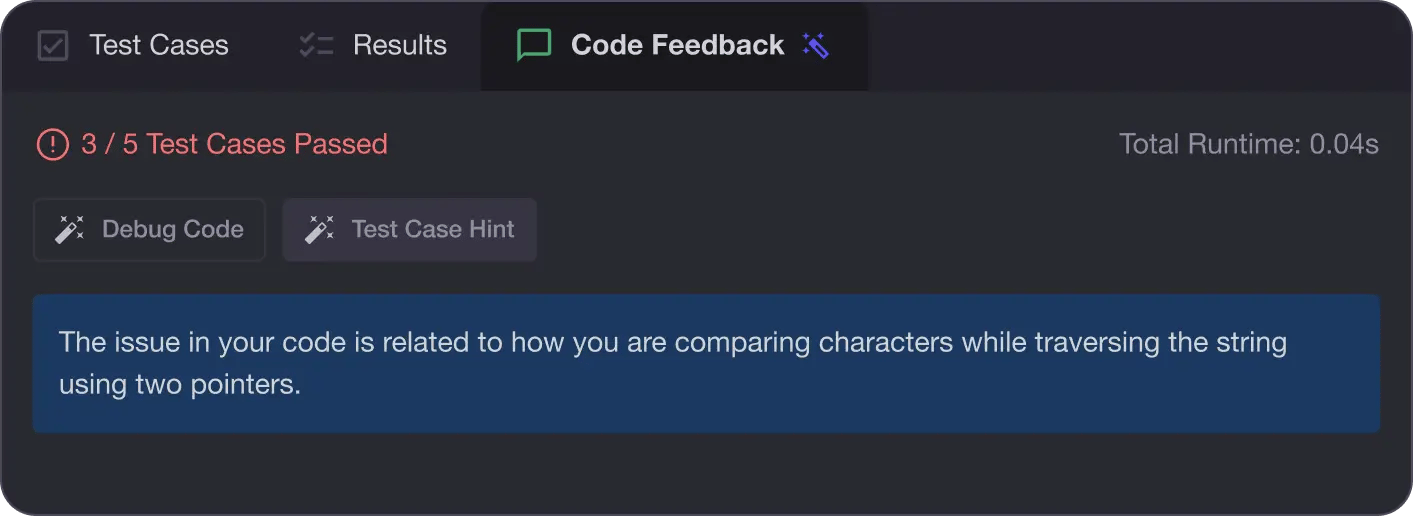

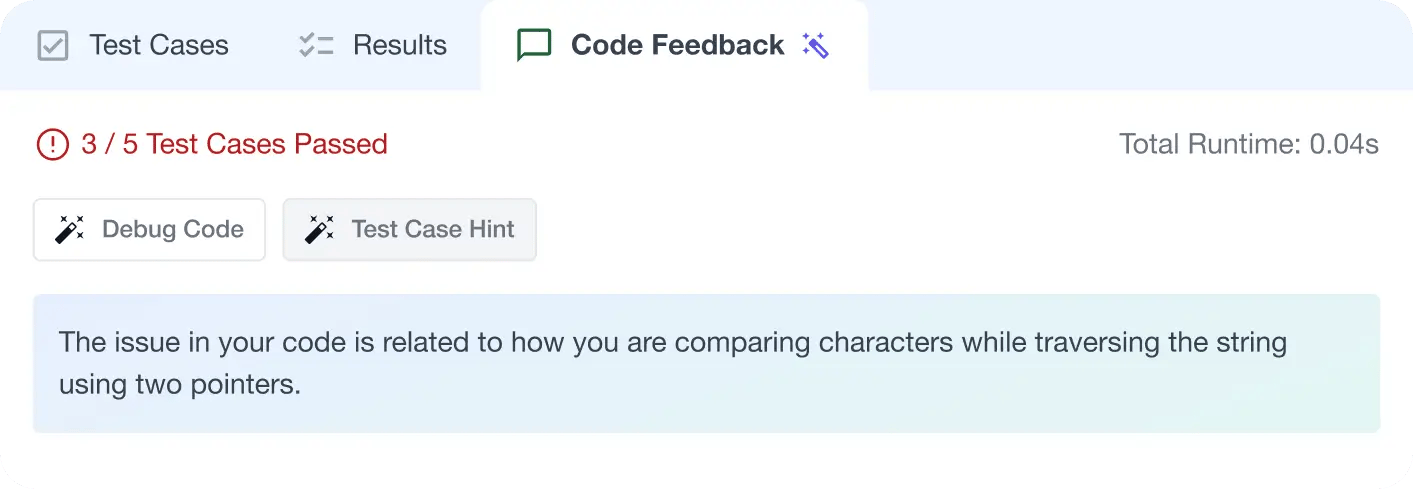

AI Code Mentor

Write better code with AI feedback, smart debugging, and "Ask AI"

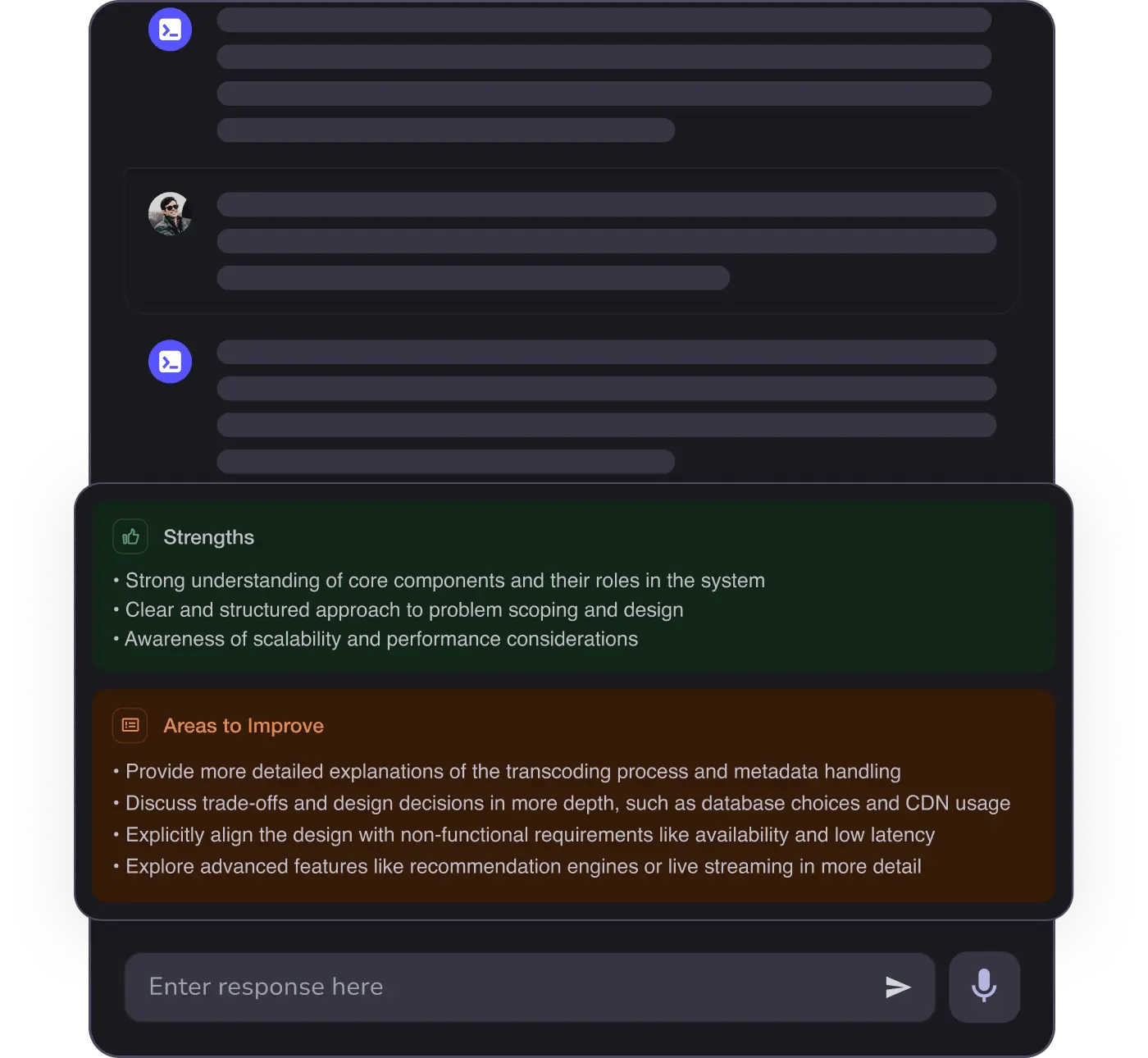

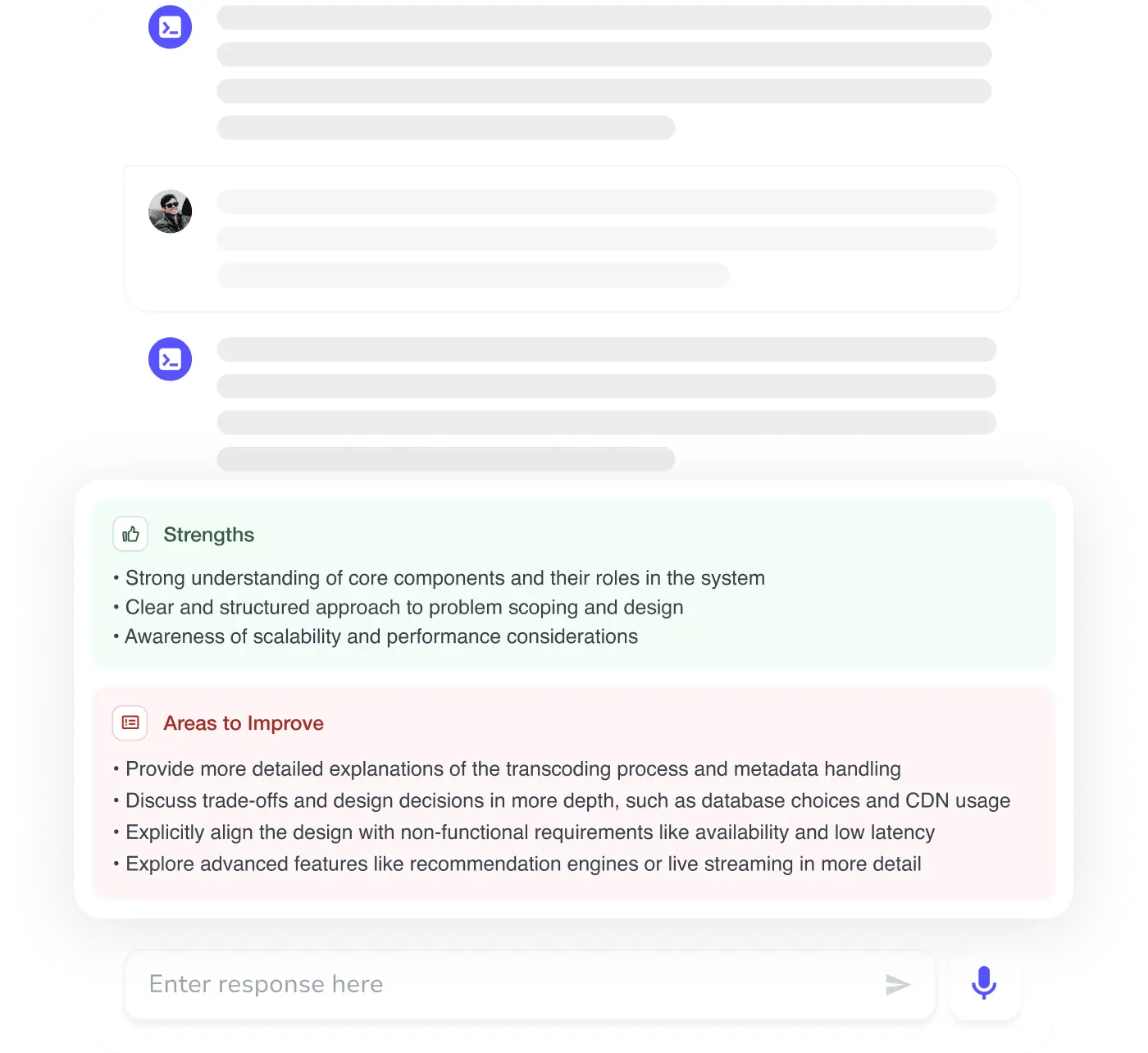

MAANG+ Interview Prep

AI Mock Interviews simulate every technical loop at top companies
