LLM Evaluation: Building Reliable AI Systems at Scale

Learn to capture traces, generate synthetic data, evaluate agents and RAG systems, and build production-ready testing workflows so your LLM apps stay reliable and scalable.

- Evaluate the limitations of common model benchmarks for real-world AI products and differentiate between model and system evaluation.

- Design and implement various LLM evaluation methods, including automated checks, human reviews, and A/B tests, to ensure system quality.

- Analyze LLM traces and conduct systematic error analysis to identify and categorize failures for improved system reliability.

- Capture and review end-to-end LLM traces effectively, utilizing best practices for data storage and annotation to support reliable evaluations.

- Generate structured synthetic data to expose diverse behaviors and edge cases in LLM systems, guiding targeted testing and evaluation.

- Implement pass/fail evaluation methods to enhance clarity and accelerate model improvement, focusing on actual system behavior.
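To make the pass/fail objective above concrete, here is a minimal sketch of binary checks run over captured traces. The `Trace` shape, the two check functions, and the `evaluate` helper are all hypothetical names chosen for illustration, not part of the course material or any library.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Trace:
    """One recorded interaction (hypothetical shape): input plus final output."""
    user_input: str
    output: str


# Each check returns True (pass) or False (fail); there are no partial scores.
def non_empty_output(trace: Trace) -> bool:
    return bool(trace.output.strip())


def no_unresolved_placeholder(trace: Trace) -> bool:
    # Assumption: template placeholders look like {{name}} in this system.
    return "{{" not in trace.output and "}}" not in trace.output


CHECKS: dict[str, Callable[[Trace], bool]] = {
    "non_empty_output": non_empty_output,
    "no_unresolved_placeholder": no_unresolved_placeholder,
}


def evaluate(traces: list[Trace]) -> dict[str, list[int]]:
    """Return, for each check, the indices of the traces that failed it."""
    failures: dict[str, list[int]] = {name: [] for name in CHECKS}
    for index, trace in enumerate(traces):
        for name, check in CHECKS.items():
            if not check(trace):
                failures[name].append(index)
    return failures


traces = [
    Trace("Say hi", "Hello!"),
    Trace("Where is my order?", "Your order {{order_id}} has shipped."),
    Trace("Summarize this", "   "),
]
report = evaluate(traces)
print(report)
```

Returning the failing trace indices, rather than an averaged score, keeps each result tied to a specific trace that can be opened and reviewed during error analysis.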

Demonstrate your ability to design and implement effective LLM evaluation strategies during technical interviews.

Apply systematic evaluation techniques to ensure your AI systems behave predictably and reliably in production environments.

Conduct thorough error analysis and trace evaluations to identify failure points and improve AI system performance.

Embed evaluation processes into continuous integration pipelines, ensuring ongoing reliability as AI systems evolve.

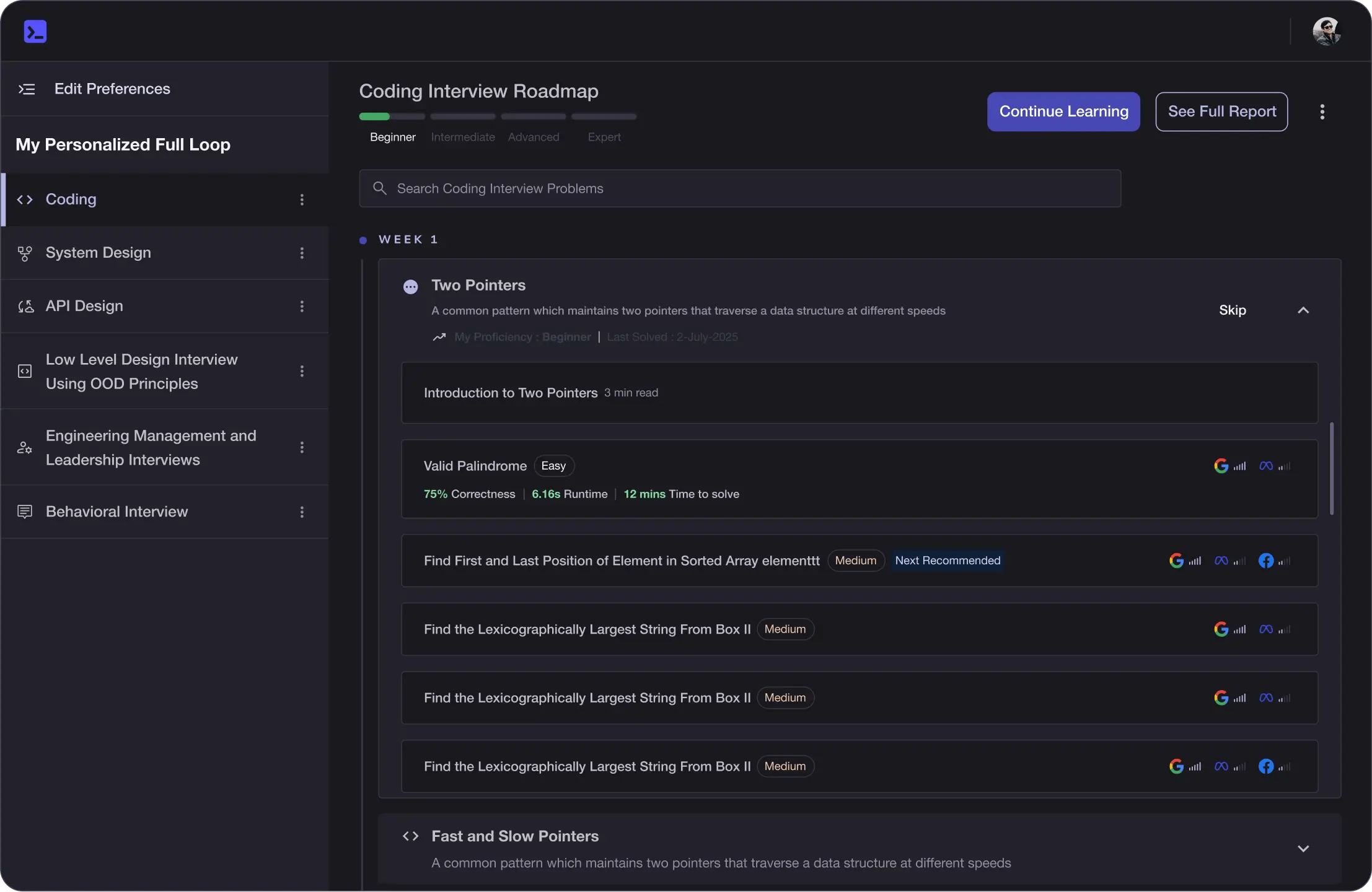

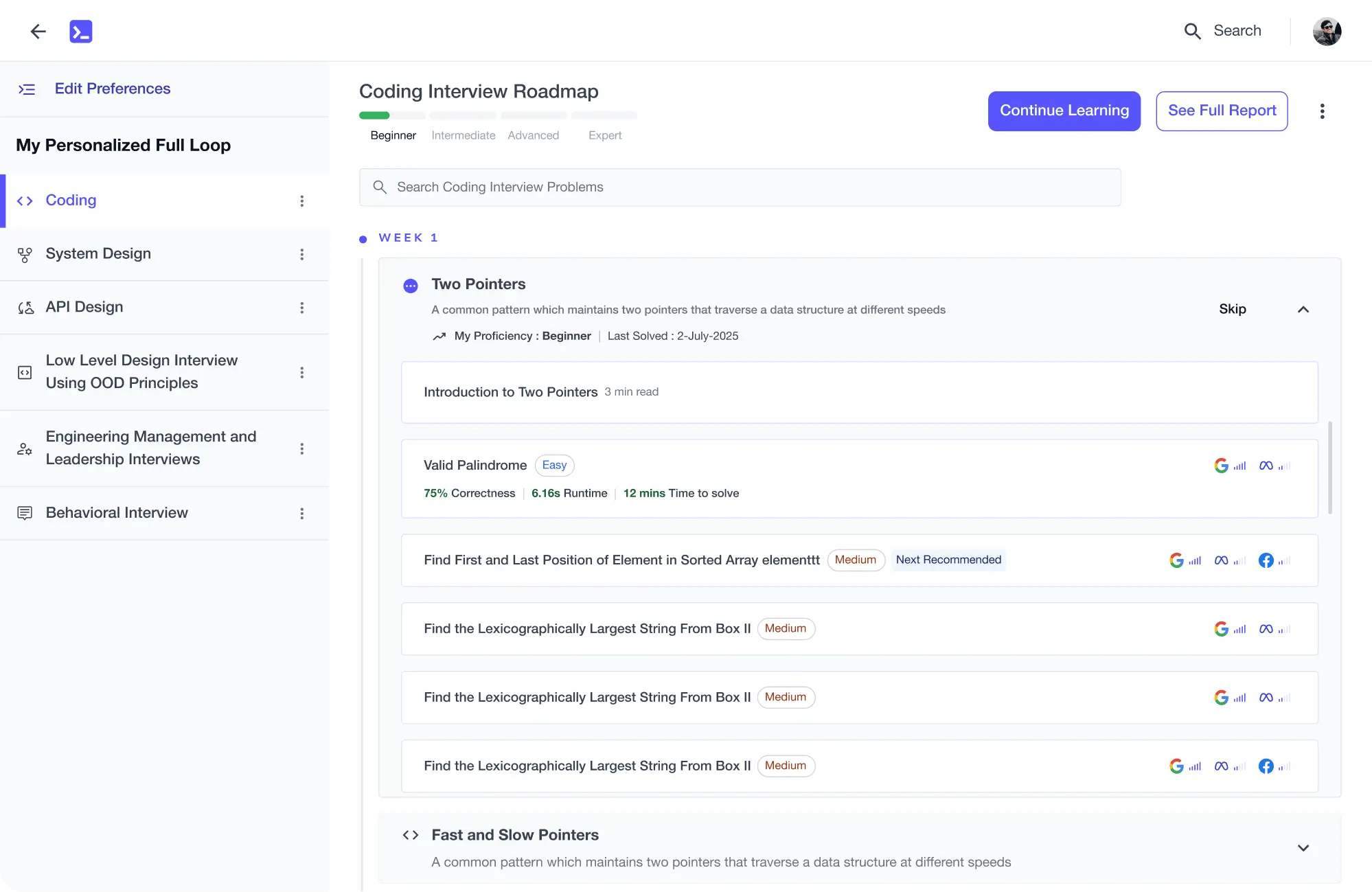

Learning Roadmap

1. Foundations of AI Evaluation

2. Building the Evaluation Workflow

3. Scaling Evaluation Beyond the Basics (3 Lessons)

4. Evaluating Real Systems in Production (3 Lessons)

5. Wrap Up (4 Lessons)

Khayyam Hashmi

Computer scientist and Generative AI and Machine Learning specialist. VP of Technical Content @ educative.io.
