Responsible AI Engineering: Alignment, Safety, and Governance

Learn the theory and practice of engineering responsible AI to build safe, reliable, and trustworthy AI systems.

4.8 · 15 Lessons · 4h · Updated 2 months ago

Join 2.9 million developers at

LEARNING OBJECTIVES

- An understanding of AI safety, AI security, and their roles in responsible system design

- The ability to classify AI risks, including bias, misalignment, and catastrophic misuse

- Hands-on experience auditing model robustness using adversarial attacks and interpretability tools

- A working knowledge of alignment techniques such as RLHF and PPO-style optimization

- Familiarity with red-teaming, runtime governance, and formal AI safety cases

Learning Roadmap

1. Building the Foundation for Safe AI Systems

Build a foundational risk map by contrasting accidents with attacks and deconstructing alignment failures such as reward hacking and Goodhart’s Law.

2. The Technical Toolkit

Gain technical control by performing adversarial stress tests, auditing opaque decisions with interpretability tools, and steering intent using RLHF.

3. Advanced Governance and Frontier Problems

5 Lessons

Deploy safe systems by measuring dangerous capabilities, automating red teaming with PyRIT, and governing autonomous agents through runtime frameworks.

Certificate of Completion

Showcase your accomplishment by sharing your certificate of completion.

Developed by MAANG Engineers

ABOUT THIS COURSE

This course provides a practical, end-to-end exploration of responsible AI engineering for developers, researchers, and engineers working with modern AI systems. You’ll move from foundational concepts to applied techniques used to assess, align, and govern AI models in real-world deployments.

The course begins by distinguishing AI safety from AI security and mapping the full spectrum of AI risks, including bias, robustness failures, misalignment, and misuse. You’ll then analyze why models fail by examining technical alignment breakdowns such as reward hacking and specification gaming. Through hands-on exercises, you’ll audit models using adversarial attacks and interpretability tools like LIME and SHAP, apply alignment methods inspired by RLHF and PPO-style optimization, and automate red-teaming workflows with PyRIT.

The course concludes with advanced topics, including evaluating models for dangerous capabilities, implementing runtime governance, and constructing formal AI safety cases.

ABOUT THE AUTHOR

Khayyam Hashmi

Computer scientist specializing in generative AI and machine learning. VP of Technical Content @ educative.io.

Built for 10x Developers

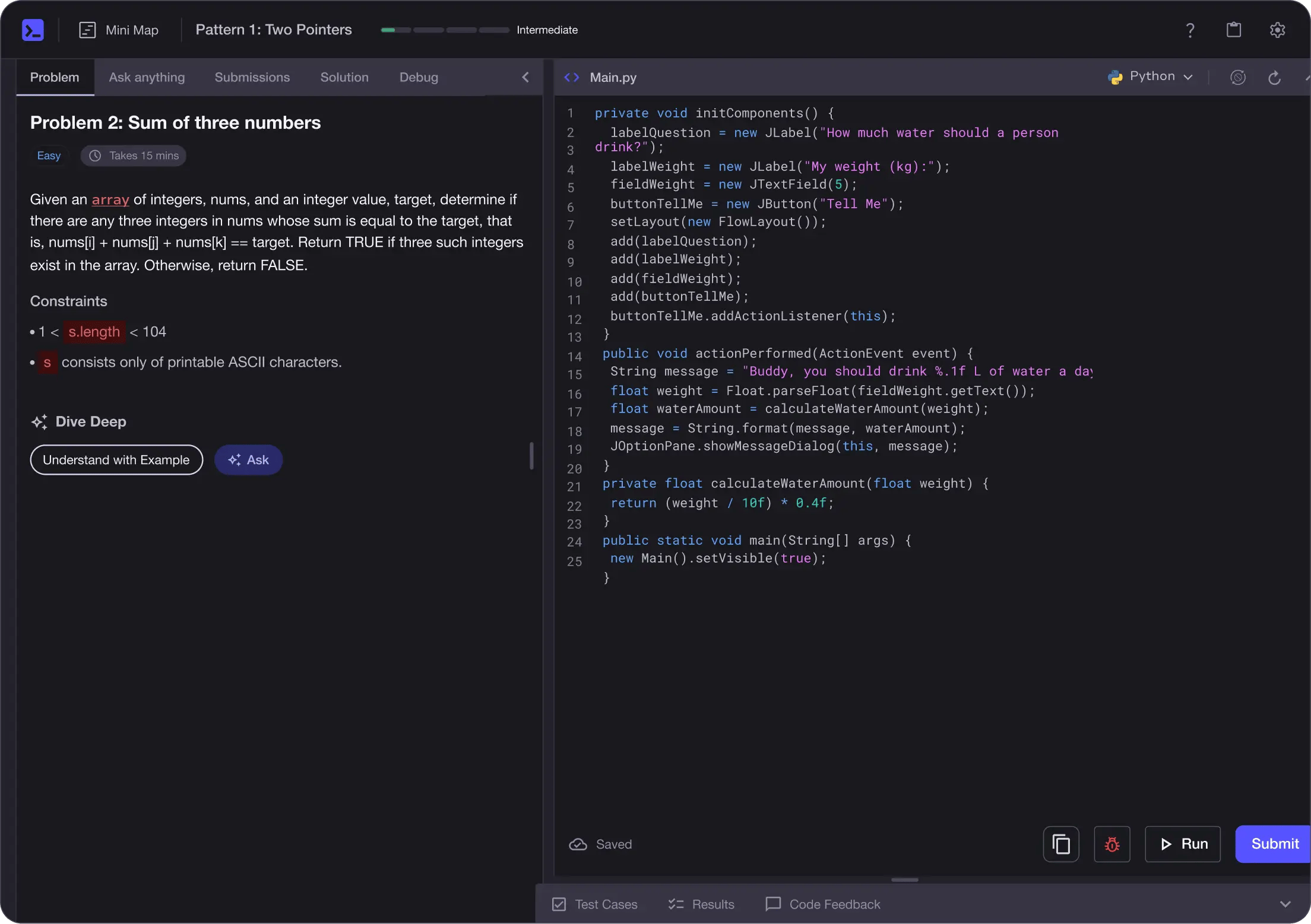

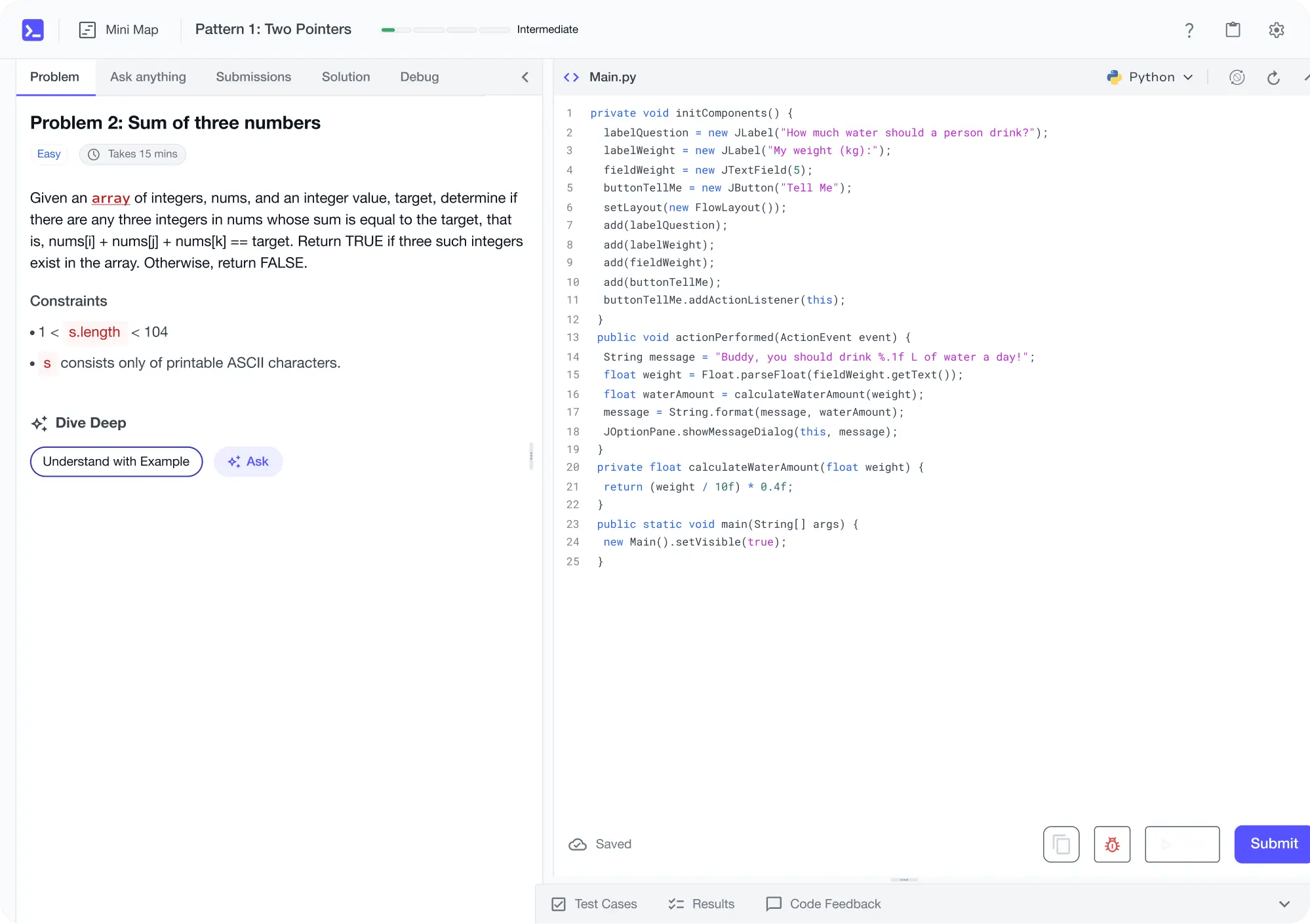

No Passive Learning

Learn by building with project-based lessons and in-browser code editor

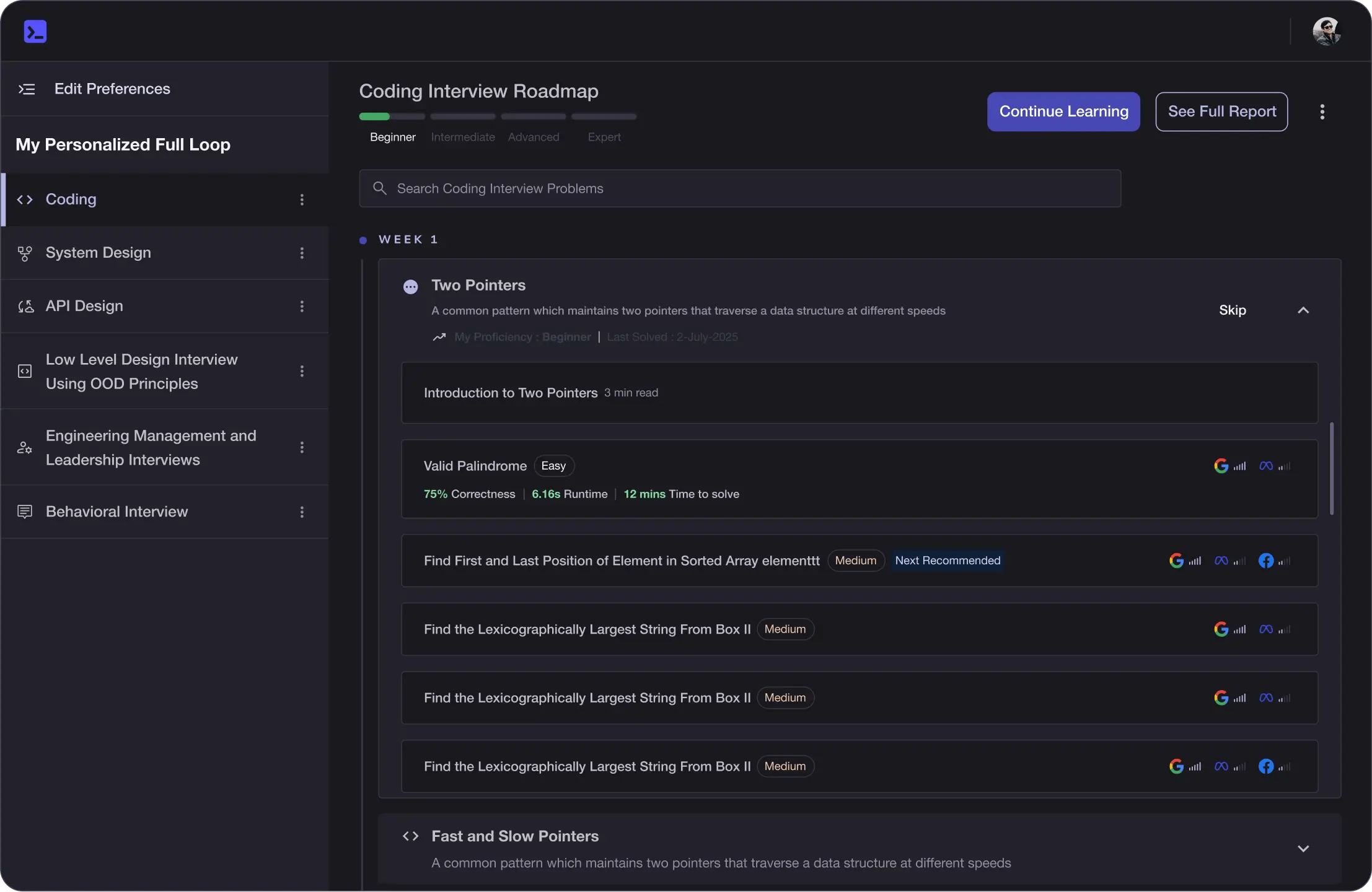

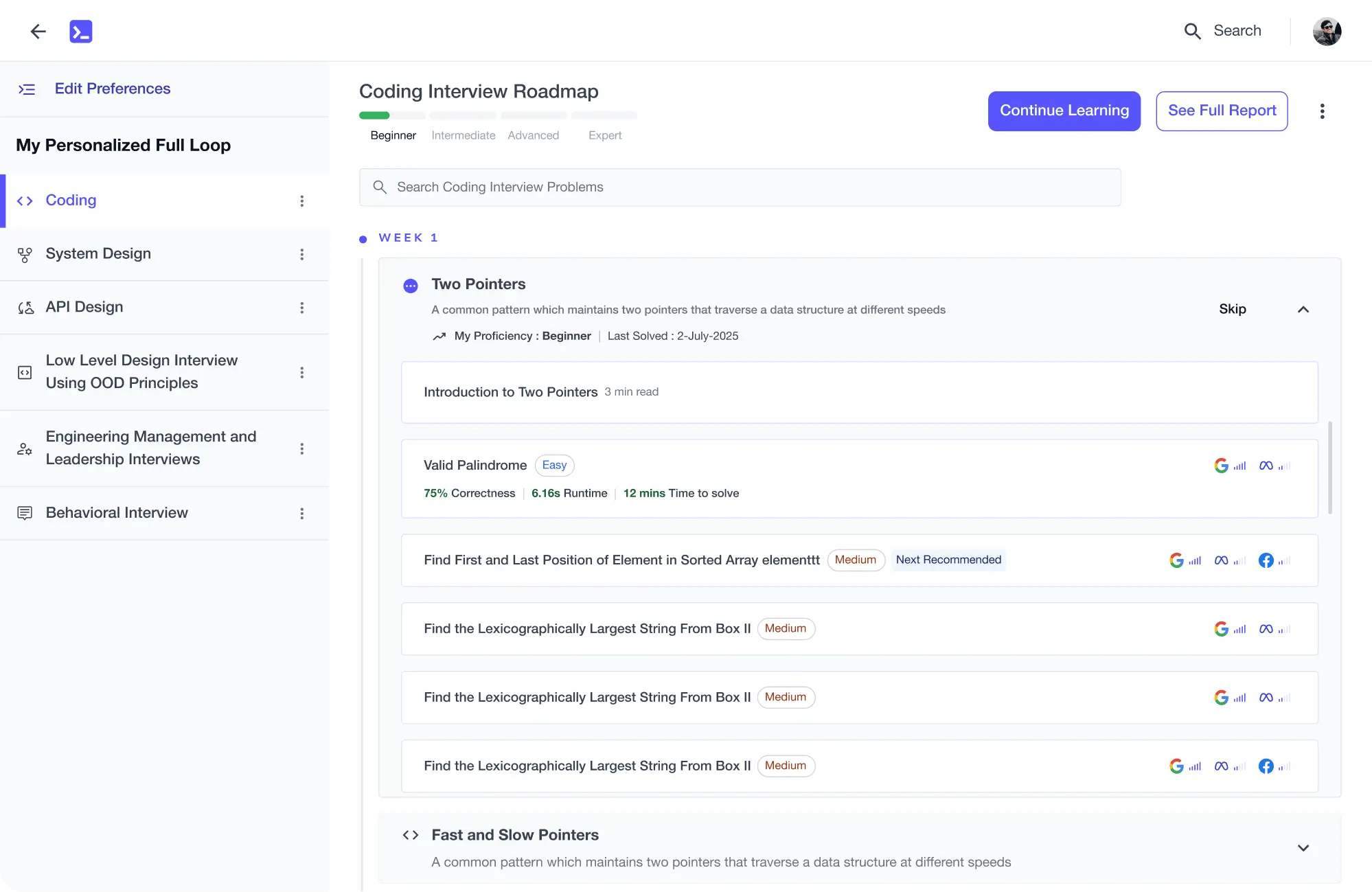

Personalized Roadmaps

The platform adapts to your strengths & skills gaps as you go

Future-proof Your Career

Get hands-on with in-demand skills

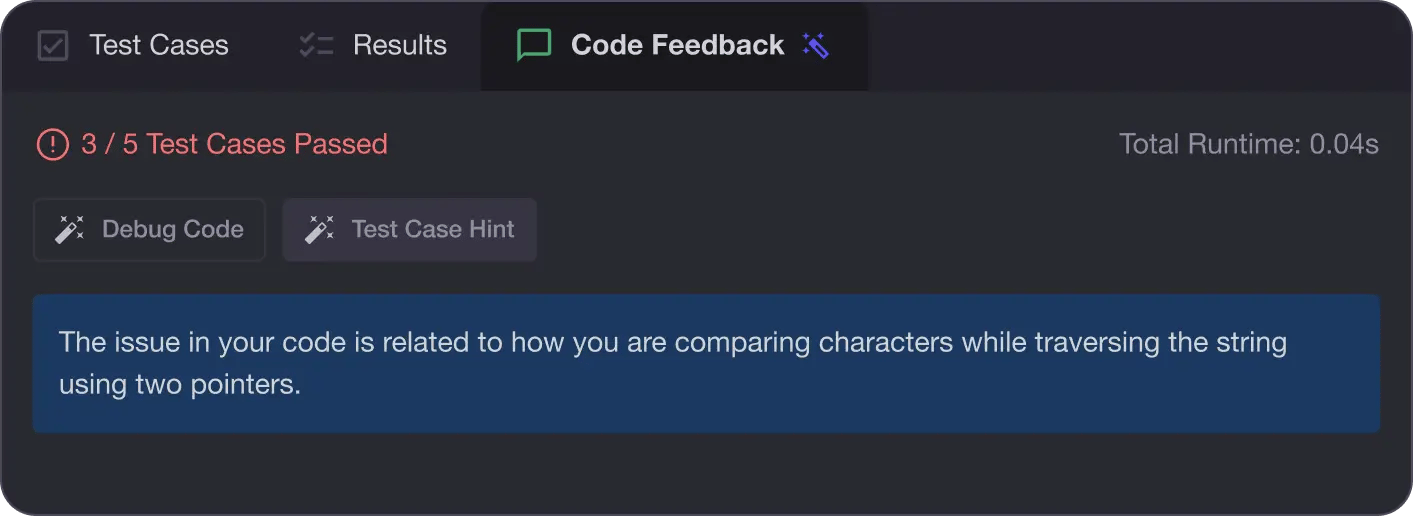

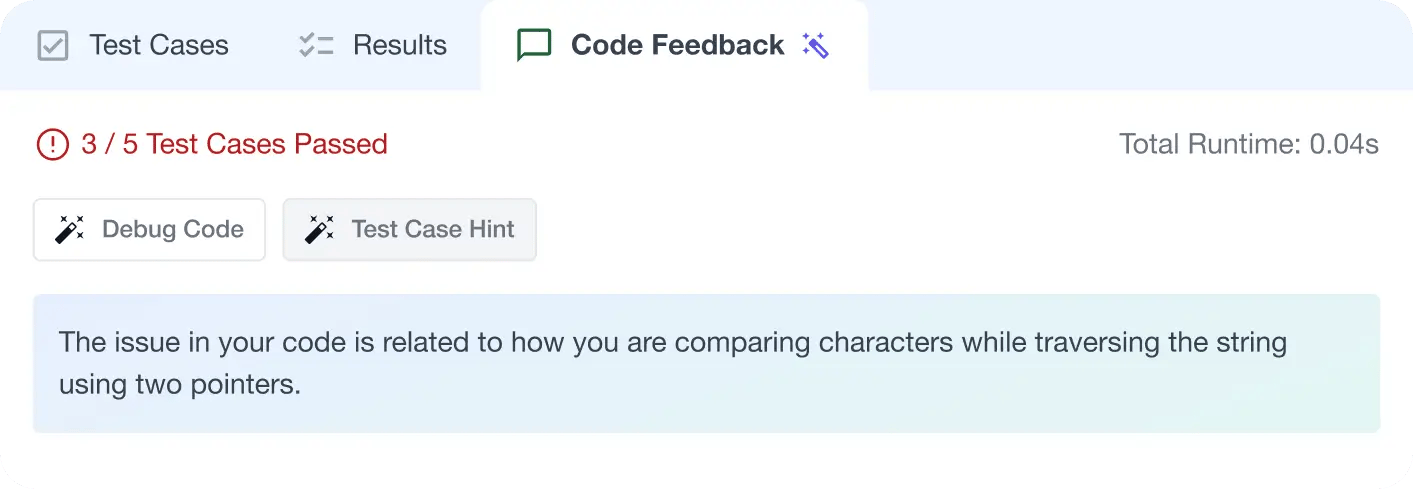

AI Code Mentor

Write better code with AI feedback, smart debugging, and "Ask AI"

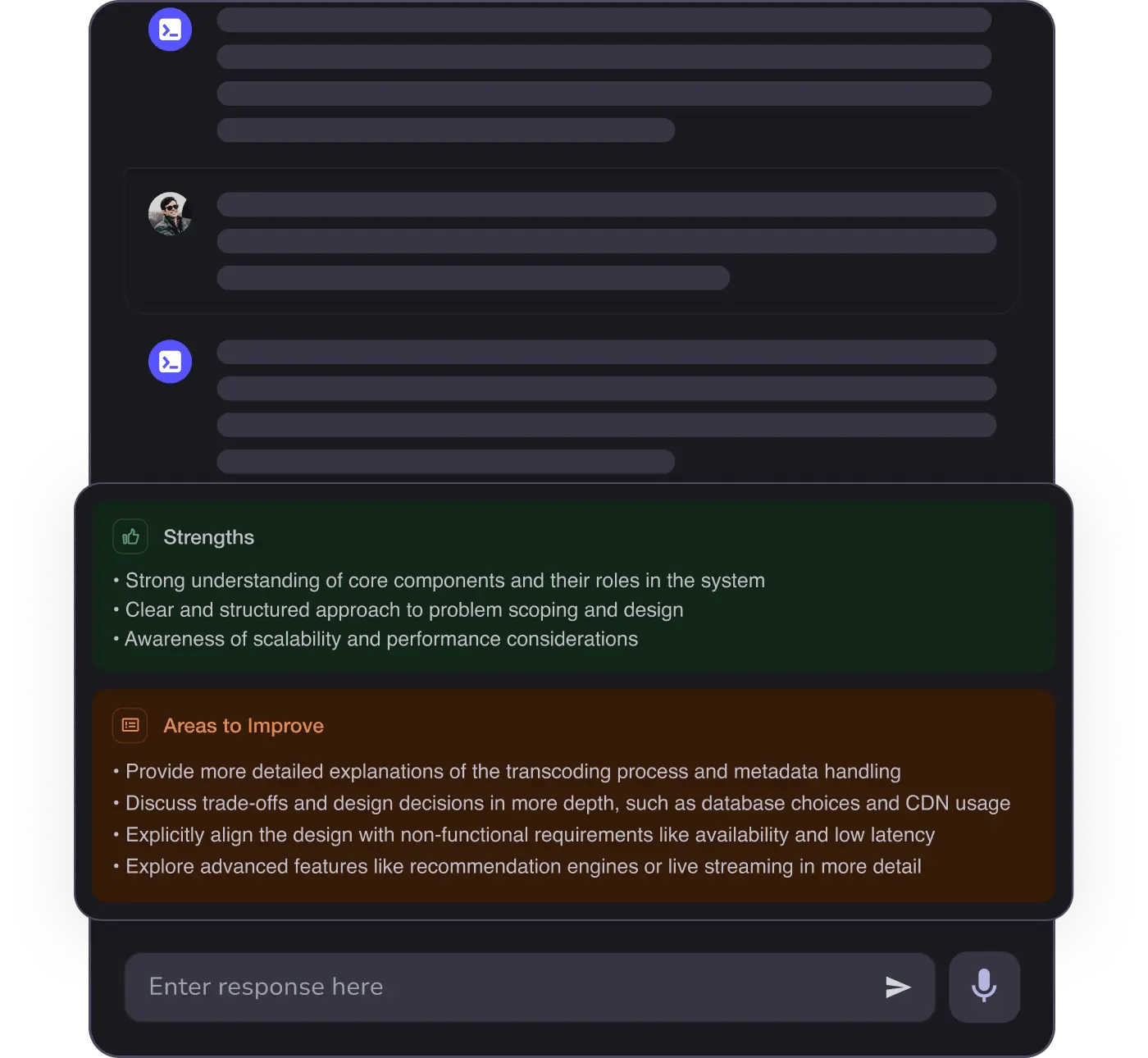

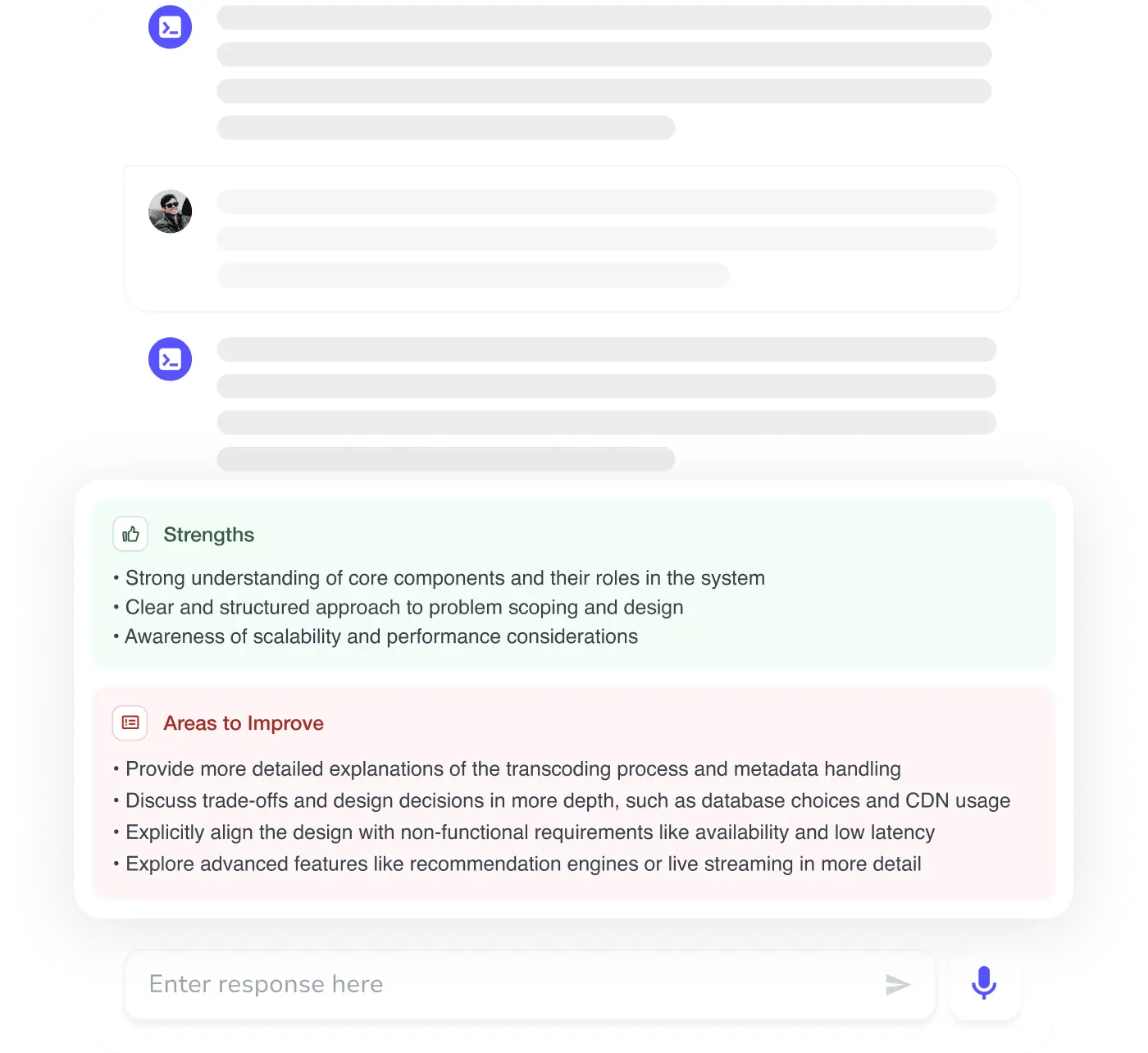

MAANG+ Interview Prep

AI Mock Interviews simulate every technical loop at top companies

Free Resources