Yes. In 2026, System Design is a core skill for software engineers, especially for mid-level and senior roles. Companies increasingly expect candidates to understand scalability, reliability, and real-world architecture decisions. Learning System Design not only helps you pass interviews but also prepares you for building production-grade systems.

Grokking Modern System Design Interview

Everything you need for Grokking the System Design Interview, developed by FAANG engineers. Master distributed system fundamentals and practice real-world interview questions.

- A 45-minute answer structure with RESHADED for any System Design Interview

- An understanding of how to frame open-ended interview problems as specific requirements, constraints, and success criteria

- The ability to design scalable, reliable systems with databases, caches, load balancers, queues, and microservices

- Pattern toolkit: sharding, replication, consistency models, CQRS, and event-driven design

- Capacity and reliability skills: throughput and latency math, bottlenecks, SLIs and SLOs, failure handling

- Communication under pressure: fast diagramming, clear trade-off narratives, effective checkpoints

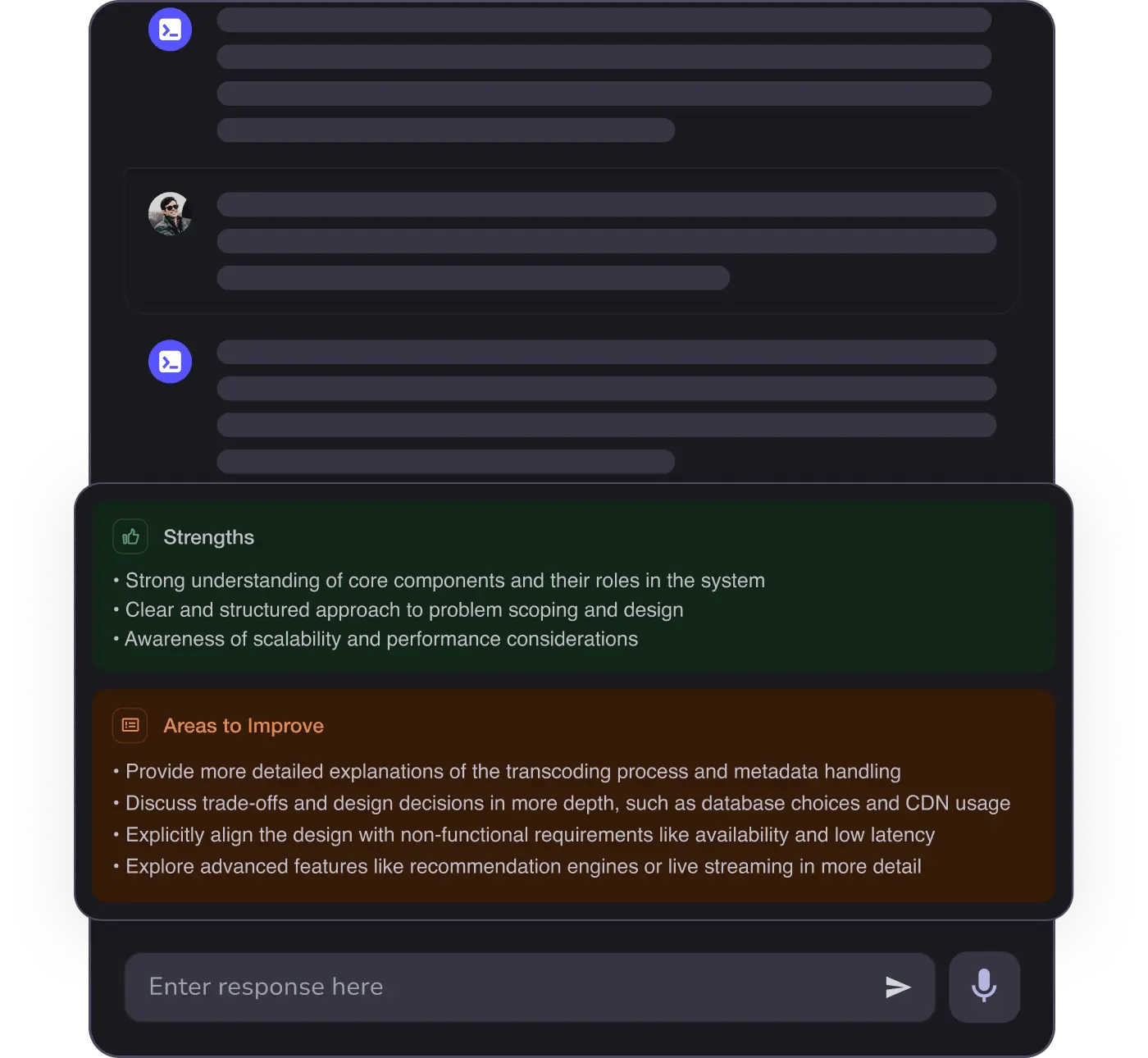

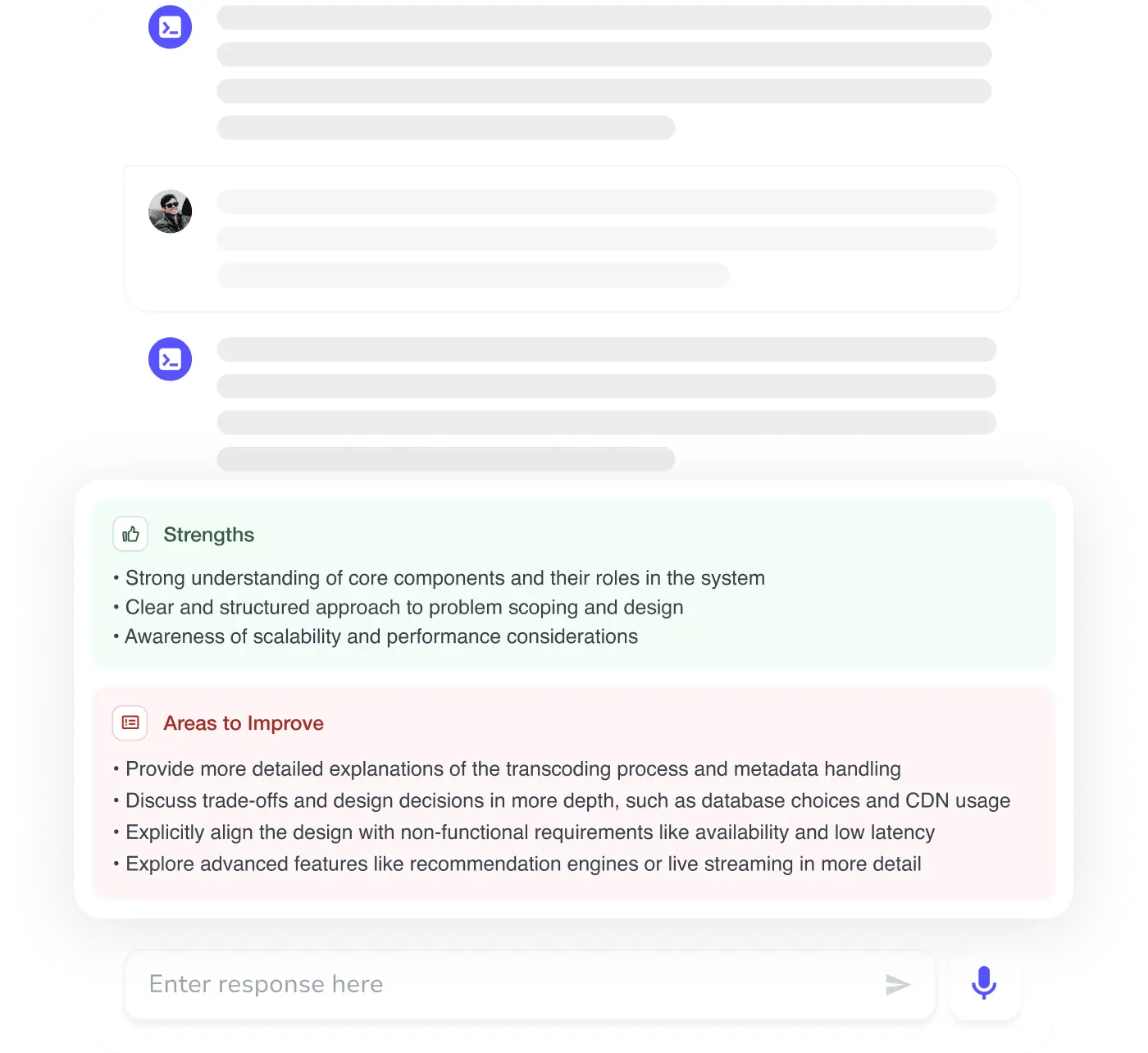

- Mock Interview practice with timed scenarios, model answers, and rubrics to build confidence and spee

Navigate complex system design interviews with confidence, using structured frameworks to communicate your design choices effectively.

Design and implement scalable distributed systems that meet real-world demands, ensuring reliability and performance under load.

Assess and justify design trade-offs in interviews, demonstrating a deep understanding of scalability, reliability, and fault tolerance.

Facilitate technical discussions around system architecture, clearly articulating design decisions and their implications for stakeholders.

System Design skills are non-negotiable

From building blocks to System Design Interview mastery

13+ real-world case studies; one battle-tested formula

Benchmark your skills with AI Mock Interviews

Curriculum developed by MAANG engineers

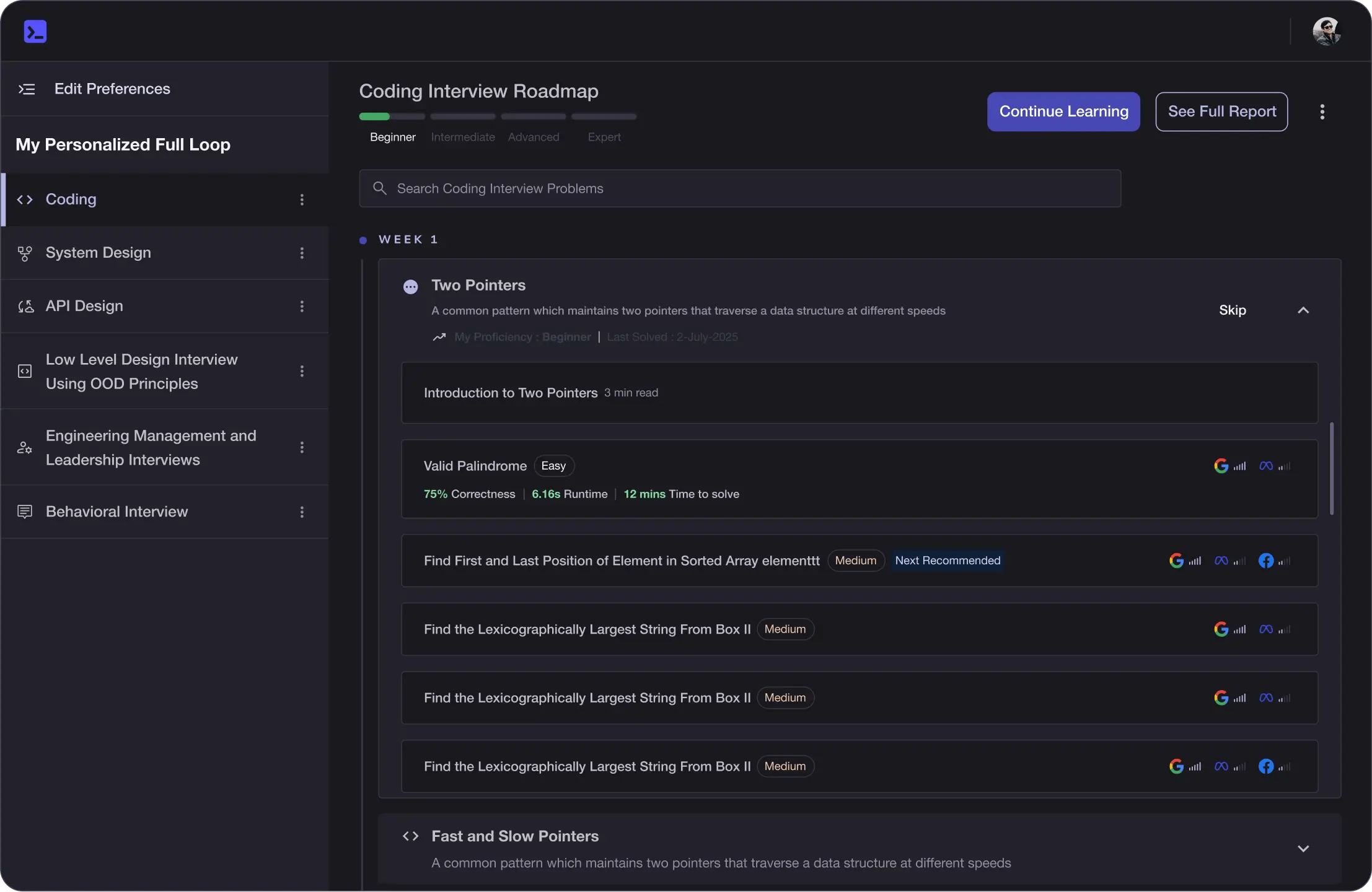

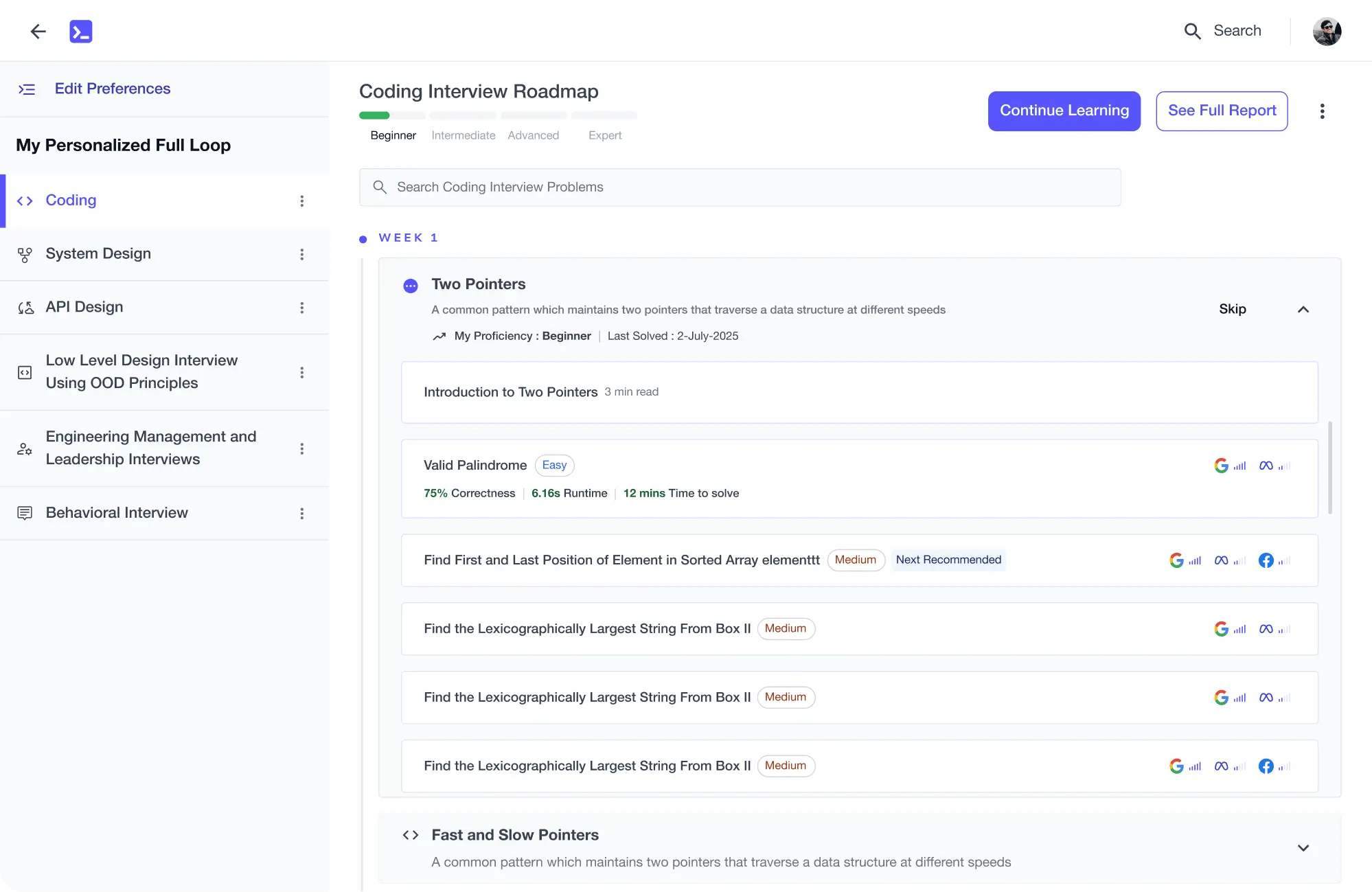

Learning Roadmap

1. Introduction
2. System Design Interviews
3. Preliminary System Design Concepts: 4 lessons
4. Non-Functional System Characteristics: 7 lessons
5. Back-of-the-Envelope Calculations: 2 lessons
7. Domain Name System: 2 lessons
8. Load Balancers: 3 lessons
9. Databases: 5 lessons
10. Key-Value Store: 5 lessons
11. Content Delivery Network (CDN): 7 lessons
12. Sequencer: 3 lessons
13. Distributed Monitoring: 3 lessons
14. Monitor Server-Side Errors: 3 lessons
15. Monitor Client-Side Errors: 2 lessons
16. Distributed Cache: 6 lessons
17. Distributed Messaging Queue: 7 lessons
18. Pub-Sub: 3 lessons
19. Rate Limiter: 5 lessons
20. Blob Store: 6 lessons
21. Distributed Search: 6 lessons
22. Distributed Logging: 3 lessons
23. Distributed Task Scheduler: 5 lessons
24. Sharded Counters: 4 lessons
25. Concluding the Building Blocks Discussion: 4 lessons
26. Design YouTube: 6 lessons
27. Design Quora: 5 lessons
28. Design Google Maps: 6 lessons
29. Design a Proximity Service/Yelp: 5 lessons
30. Design Uber: 7 lessons
31. Design Twitter: 6 lessons
33. Design Instagram: 5 lessons
36. Design WhatsApp: 6 lessons
37. Design Typeahead Suggestion: 7 lessons
38. Design a Collaborative Document Editing Service/Google Docs: 5 lessons
39. Design a Deployment System: 2 lessons
40. Design a Payment System: 2 lessons
41. Design a ChatGPT System: 2 lessons
42. Design a Data Infrastructure System: 3 lessons
43. LLM-Powered Customer Support Bot System Design: 2 lessons
44. AI-Powered Code Assistant System Design: 2 lessons
45. Lessons from System Failures: 4 lessons
46. Concluding Remarks: 2 lessons
47. Free System Design Lessons: 14 lessons
48. System Design Case Studies: 5 lessons

Fahim ul Haq

Software Engineer, Distributed Storage at Meta and Microsoft, Educative (Co-founder & CEO)

Trusted by 3 million developers working at companies

A clear path through System Design

Yichen Wang, Software Engineer @ Microsoft

The course I actually apply at work

Nishal Pattan, Software Engineer @ Microsoft

Built for engineers and managers

Bruno Sampaio Pinho da Silva, Ubisoft

Edward Teixeira Dias Júnior, Volvo Group

Zoddah Wise, Buildria

Mike Rabatin, Learner

Kshitij Tiwari, Arachnomesh Technologies

Abhishek R, Learner

Built for 10x Developers
