Service Abstractions in Microservices: Patterns and Anti-Patterns

This post explains why abstractions are essential in microservices architectures, but also why they often become sources of hidden complexity, coupling, and operational confusion at scale.

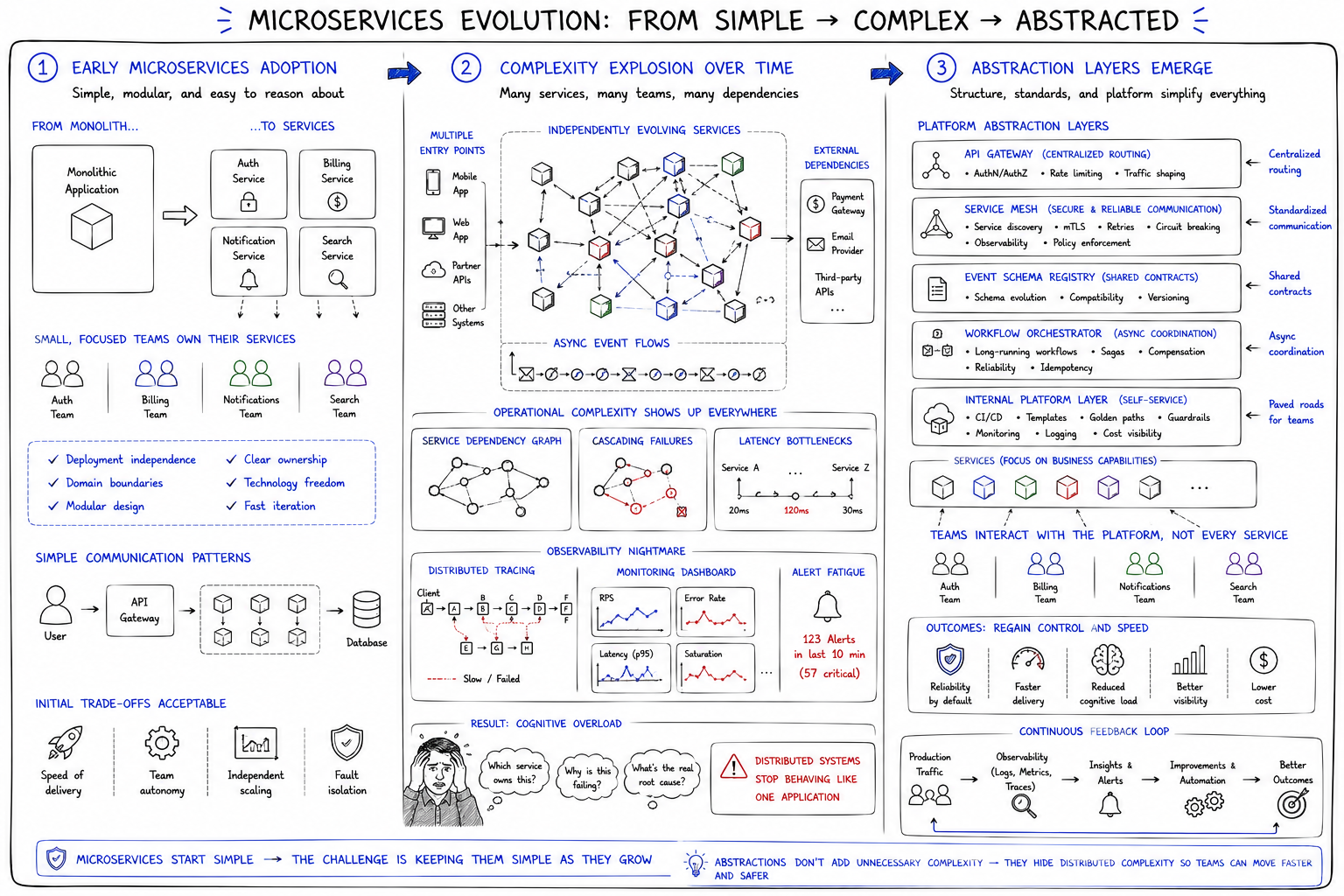

Microservices architectures become complicated long before they become large. Teams usually discover this gradually rather than through a single architectural failure. A service is introduced to isolate a domain boundary or improve deployment independence. Then another service appears to handle authentication, billing, notifications, or search. Soon the system no longer behaves like a single application. It behaves like a distributed environment composed of independently evolving services, teams, APIs, infrastructure layers, and operational dependencies. What initially looked modular starts producing coordination complexity almost immediately.

At that point, abstraction layers stop feeling optional. Teams introduce API gateways to centralize routing. Shared contracts define service expectations. Event schemas coordinate asynchronous workflows. Internal platforms standardize communication patterns. Service meshes abstract networking behavior. Orchestration layers coordinate workflows across domains. None of these abstractions emerge because engineers enjoy adding complexity. They emerge because distributed systems become cognitively unmanageable without mechanisms that reduce how much each team must understand about the entire system simultaneously.

Every abstraction in distributed systems solves one complexity problem while quietly introducing another.

This tension sits at the center of modern microservices architecture. Abstractions help teams reason about large systems, but they also hide operational details that still exist underneath. They simplify local understanding while sometimes increasing systemic opacity. A well-designed abstraction reduces cognitive load without concealing critical behavior. A dangerous abstraction creates the illusion of simplicity while allowing coupling, failure propagation, and operational confusion to accumulate invisibly over time. The difference between healthy and unhealthy microservices architectures often has less to do with how many services exist and more to do with how abstraction boundaries evolve under operational pressure.

What service abstractions actually are

Service abstractions are mechanisms that allow teams to interact with distributed systems through stable conceptual boundaries rather than direct implementation knowledge. At a technical level, abstractions appear in many forms: APIs, gateways, messaging contracts, orchestration layers, event schemas, service discovery systems, internal platforms, and communication interfaces. But conceptually, they all serve a similar purpose. They reduce how much one part of the system needs to know about another part in order to function.

In monolithic systems, abstraction boundaries often exist primarily inside application code through modules, interfaces, or layered architectures. In microservices environments, abstractions become operational boundaries as much as programming constructs. Service contracts define not only how data moves between systems, but also how teams coordinate ownership, deployments, and operational responsibilities. A service abstraction is therefore both a technical mechanism and an organizational agreement about where responsibility begins and ends.

This becomes particularly important as systems scale organizationally. Independent teams cannot operate efficiently if every infrastructure or application change requires cross-system coordination. Stable abstractions allow teams to evolve implementations internally without continuously breaking downstream consumers.

A billing service can change storage engines.

A recommendation system can alter internal ranking logic.

A notification pipeline can evolve infrastructure independently.
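
Here is a minimal Python sketch of the billing example. It assumes a hypothetical `InvoiceStore` contract: the consumer code depends only on that interface, so swapping the in-memory implementation for an append-only log leaves `billing_summary` untouched. The class and method names are illustrative and do not come from any real service.

```python
from typing import Dict, List, Protocol, Tuple


class InvoiceStore(Protocol):
    """The stable boundary consumers depend on (hypothetical contract)."""

    def save(self, invoice_id: str, amount_cents: int) -> None: ...
    def total(self) -> int: ...


class InMemoryInvoiceStore:
    """Original implementation: a plain dict keyed by invoice id."""

    def __init__(self) -> None:
        self._rows: Dict[str, int] = {}

    def save(self, invoice_id: str, amount_cents: int) -> None:
        self._rows[invoice_id] = amount_cents

    def total(self) -> int:
        return sum(self._rows.values())


class AppendLogInvoiceStore:
    """Replacement implementation: an append-only log where the last write wins."""

    def __init__(self) -> None:
        self._log: List[Tuple[str, int]] = []

    def save(self, invoice_id: str, amount_cents: int) -> None:
        self._log.append((invoice_id, amount_cents))

    def total(self) -> int:
        latest: Dict[str, int] = {}
        for invoice_id, amount in self._log:
            latest[invoice_id] = amount
        return sum(latest.values())


def billing_summary(store: InvoiceStore) -> int:
    """Consumer code, written only against the InvoiceStore contract."""
    store.save("inv-1", 1200)
    store.save("inv-2", 800)
    store.save("inv-1", 1500)  # correction to an existing invoice
    return store.total()
```

Both implementations return the same total. Protecting that property is the reason the abstraction exists.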

As long as the abstraction layer remains stable, organizational coordination costs remain manageable. The danger is that abstractions can create the illusion that complexity has disappeared rather than merely moved.

A gateway may simplify client interactions while hiding increasingly complicated downstream dependency chains.

An orchestration layer may centralize workflows while obscuring ownership boundaries.

A service mesh may abstract networking complexity while making debugging significantly harder.

The abstraction always simplifies something locally while introducing complexity somewhere else operationally.

Why abstractions become necessary at scale

Microservices architectures eventually force organizations to confront the reality that scaling software systems is often more about scaling coordination than scaling computation. As the number of services and teams increases, the system accumulates communication overhead rapidly. Without abstraction boundaries, every service change risks triggering cascading coordination work across multiple teams, deployment pipelines, and operational domains.

This is why abstractions become organizational tools as much as technical tools. Stable service contracts allow teams to operate semi-independently. Communication interfaces reduce the need for deep cross-team implementation knowledge. Infrastructure abstractions standardize operational behavior across services. Abstractions create manageable surfaces where ownership and responsibility can remain relatively bounded even as the overall system grows significantly more complicated.

Three pressures drive this: teams need stable interfaces, services evolve independently, and infrastructure changes constantly.

The organizational side of this matters more than many architecture discussions acknowledge. Distributed systems introduce not only technical fragmentation, but also human fragmentation. Teams develop different priorities, deployment schedules, operational practices, and domain expertise. Without carefully managed abstractions, every change becomes a negotiation problem. Architectural boundaries therefore exist partly to control the communication cost of organizational scale itself.

Interestingly, this is also why many microservices architectures grow more abstract over time rather than less. The larger the organization becomes, the stronger the pressure toward standardization, shared tooling, and coordination layers. Internal developer platforms emerge because manually managing service interactions no longer scales operationally. But every additional abstraction layer also increases the distance between engineers and the operational realities underneath the system.

The difference between good abstractions and dangerous abstractions

Good abstractions reduce cognitive complexity without hiding operationally important behavior. They expose stable interfaces while preserving visibility into latency, failures, dependencies, and ownership boundaries. Strong abstractions simplify reasoning about the system without creating false assumptions about reliability or isolation.

Dangerous abstractions behave differently. They hide critical operational details in ways that make failures harder to reason about. They encourage teams to treat distributed systems as though they behave like local systems. They conceal latency costs, retry behavior, coupling patterns, or dependency chains until those hidden complexities emerge during outages or scaling events. In distributed systems, abstraction mistakes rarely remain isolated. They compound over time because every hidden dependency eventually interacts with another hidden dependency somewhere else in the architecture.

One common failure pattern is abstraction leakage. Teams create interfaces intended to isolate complexity, but operational behavior eventually escapes the abstraction boundary anyway. A supposedly independent service suddenly requires consumers to understand retry semantics, consistency delays, or asynchronous ordering guarantees. The abstraction remains technically intact while operationally failing to simplify anything meaningful.
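
Retry semantics are a classic leak. In the sketch below, `charge_with_retry` looks like a harmless convenience, but whether its retries are safe depends on whether the downstream service deduplicates requests. The `PaymentService` class, its `lose_first_response` flag, and the idempotency-key scheme are hypothetical. They exist only to make the leak visible.

```python
from typing import Dict, List, Optional


class PaymentService:
    """Hypothetical payment service that deduplicates requests by idempotency key."""

    def __init__(self, lose_first_response: bool = False) -> None:
        self.charges: List[int] = []       # every charge actually applied
        self._by_key: Dict[str, int] = {}  # idempotency key -> charge id
        self._lose_first_response = lose_first_response

    def charge(self, amount: int, idempotency_key: Optional[str] = None) -> int:
        if idempotency_key is not None and idempotency_key in self._by_key:
            return self._by_key[idempotency_key]  # replayed request: no new charge
        self.charges.append(amount)
        charge_id = len(self.charges)
        if idempotency_key is not None:
            self._by_key[idempotency_key] = charge_id
        if self._lose_first_response:
            # The charge was applied, but the caller never sees the response.
            self._lose_first_response = False
            raise TimeoutError("response lost in transit")
        return charge_id


def charge_with_retry(service: PaymentService, amount: int,
                      idempotency_key: Optional[str] = None, attempts: int = 3) -> int:
    """A client 'abstraction' that hides retries from its caller."""
    for attempt in range(attempts):
        try:
            return service.charge(amount, idempotency_key)
        except TimeoutError:
            if attempt == attempts - 1:
                raise
    raise ValueError("attempts must be at least 1")
```

Without a key, one logical payment produces two charges. With a key, it produces one. The caller cannot use the "simple" retrying client safely without knowing that detail, and that dependency is exactly the leakage described above.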

Distributed systems punish weak abstractions aggressively because networks introduce realities that abstractions cannot eliminate entirely. Latency still exists. Partial failures still occur. Services still evolve independently. Data across asynchronous boundaries is still only eventually consistent. A useful abstraction acknowledges these realities while making them manageable. A dangerous abstraction pretends they are no longer relevant.
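
One way to acknowledge these realities instead of hiding them is to make timeouts and partial failures part of a remote call's return type. This sketch rests on a few assumptions: `call_remote`, `Ok`, `TimedOut`, and `Failed` are hypothetical names, and a thread pool stands in for a real network client.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from dataclasses import dataclass
from typing import Callable, Union


@dataclass
class Ok:
    value: object


@dataclass
class TimedOut:
    after_seconds: float


@dataclass
class Failed:
    error: str


Outcome = Union[Ok, TimedOut, Failed]

# Stand-in for a network client: calls run on worker threads.
_pool = ThreadPoolExecutor(max_workers=4)


def call_remote(fn: Callable[[], object], deadline_s: float) -> Outcome:
    """Run a call with an explicit deadline and return a typed outcome.

    Timeouts and failures are part of the return type, so callers
    cannot treat the remote call as if it were a local function.
    """
    future = _pool.submit(fn)
    try:
        return Ok(future.result(timeout=deadline_s))
    except FutureTimeout:
        # We stop waiting, but the work may still finish in the background.
        return TimedOut(deadline_s)
    except Exception as exc:  # the call itself raised
        return Failed(repr(exc))
```

Callers must handle each case explicitly, so latency and failure stay visible at the boundary. A timed-out call may still complete in the background, and the caller has to account for that possibility too.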

Useful abstraction patterns in microservices

Some abstraction patterns consistently prove valuable because they align technical boundaries with operational realities. API gateways, for example, help centralize authentication, routing, and external client coordination. They reduce duplication across services while providing controlled ingress into distributed environments. But healthy gateways remain relatively thin. Once gateways begin accumulating business logic extensively, they risk becoming centralized bottlenecks that recreate monolithic coordination patterns.
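
A thin gateway fits in a few lines. It authenticates, routes by path prefix, and forwards the request without interpreting it. The request shape (a dict with `path` and `token`) and the token check are simplifying assumptions. A production gateway would validate real credentials and proxy requests over the network.

```python
from typing import Callable, Dict, Set, Tuple

# A handler takes a request dict and returns (status code, body).
Handler = Callable[[dict], Tuple[int, str]]


def make_gateway(routes: Dict[str, Handler], valid_tokens: Set[str]) -> Handler:
    """Build a thin gateway: authenticate, route by path prefix, forward.

    It deliberately contains no business logic; responses pass straight through.
    """
    # Longest prefix first, so a more specific route wins over a general one.
    ordered = sorted(routes.items(), key=lambda item: len(item[0]), reverse=True)

    def gateway(request: dict) -> Tuple[int, str]:
        if request.get("token") not in valid_tokens:
            return 401, "unauthorized"
        path = request.get("path", "")
        for prefix, handler in ordered:
            if path == prefix or path.startswith(prefix + "/"):
                return handler(request)
        return 404, "no route"

    return gateway
```

Keeping the gateway to this shape, with no pricing rules or order validation inside it, is what stops it from turning into the centralized bottleneck described above.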

Backend-for-frontend layers often work well because they align abstractions around client-specific needs rather than internal service topology. Mobile applications, web clients, and partner integrations rarely require identical data aggregation behavior. A backend-for-frontend abstraction isolates client concerns without forcing every downstream service to optimize for every possible consumer simultaneously.
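
The sketch below shows two backend-for-frontend layers composing the same downstream services in different ways. The three service stubs and their fixed data are hypothetical. Each BFF shapes the response for its own client, and the downstream services stay client-agnostic.

```python
from typing import Dict, List, Optional


# Hypothetical downstream services, stubbed with fixed data.
def catalog_service(product_id: str) -> Dict:
    return {"id": product_id, "name": "Desk Lamp",
            "description": "LED, dimmable", "price_cents": 3499}


def reviews_service(product_id: str) -> List[Dict]:
    return [{"stars": 5, "text": "Bright"}, {"stars": 3, "text": "Wobbly base"}]


def inventory_service(product_id: str) -> int:
    return 12


def mobile_bff(product_id: str) -> Dict:
    """Compact payload for a mobile screen: summary fields only."""
    product = catalog_service(product_id)
    reviews = reviews_service(product_id)
    avg: Optional[float] = (
        round(sum(r["stars"] for r in reviews) / len(reviews), 1) if reviews else None
    )
    return {
        "name": product["name"],
        "price_cents": product["price_cents"],
        "avg_stars": avg,
        "in_stock": inventory_service(product_id) > 0,
    }


def web_bff(product_id: str) -> Dict:
    """Richer payload for a web page: full description and review text."""
    return {
        **catalog_service(product_id),
        "reviews": reviews_service(product_id),
        "stock_count": inventory_service(product_id),
    }
```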

Domain-oriented service boundaries remain one of the more resilient abstraction strategies because they align technical decomposition with ownership clarity. Services organized around coherent business capabilities generally evolve more predictably than services organized around generic infrastructure categories. Event-driven communication patterns can also improve decoupling significantly, especially when systems require asynchronous coordination without tightly coupled request chains.

Contract-based interfaces are particularly important operationally because they create explicit agreements around compatibility expectations. Strong contracts reduce accidental coupling while enabling independent service evolution. However, contracts work well only when organizations treat them as carefully governed interfaces rather than informal conventions subject to constant reinterpretation.
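
Contracts are easier to govern when compatibility is checked mechanically rather than argued about. The sketch below compares two versions of a response schema under one simplified rule set, which is an assumption and not a standard. Removing a field, changing its type, or making a required field optional breaks consumers. Adding fields does not, provided consumers ignore fields they do not recognize.

```python
from typing import Dict, List, Tuple

# field name -> (type name, required?)
Schema = Dict[str, Tuple[str, bool]]


def breaking_changes(old: Schema, new: Schema) -> List[str]:
    """List changes in a response contract that could break existing consumers.

    Simplified rules (an assumption, not a standard):
      - removing a field is breaking
      - changing a field's type is breaking
      - turning a required field into an optional one is breaking
      - adding new fields is allowed (consumers ignore unknown fields)
    """
    problems: List[str] = []
    for name, (old_type, old_required) in old.items():
        if name not in new:
            problems.append(f"removed field '{name}'")
            continue
        new_type, new_required = new[name]
        if new_type != old_type:
            problems.append(f"field '{name}' changed type {old_type} -> {new_type}")
        if old_required and not new_required:
            problems.append(f"field '{name}' is no longer guaranteed")
    return problems
```

A check like this can run in a build pipeline, so a breaking change is caught before it reaches consumers.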

Orchestration vs choreography as abstraction strategies

Orchestration and choreography represent two fundamentally different approaches to abstraction in distributed systems. Orchestration centralizes coordination logic inside dedicated controllers or workflow engines. Choreography distributes coordination implicitly across events and service reactions. Both models attempt to reduce complexity, but they distribute visibility and control differently.

Orchestrated systems usually provide stronger operational visibility because workflows remain centralized conceptually. Engineers can trace requests through explicit execution paths more easily. Failure handling becomes easier to standardize. Workflow state remains observable. The trade-off is that orchestration layers often become operationally critical infrastructure components. Over time, orchestration systems may accumulate excessive business logic and evolve into centralized dependency hubs that constrain service autonomy.
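
Here is a minimal orchestrator sketch. Steps run in a fixed order, and if one fails, the steps that already completed are compensated in reverse. The compensation approach (often called a saga) and the `WorkflowOrchestrator` name are illustrative assumptions. The execution log shows why orchestration is easy to observe: the whole workflow state lives in one place.

```python
from typing import Callable, List, Tuple

# (step name, action, compensation)
Step = Tuple[str, Callable[[], None], Callable[[], None]]


class WorkflowOrchestrator:
    """Runs steps in order; on failure, compensates completed steps in reverse.

    The log is the centrally observable workflow state.
    """

    def __init__(self, steps: List[Step]) -> None:
        self.steps = steps
        self.log: List[str] = []

    def run(self) -> bool:
        completed: List[Step] = []
        for step in self.steps:
            name, action, _ = step
            try:
                action()
            except Exception as exc:
                self.log.append(f"{name}: failed ({exc})")
                for done_name, _, compensate in reversed(completed):
                    compensate()
                    self.log.append(f"{done_name}: compensated")
                return False
            completed.append(step)
            self.log.append(f"{name}: done")
        return True
```

Centralization is also the trade-off here: every change to the workflow has to go through this one component.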

Choreographed systems behave differently. Services react independently to events without centralized coordination. This often improves decoupling and local autonomy because services evolve more independently. But choreography introduces a different operational challenge: understanding overall system behavior becomes significantly harder. Workflows emerge collectively rather than existing explicitly inside orchestrators.
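
The choreographed equivalent has no controller. In this sketch, each service subscribes only to the events it cares about and publishes new ones. The order-to-shipment workflow exists nowhere in the code, and it can only be reconstructed afterwards from a shared correlation ID. `EventBus` is a hypothetical in-memory, synchronous stand-in for a real message broker.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Event = Dict[str, str]


class EventBus:
    """In-memory, synchronous stand-in for a message broker (hypothetical)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[Event], None]]] = defaultdict(list)
        self.history: List[Event] = []

    def subscribe(self, event_type: str, handler: Callable[[Event], None]) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event: Event) -> None:
        self.history.append(event)
        for handler in self._subscribers[event["type"]]:
            handler(event)


def wire_services(bus: EventBus) -> None:
    """Each service knows only which events it reacts to and which it emits."""

    def payments(event: Event) -> None:
        bus.publish({"type": "PaymentCaptured", "correlation_id": event["correlation_id"]})

    def shipping(event: Event) -> None:
        bus.publish({"type": "ShipmentBooked", "correlation_id": event["correlation_id"]})

    bus.subscribe("OrderPlaced", payments)
    bus.subscribe("PaymentCaptured", shipping)


def reconstruct_workflow(bus: EventBus, correlation_id: str) -> List[str]:
    """Recover the emergent workflow after the fact from the event history."""
    return [e["type"] for e in bus.history if e["correlation_id"] == correlation_id]
```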

Distributed systems become difficult not when services communicate, but when nobody fully understands how they communicate anymore.

The real challenge is not choosing one model universally. Mature architectures often blend orchestration and choreography strategically depending on visibility requirements, operational complexity, and ownership boundaries. The important question is whether the abstraction strategy preserves enough operational clarity for teams to reason about failures, dependencies, and workflow evolution responsibly.

Shared abstractions and hidden coupling

One of the more subtle dangers in microservices environments is that shared abstractions frequently reintroduce coupling indirectly. Shared libraries, centralized schemas, internal platforms, and cross-service utility frameworks often begin as attempts to reduce duplication. Initially, these abstractions feel efficient because they standardize behavior across teams.

Over time, however, shared abstractions frequently become coupling mechanisms disguised as simplification layers. A shared library update suddenly affects dozens of services simultaneously. A centralized schema evolution introduces deployment coordination across multiple teams. Internal platforms accumulate assumptions that shape service behavior globally. Teams stop evolving independently because shared abstractions silently synchronize operational constraints across the organization.

The problem is not that shared abstractions are inherently wrong. The problem is that they often create invisible dependency surfaces. Local changes stop remaining local even when the architecture still appears distributed structurally. Teams believe they are independently deployable while operationally depending on shared implementation assumptions underneath.
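
Invisible dependency surfaces become visible once you model them. This sketch computes the blast radius of a change, meaning every component that transitively depends on a shared library. The dependency graph is hypothetical. In practice, it would come from build manifests or a service catalog.

```python
from collections import deque
from typing import Dict, List, Set

# component -> components it depends on (services and shared libraries alike)
DependsOn = Dict[str, List[str]]


def blast_radius(graph: DependsOn, changed: str) -> Set[str]:
    """Return every component that transitively depends on `changed`."""
    # Invert the graph: dependency -> components that use it.
    dependents: Dict[str, Set[str]] = {}
    for component, deps in graph.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(component)

    # Breadth-first walk over the inverted edges.
    affected: Set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, set()):
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return affected


graph: DependsOn = {
    "orders": ["common-http", "billing-client"],
    "billing-client": ["common-http"],
    "search": ["common-http"],
    "reports": ["billing-client"],
    "notifications": [],
}
```

In this graph, changing `common-http` affects four components, even though none of the teams that own them changed anything themselves.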

This is one reason mature organizations increasingly optimize for bounded duplication rather than maximal reuse. Some duplication is operationally healthier than excessive shared abstraction because it preserves autonomy and failure isolation. Distributed systems often tolerate redundant implementation more gracefully than hidden coordination complexity.

Anti-patterns that emerge over time

Many microservices anti-patterns emerge gradually from decisions that initially appeared reasonable. Distributed monoliths rarely begin as intentionally bad architectures. They emerge when services become operationally dependent despite being technically separate. Synchronous dependency chains expand incrementally until requests require half the organization’s infrastructure stack to remain healthy simultaneously.
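
The arithmetic behind synchronous dependency chains is unforgiving. If a request needs every hop to succeed, its availability is the product of the hops' availabilities. Assume independent failures and a hypothetical 99.9% availability per service. Under those assumptions, a ten-hop chain lands at about 99.0% and a thirty-hop chain at about 97.0%.

```python
import math
from typing import Sequence


def chain_availability(per_hop: Sequence[float]) -> float:
    """Probability that a request succeeds when every synchronous hop must succeed.

    Assumes hop failures are independent, which is a simplification:
    shared infrastructure makes real failures correlated.
    """
    return math.prod(per_hop)


for hops in (1, 10, 30):
    print(hops, f"{chain_availability([0.999] * hops):.4f}")
# 1 0.9990, 10 0.9900, 30 0.9704
```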

Overly generic services create similar problems. Teams sometimes design “shared platform services” intended to support many domains universally. Over time these services accumulate conflicting requirements, excessive configuration complexity, and organizational bottlenecks. Platform layers that initially simplified development begin slowing down evolution because every change now requires centralized coordination.

The symptoms are familiar: every service seems to depend on every other service, APIs become organizational bottlenecks, and platform layers hide failure paths.

Abstraction leakage also compounds operationally over time. Services intended to behave independently increasingly require consumers to understand internal retry logic, ordering guarantees, or infrastructure semantics. Eventually the architecture stops behaving like isolated services and starts behaving like a fragmented monolith held together by network calls.

The dangerous part is that these failures rarely appear immediately. Most anti-patterns scale socially before they scale technically. Coordination overhead increases slowly enough that organizations normalize it operationally until outages, deployment friction, or debugging complexity expose how deeply coupled the architecture actually became.

Well-designed vs poorly designed service abstractions

| Dimension | Well-designed service abstractions | Poorly designed service abstractions |
|---|---|---|
| Team autonomy | Teams evolve independently with minimal coordination | Teams require constant synchronization |
| Coupling | Explicit and bounded | Hidden and pervasive |
| Operational visibility | Failures and dependencies remain observable | Failure paths become opaque |
| Scalability | Complexity scales gradually | Coordination costs scale aggressively |
| Failure isolation | Problems remain locally contained | Cascading failures spread system-wide |
| Cognitive complexity | Engineers reason about bounded domains | Engineers require global system knowledge |
| Evolution over time | Services evolve incrementally | Architecture becomes increasingly fragile |

The quality of abstractions determines long-term architectural health more than the number of services themselves. Well-designed abstractions preserve local reasoning and bounded ownership. Poor abstractions force engineers to understand increasingly large portions of the system just to make ordinary changes safely. Once cognitive complexity exceeds organizational capacity, architectural velocity slows dramatically regardless of infrastructure sophistication.

Observability and abstraction boundaries

Observability becomes significantly more difficult as abstraction layers accumulate. Every abstraction boundary potentially hides latency, retries, failure propagation, and dependency behavior from engineers operating elsewhere in the system. A request may appear simple at the API layer while traversing dozens of downstream services invisibly.

Tracing systems help address this partially by reconstructing distributed execution paths across service boundaries. Monitoring infrastructure surfaces latency patterns, error rates, and dependency health. Ownership metadata clarifies responsibility during failures. But observability tooling alone cannot fully solve abstraction opacity if the architecture itself encourages hidden coordination patterns.
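
The core idea of distributed tracing is that each unit of work records its parent, so the execution path can be rebuilt later. This in-process sketch uses Python's `contextvars` module to track the active span. The span IDs, the `SPANS` list, and the three simulated services are assumptions. Real systems carry the same parent relationship across the network in request headers, for example the W3C Trace Context `traceparent` header.

```python
import contextvars
import itertools
from contextlib import contextmanager
from typing import Iterator, List, Optional, Tuple

# The span active in the current execution context (None at the root).
_current_span = contextvars.ContextVar("current_span", default=None)
_ids = itertools.count(1)
# Finished spans as (span_id, parent_id, name); a real tracer would export them.
SPANS: List[Tuple[str, Optional[str], str]] = []


@contextmanager
def span(name: str) -> Iterator[str]:
    """Open a span whose parent is whichever span is active when it starts."""
    span_id = f"s{next(_ids)}"
    parent = _current_span.get()
    token = _current_span.set(span_id)
    try:
        yield span_id
    finally:
        _current_span.reset(token)
        SPANS.append((span_id, parent, name))


# Three hypothetical services calling each other in-process.
def inventory_service() -> None:
    with span("inventory.check"):
        pass


def orders_service() -> None:
    with span("orders.create"):
        inventory_service()


def gateway() -> None:
    with span("gateway POST /orders"):
        orders_service()
```

After one call to `gateway()`, the parent links in `SPANS` are enough to rebuild the path gateway → orders → inventory, even though no single service knows the whole chain.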

The operational challenge is that abstraction layers often optimize for local simplicity while reducing systemic visibility. Engineers interacting with high-level abstractions may no longer understand which services participate in requests, how retries propagate, or where bottlenecks actually emerge. This becomes especially dangerous during incidents because debugging distributed systems already involves incomplete information under time pressure.

Healthy abstractions preserve observability intentionally. They expose dependency relationships clearly, surface latency behavior transparently, and maintain traceability across boundaries. The goal is not eliminating complexity entirely, but ensuring complexity remains observable where operational decisions happen.

The organizational side of abstraction design

Microservices architectures often mirror organizational structures more directly than teams initially realize. Conway’s Law appears repeatedly because service boundaries frequently emerge from communication boundaries inside organizations. Teams design abstractions partly around technical domains, but also around ownership, autonomy, reporting structures, and coordination constraints.

Most service boundaries are organizational decisions disguised as technical architecture.

This is why service abstraction discussions cannot remain purely technical. A service boundary influences who deploys what, who responds to incidents, who owns operational risk, and how teams coordinate changes. Poor abstraction boundaries frequently produce organizational friction before technical failure becomes visible.

Mature organizations therefore treat abstraction design partly as communication design. Services should align with ownership clarity, operational responsibility, and domain understanding. Systems become healthier when teams can reason about their boundaries independently without requiring excessive cross-organizational synchronization.

Interestingly, many successful architectures become simpler over time operationally rather than more abstract. Teams eventually realize that excessive indirection increases organizational confusion even when it appears elegant structurally. Architectural maturity often involves reducing unnecessary abstraction layers to restore ownership clarity.

Why abstraction leakage is inevitable

Abstraction leakage is unavoidable in distributed systems because networks introduce realities that cannot be fully hidden operationally. Latency exists regardless of interface cleanliness. Retries influence behavior regardless of abstraction quality. Partial failures occur even when APIs appear stable. Consistency delays emerge despite asynchronous elegance.

Microservices architectures therefore operate under an important constraint: abstractions can reduce complexity exposure, but they cannot eliminate distributed systems behavior entirely. Eventually operational realities escape abstraction boundaries because the networks and machines underneath still fail partially and unpredictably.

This becomes particularly obvious during scaling events or outages. Services that appeared independent suddenly reveal hidden coordination dependencies. Retry storms amplify latency unexpectedly. Shared infrastructure assumptions emerge operationally. Teams discover that “loosely coupled” services still depend heavily on each other’s performance characteristics.
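
Retry storms follow from simple multiplication. If each layer in a call chain retries on its own, the attempts compound. Three layers that each make up to three attempts can send 27 calls to a struggling service for a single user request. The simulation below assumes the deepest dependency fails every call, and the layer counts are hypothetical.

```python
from typing import List


def call_chain(attempts_per_layer: List[int], counter: List[int]) -> bool:
    """Simulate a request through layered services whose deepest dependency always fails.

    attempts_per_layer[i] is how many times layer i tries its downstream call
    (1 means no retries). counter[0] accumulates calls that reach the failing service.
    """
    if not attempts_per_layer:
        counter[0] += 1  # the overloaded service receives a call
        return False     # and fails it
    for _ in range(attempts_per_layer[0]):
        if call_chain(attempts_per_layer[1:], counter):
            return True
    return False


def leaf_load(attempts_per_layer: List[int]) -> int:
    """Number of calls the deepest service receives for one user request."""
    counter = [0]
    call_chain(attempts_per_layer, counter)
    return counter[0]
```

The total equals the product of attempts per layer. Retry policies chosen independently by each team can therefore combine into far more load than any single team intended.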

The goal of abstraction design is therefore not perfect isolation. Perfect isolation does not exist in distributed systems. The goal is controlled complexity exposure. Healthy abstractions allow teams to reason locally most of the time while still preserving enough visibility into systemic behavior when operational realities inevitably surface.

Misconceptions about service abstractions

One persistent misconception is that more abstraction automatically improves architecture. In practice, excessive abstraction often increases cognitive complexity faster than it reduces implementation duplication. Every abstraction layer introduces operational behavior that someone must eventually understand during debugging, migrations, or incidents.

Another misconception is that microservices reduce complexity automatically. Microservices redistribute complexity. They trade implementation complexity inside monoliths for coordination complexity across distributed systems. Whether that trade becomes beneficial depends heavily on abstraction quality, organizational maturity, and operational discipline.

There is also a tendency to overestimate what infrastructure abstractions solve. Service meshes improve networking standardization but do not eliminate dependency management problems. Loose coupling does not mean no coupling. Event-driven systems still coordinate behavior operationally even when request chains disappear structurally.

Perhaps the most dangerous misconception is that abstractions remove operational concerns. Distributed systems remain distributed regardless of how elegant the interfaces appear. Networks still fail. Dependencies still evolve. Ownership still matters. Healthy abstractions acknowledge these realities instead of pretending they disappeared.

What mature microservices architectures optimize for

Mature architectures optimize less for theoretical modularity and more for operational clarity. Experienced teams prioritize observability, bounded ownership, resilience, failure isolation, and cognitive manageability over maximal decomposition or architectural sophistication.

This often leads organizations toward simpler abstraction models than expected. Teams reduce unnecessary orchestration layers. Shared infrastructure becomes more carefully bounded. Service boundaries align more closely with domain ownership. Platform abstractions evolve toward enabling local autonomy rather than enforcing universal standardization aggressively.

The most resilient systems usually emerge not from abstraction maximalism, but from disciplined simplicity. Teams learn that every abstraction carries operational cost. Every hidden dependency eventually surfaces during incidents. Every coordination layer influences organizational behavior.

Architectural maturity therefore often means becoming more selective about abstraction rather than more enthusiastic about it.

TL;DR: Abstractions as both architecture and responsibility

Service abstractions are unavoidable in microservices architectures because distributed systems become impossible to manage operationally without mechanisms that reduce coordination complexity. APIs, gateways, contracts, orchestration layers, and communication boundaries all help teams reason locally inside environments that would otherwise become cognitively overwhelming.

But abstractions are valuable only when they improve operational clarity rather than merely hiding complexity temporarily. Healthy abstractions preserve ownership, visibility, and bounded reasoning. Dangerous abstractions conceal coupling, obscure failures, and create organizational fragility underneath simplified interfaces.

The deeper lesson is that abstraction design is not only an architectural concern. It is also a responsibility management problem. Service boundaries determine who understands what, who owns failures, and how complexity spreads across organizations over time.

The best abstractions don’t remove complexity. They place complexity where teams can actually manage it responsibly.