Mastering Self-Supervised Algorithms for Learning without Labels

Gain insights into self-supervised learning. Delve into pseudo label generation, similarity maximization, redundancy reduction, and masked image modeling to apply and modify these algorithms on unlabelled datasets.

5.0

31 Lessons

7h

LEARNING OBJECTIVES

- An understanding of self-supervised learning and its advantage over unsupervised learning

- Working knowledge of designing your own self-supervised learning tasks and objectives

- Hands-on experience implementing and modifying existing self-supervised learning objectives to learn from unlabelled data

- Ability to transfer and evaluate your self-supervised network representations on a downstream task

- Familiarity with core components of self-supervised learning, including pretext tasks, similarity maximization, redundancy reduction, and masked image modeling

Learning Roadmap

1. Introduction to Self-Supervised Learning

Get familiar with self-supervised learning, leveraging unlabeled data for adaptable model training.

2. Pretext Tasks

Unpack the core of self-supervised learning through pretext tasks like rotation, positioning, and puzzles.

3. Similarity Maximization and Redundancy Reduction

12 Lessons

Examine techniques for similarity maximization and redundancy reduction through modern self-supervised learning algorithms.

4. Masked Image Modeling

9 Lessons

Grasp the fundamentals of masked image modeling techniques and their applications in self-supervised learning.

Certificate of Completion

Showcase your accomplishment by sharing your certificate of completion.

Developed by MAANG Engineers

ABOUT THIS COURSE

This course covers self-supervised algorithms, which are useful when you have a large pool of unlabeled data or when a high-quality labeled dataset is difficult to obtain. These algorithms derive supervisory signals from the structure of the unlabeled data itself and train a model to predict an unobserved or hidden property of the input.
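As one illustration of predicting a hidden property of the input, the sketch below builds a rotation-prediction dataset: each image is rotated by a random multiple of 90 degrees, and the rotation index becomes the pseudo label. This is a minimal sketch, not the course's own code. The names `rotate90` and `make_rotation_dataset` are hypothetical, and images are assumed to be plain nested lists so the example needs only the standard library.

```python
import random


def rotate90(image, k):
    """Rotate a 2D list-of-lists image counterclockwise by k * 90 degrees."""
    for _ in range(k % 4):
        # Transpose, then reverse the row order: one counterclockwise quarter turn.
        image = [list(row) for row in zip(*image)][::-1]
    return [list(row) for row in image]


def make_rotation_dataset(images, seed=0):
    """Return (rotated_image, pseudo_label) pairs; no human labels are needed."""
    rng = random.Random(seed)
    samples = []
    for image in images:
        k = rng.randrange(4)  # pseudo label in {0, 1, 2, 3}
        samples.append((rotate90(image, k), k))
    return samples


if __name__ == "__main__":
    toy_image = [[1, 2], [3, 4]]  # assumption: a tiny stand-in for a real image
    for rotated, label in make_rotation_dataset([toy_image] * 3):
        print(label, rotated)
```

A classifier trained to recover the rotation index from the rotated image has to pick up on object shape and orientation, which is why its features can transfer to a downstream task.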

You’ll start with the fundamentals of self-supervised learning and then implement your first class of algorithms: pretext tasks, where you generate pseudo labels from the data and train models on them with ordinary supervised learning. Next, you’ll learn about similarity maximization-based self-supervised algorithms. You’ll also look into redundancy reduction, which decorrelates the dimensions of the feature representation while maximizing the similarity between representations of similar images. Lastly, you’ll learn to implement masked image modeling.
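To make the redundancy reduction idea concrete, here is a minimal sketch of a loss in the style of Barlow Twins. It is an assumption that this formulation matches the course; the lessons may use a different one. The loss builds the cross-correlation matrix between the embeddings of two augmented views, pushes its diagonal toward 1 (similar images get similar features), and pushes its off-diagonal entries toward 0 (feature dimensions carry non-redundant information). The function names and the `off_diag_weight` default are hypothetical, and embeddings are plain nested lists.

```python
import math


def standardize(batch, eps=1e-8):
    """Give every feature dimension zero mean and unit variance over the batch."""
    n, d = len(batch), len(batch[0])
    means = [sum(row[j] for row in batch) / n for j in range(d)]
    stds = [
        math.sqrt(sum((row[j] - means[j]) ** 2 for row in batch) / n) + eps
        for j in range(d)
    ]
    return [[(row[j] - means[j]) / stds[j] for j in range(d)] for row in batch]


def cross_correlation(z_a, z_b):
    """d x d cross-correlation matrix between the embeddings of two views."""
    a, b = standardize(z_a), standardize(z_b)
    n, d = len(a), len(a[0])
    return [
        [sum(a[i][p] * b[i][q] for i in range(n)) / n for q in range(d)]
        for p in range(d)
    ]


def redundancy_reduction_loss(z_a, z_b, off_diag_weight=0.005):
    """Diagonal pulled toward 1 (similarity), off-diagonal toward 0 (redundancy)."""
    c = cross_correlation(z_a, z_b)
    d = len(c)
    on_diag = sum((1.0 - c[p][p]) ** 2 for p in range(d))
    off_diag = sum(c[p][q] ** 2 for p in range(d) for q in range(d) if p != q)
    return on_diag + off_diag_weight * off_diag


if __name__ == "__main__":
    # Assumption: toy 2-dimensional embeddings for a batch of four images.
    decorrelated = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]
    redundant = [[1.0, 1.0], [-1.0, -1.0], [1.0, 1.0], [-1.0, -1.0]]
    print(redundancy_reduction_loss(decorrelated, decorrelated))
    print(redundancy_reduction_loss(redundant, redundant))
```

In this toy run both views are identical, so the similarity term is near zero in both cases; the second batch scores higher only because its two feature dimensions duplicate each other.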

By the end of the course, you’ll be able to apply self-supervised models to unlabeled datasets and to implement and modify existing self-supervised algorithms.

ABOUT THE AUTHOR

Puneet Mangla

I'm a Data and Applied Scientist at Microsoft Advertising, working on ad quality checks. As a part-time technical writer, I love teaching machine learning concepts through blogs and courses.

Built for 10x Developers

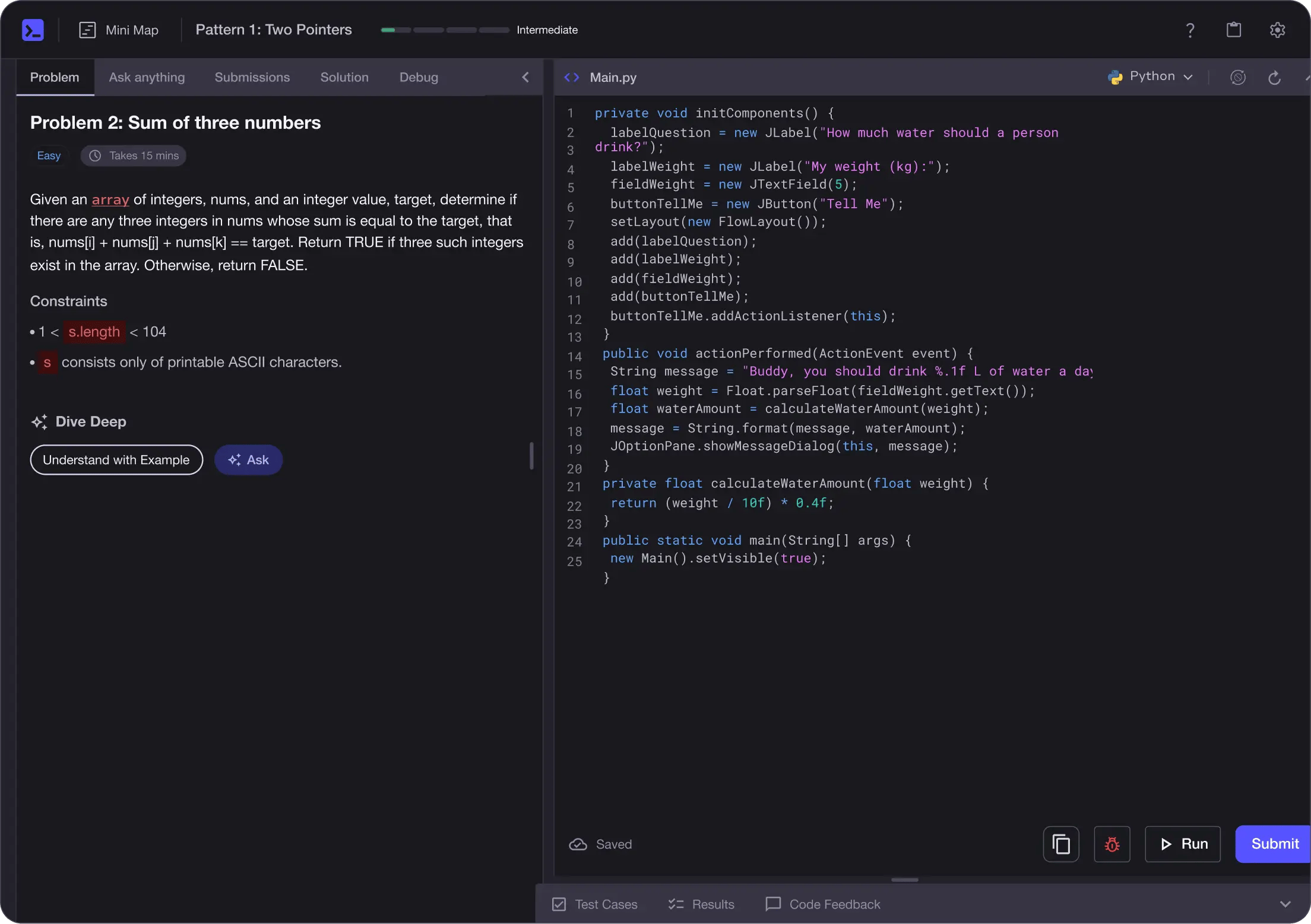

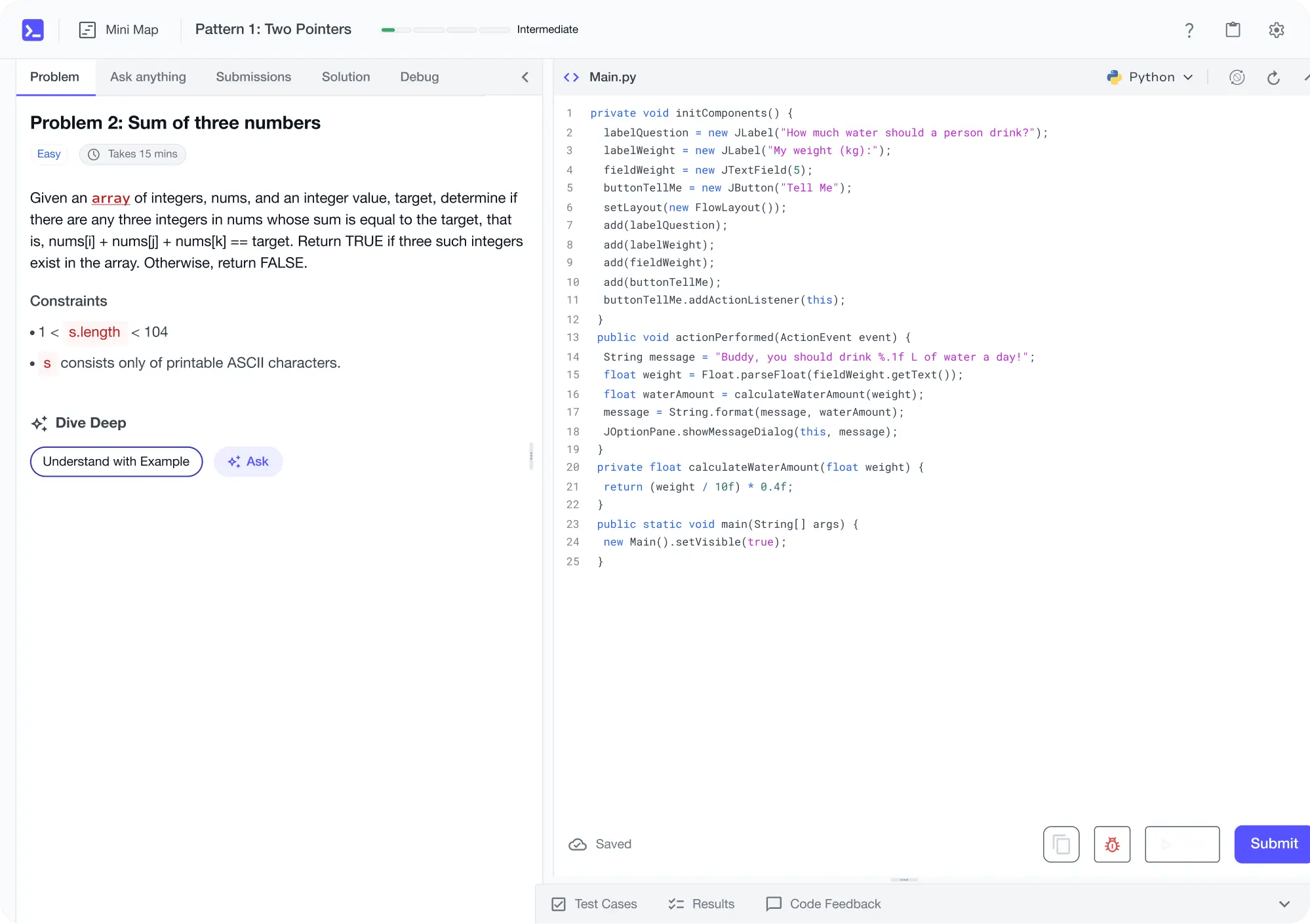

No Passive Learning

Learn by building with project-based lessons and in-browser code editor

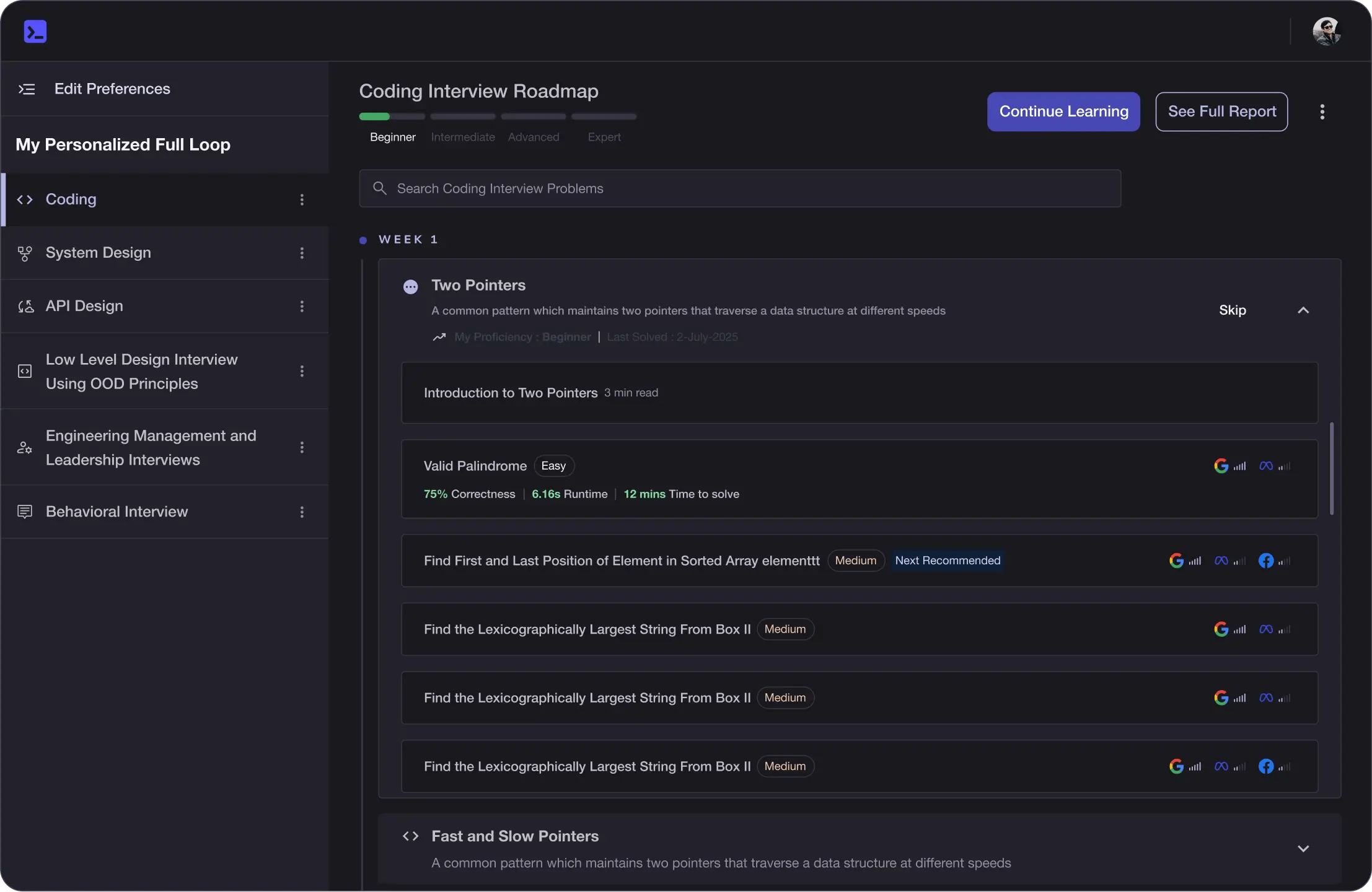

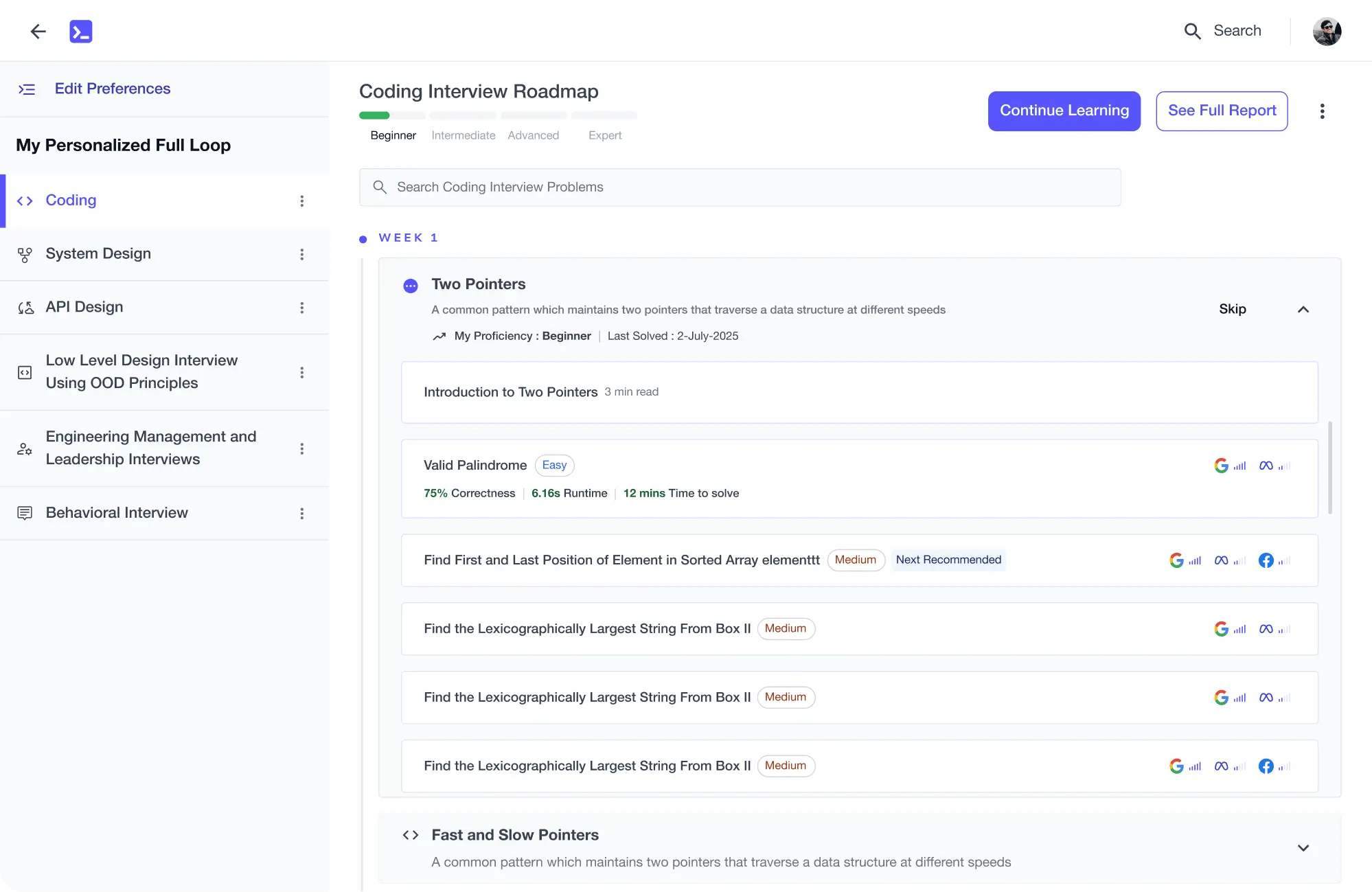

Personalized Roadmaps

The platform adapts to your strengths & skills gaps as you go

Future-proof Your Career

Get hands-on with in-demand skills

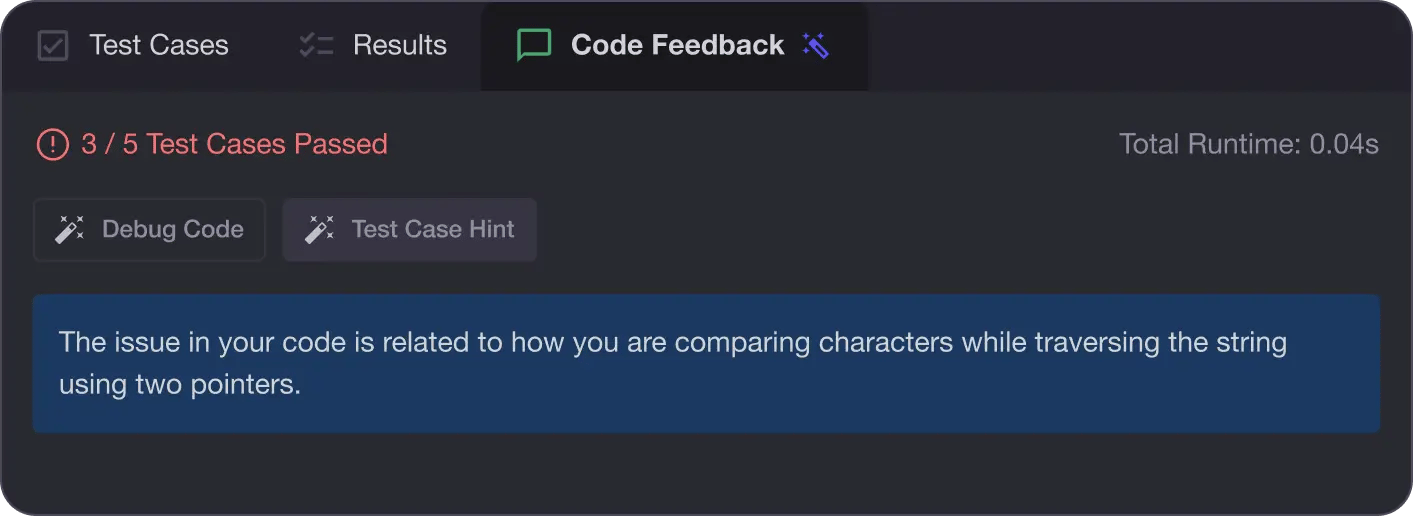

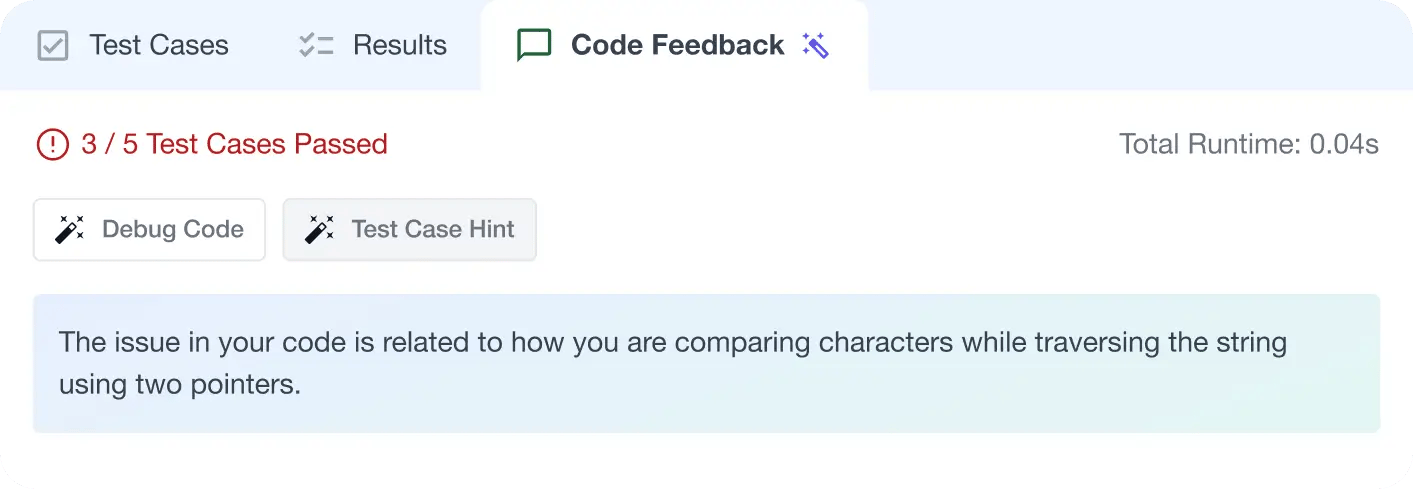

AI Code Mentor

Write better code with AI feedback, smart debugging, and "Ask AI"

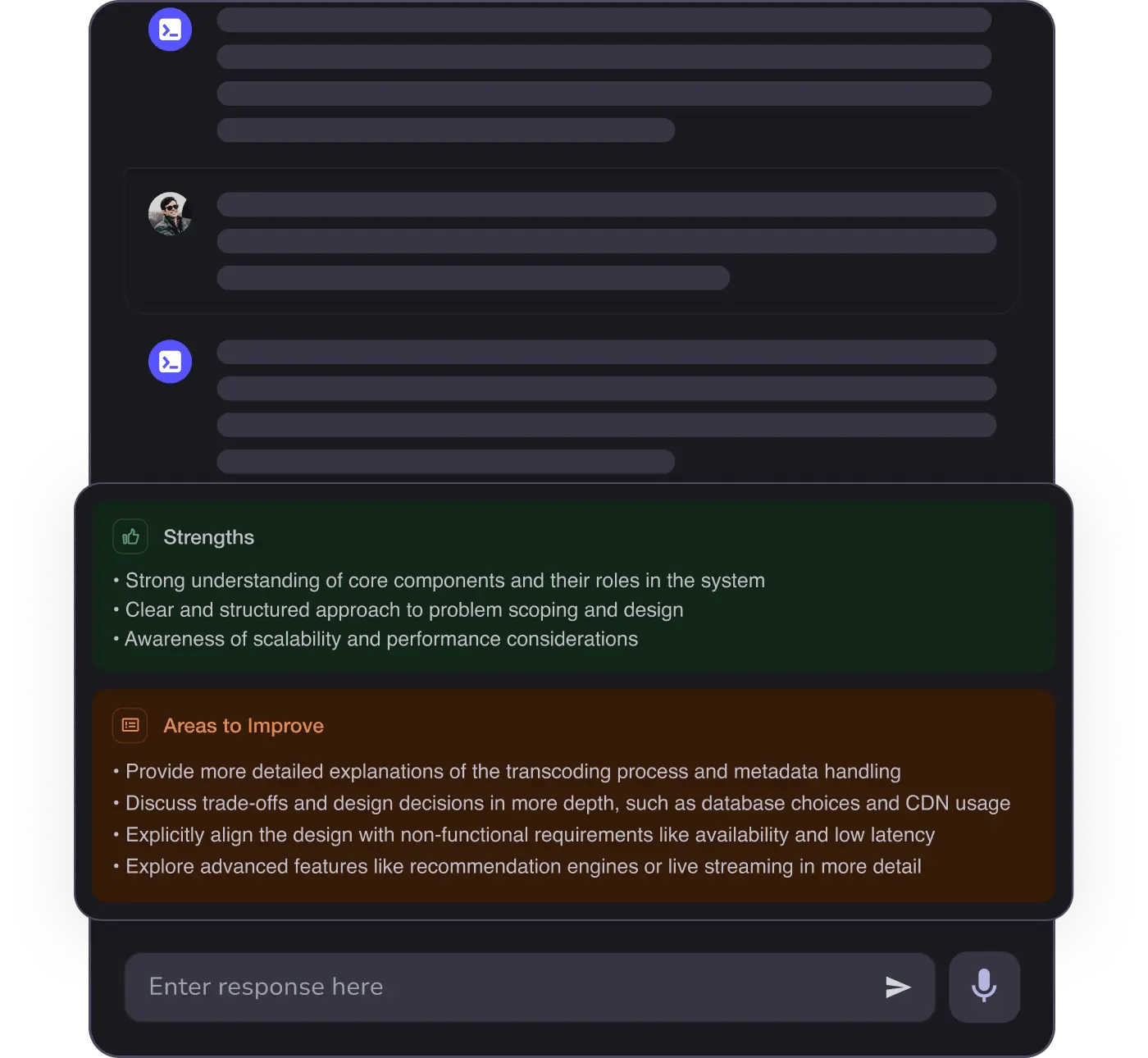

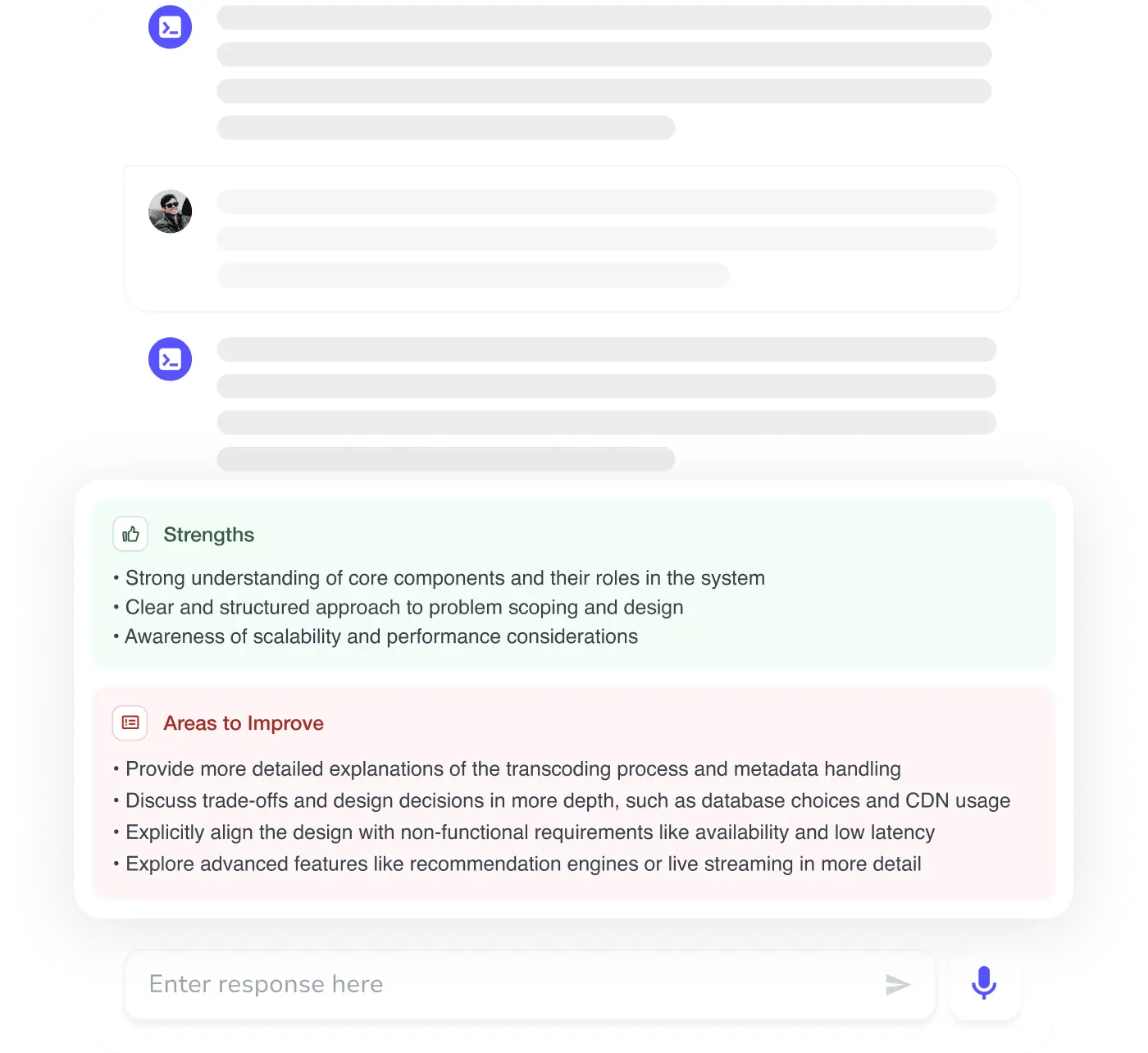

MAANG+ Interview Prep

AI Mock Interviews simulate every technical loop at top companies

Free Resources