LLM Evaluation: Building Reliable AI Systems at Scale

Learn to capture traces, generate synthetic data, evaluate agents and RAG systems, and build production-ready testing workflows so your LLM apps stay reliable and scalable.

Rating: 4.4

16 Lessons

2h

Updated 1 month ago

LEARNING OBJECTIVES

- An understanding of systematic LLM evaluation and the critical role of traces and error analysis

- Hands-on experience capturing and reviewing complete traces to identify system failures

- Proficiency in generating structured synthetic data for edge-case testing and diverse behavior analysis

- The ability to design binary pass/fail evaluations, which often outperform misleading numeric scales

- The ability to manage prompts as versioned system artifacts within an evaluated architecture

- Working knowledge of specialized evaluation for multi-turn conversations and agentic workflows

Learning Roadmap

1. Foundations of AI Evaluation

Learn why impressive demos fail without systematic evaluation, and how traces and error analysis form the foundation of building reliable LLM systems.

2. Building the Evaluation Workflow

Learn how to capture complete traces, generate structured synthetic data to expose diverse behaviors, and turn real failures into focused evaluations.

3. Scaling Evaluation Beyond the Basics

3 Lessons

Learn how to design evaluations that avoid misleading metrics, treat prompts as versioned system artifacts, and separate guardrails from evaluators.

4. Evaluating Real Systems in Production

3 Lessons

Learn how to evaluate full conversations, turn recurring failures into reproducible fixes, and debug RAG systems using four simple checks.

5. Wrap Up

4 Lessons

Learn how to make evaluation an ongoing practice, use metrics wisely, and keep your AI system reliable as it scales.

Certificate of Completion

Showcase your accomplishment by sharing your certificate of completion.

Developed by MAANG Engineers

ABOUT THIS COURSE

This course provides a roadmap for building reliable, production-ready LLM systems through rigorous evaluation. You’ll start by learning why systematic evaluation matters and how to use traces and error analysis to understand model behavior.

You’ll build an evaluation workflow by capturing real failures and generating synthetic data for edge cases. You’ll avoid traps like misleading similarity metrics and learn why simple binary evaluations often beat complex numeric scales. You’ll also cover architectural best practices, including where prompts fit and how to keep guardrails separate from evaluators.

Next, you’ll evaluate complex systems in production: scoring multi-turn conversations, validating agent workflows, and diagnosing common RAG failure modes. You’ll also learn how tools like LangSmith work internally, including what they measure and how they compute scores. By the end, you’ll integrate evaluation into development with CI checks and regression tests to keep your AI system stable as usage and complexity grow.

ABOUT THE AUTHOR

Khayyam Hashmi

Computer scientist and specialist in generative AI and machine learning. VP of Technical Content @ educative.io.

Trusted by 2.9 million developers

- Anthony Walker, @_webarchitect_

- Evan Dunbar, ML Engineer

- Carlos Matias La Borde, Software Developer

- Souvik Kundu, Front-end Developer

- Vinay Krishnaiah, Software Developer

Built for 10x Developers

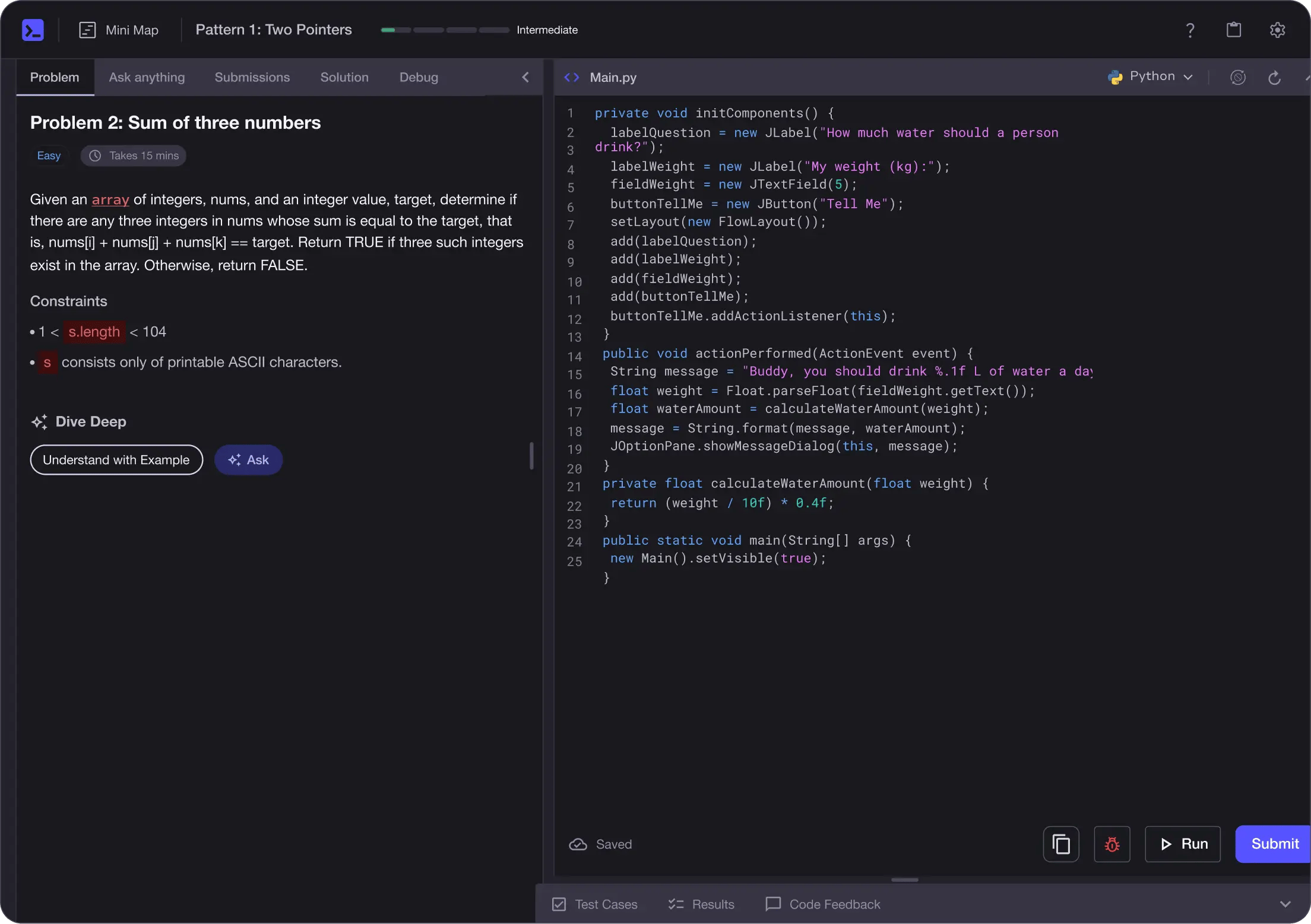

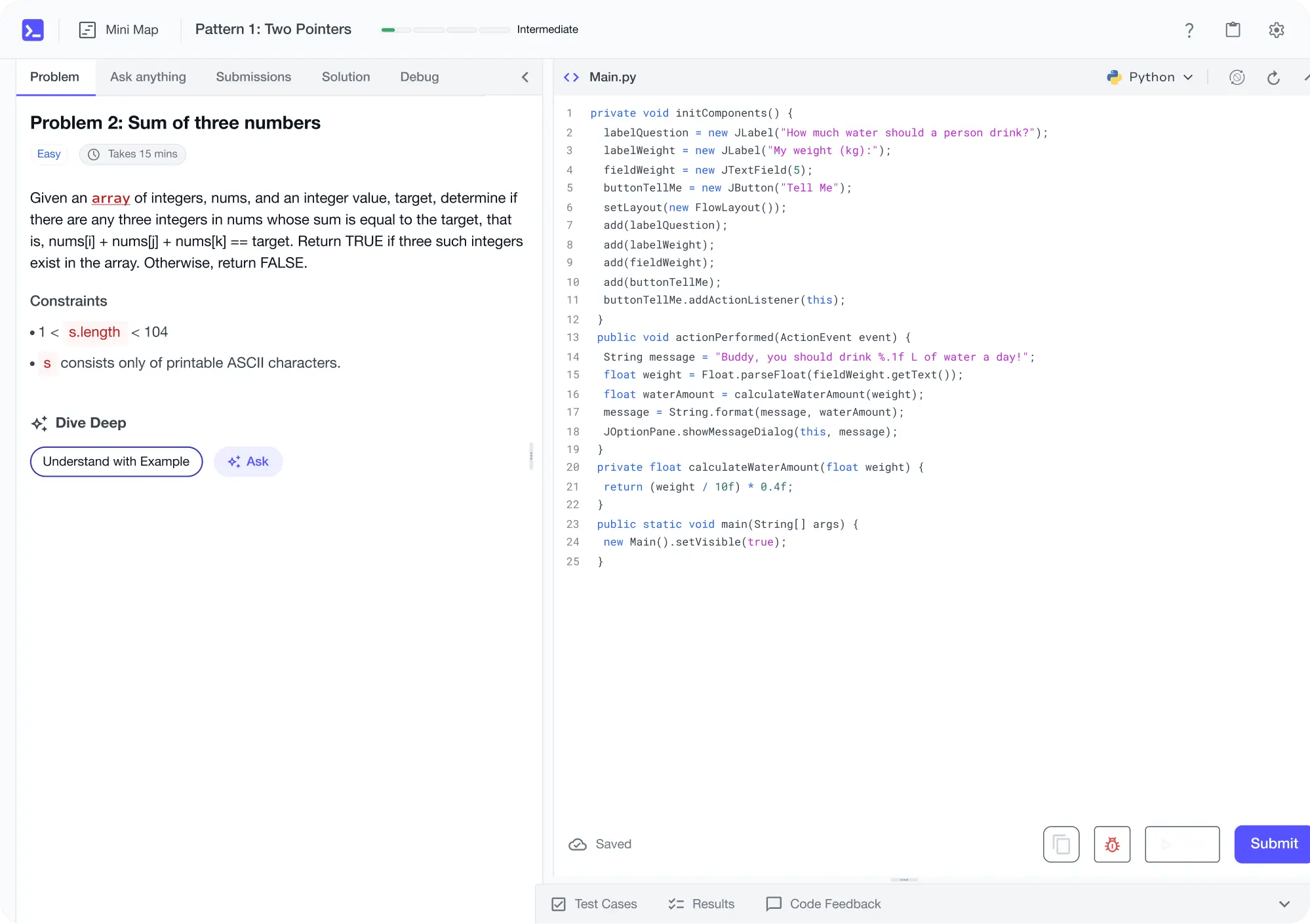

No Passive Learning

Learn by building with project-based lessons and an in-browser code editor

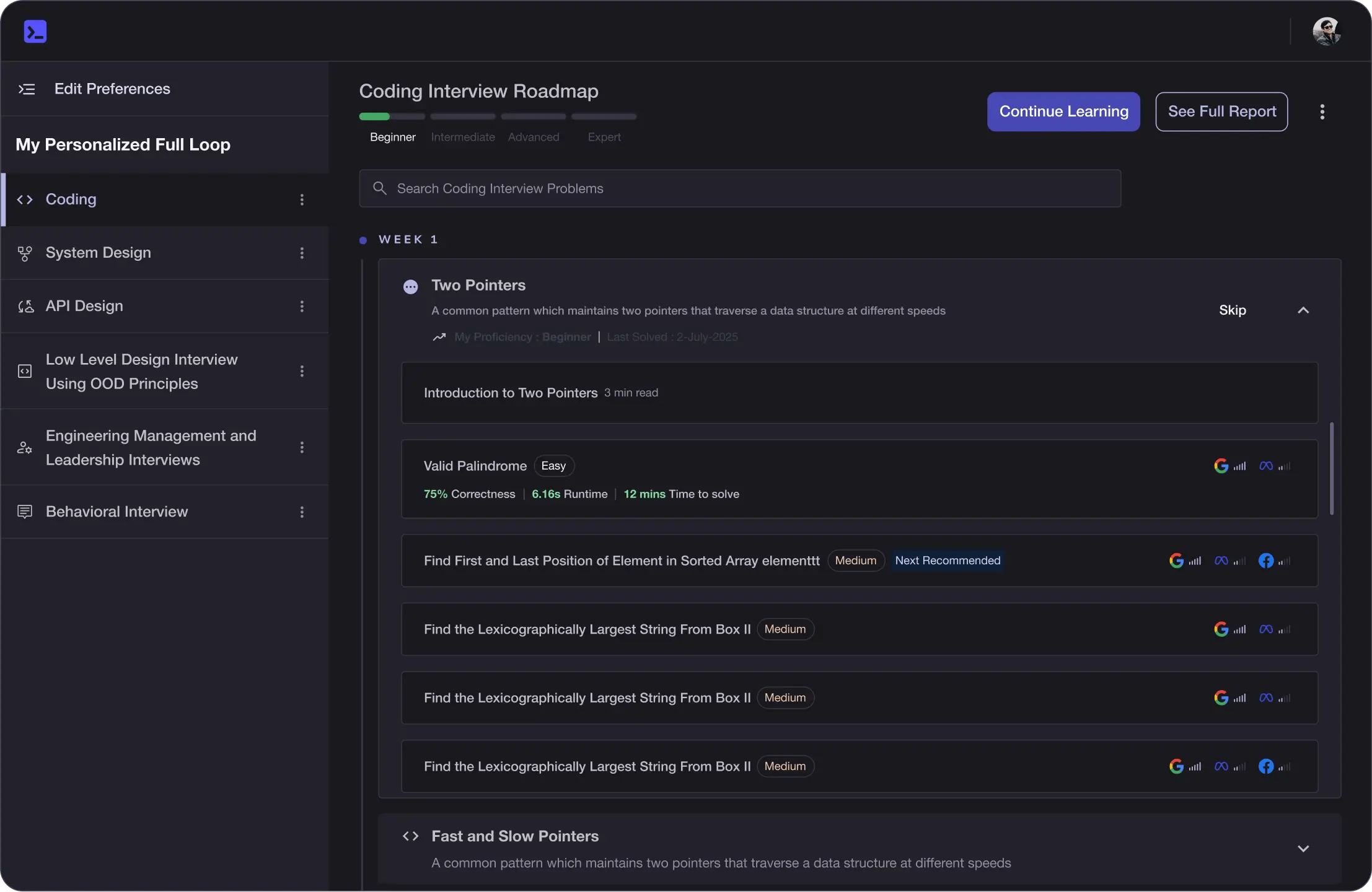

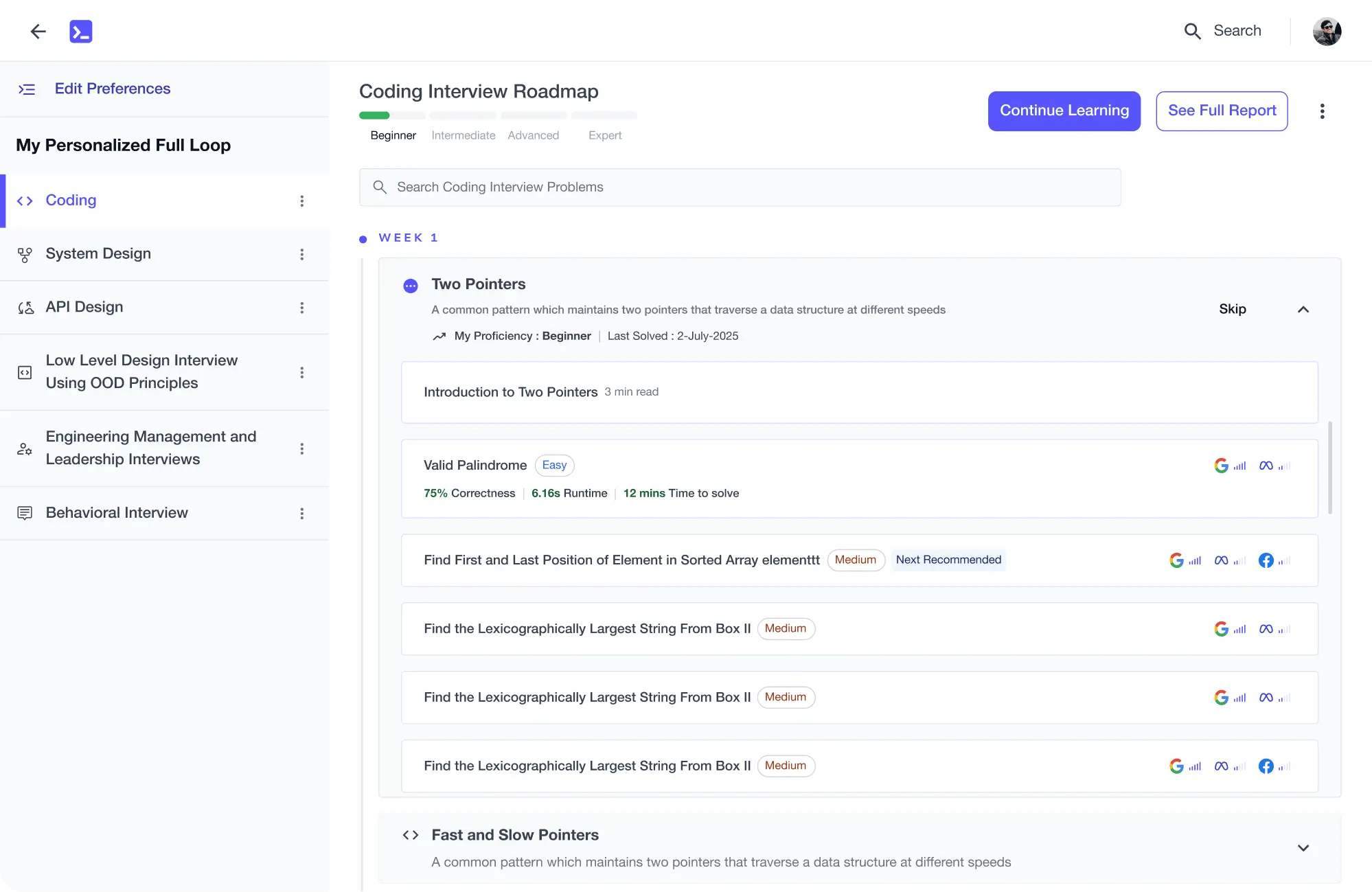

Personalized Roadmaps

The platform adapts to your strengths and skill gaps as you go

Future-proof Your Career

Get hands-on with in-demand skills

AI Code Mentor

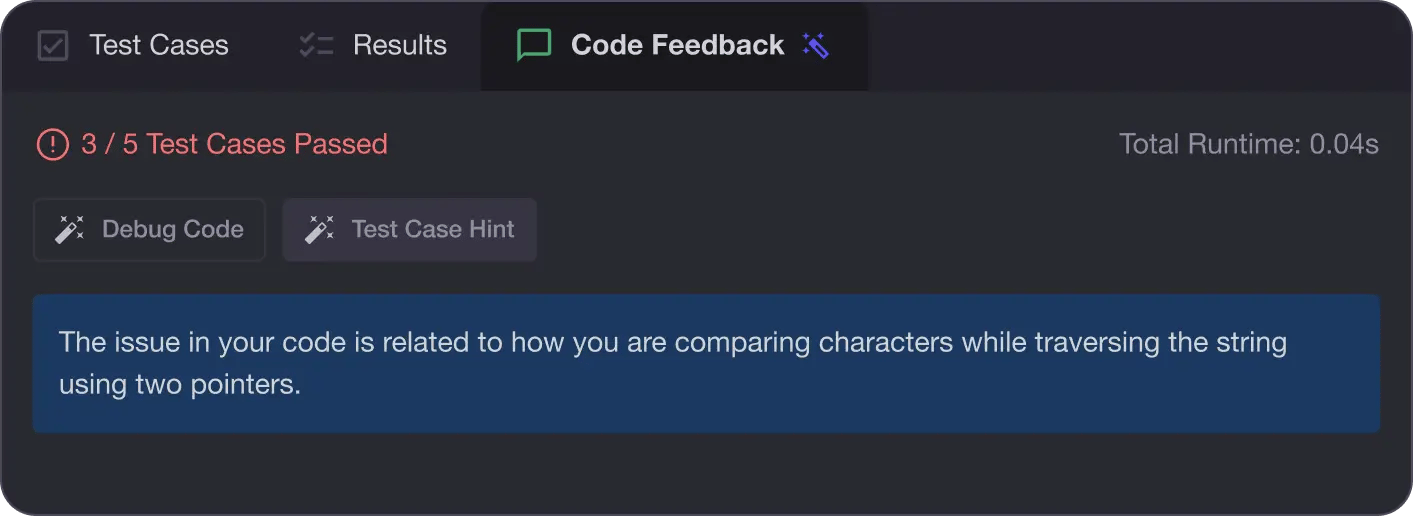

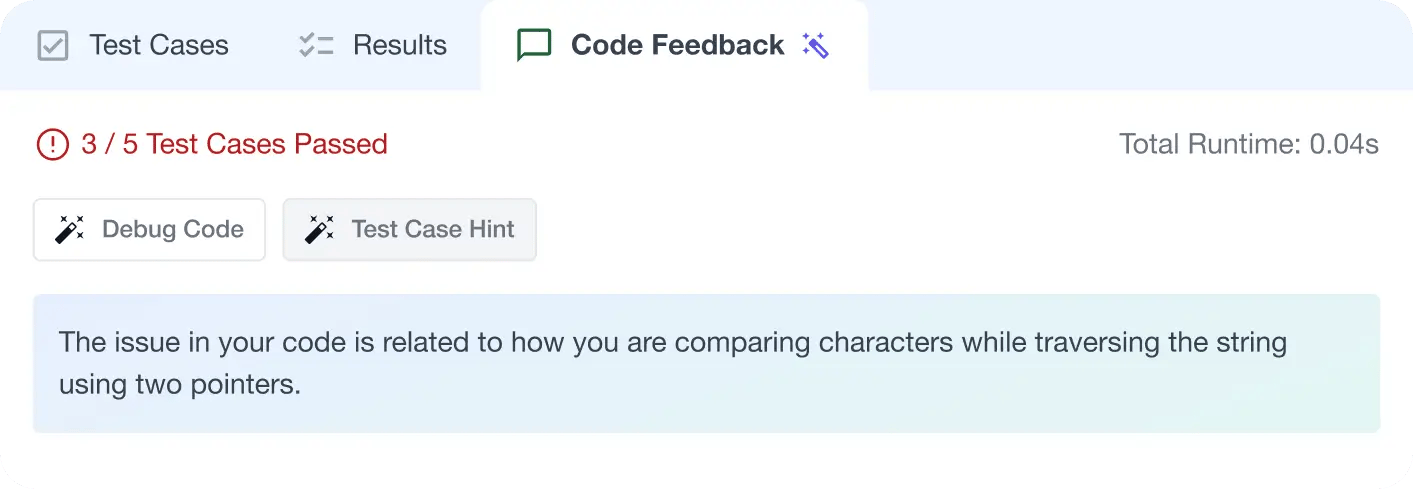

Write better code with AI feedback, smart debugging, and "Ask AI"

MAANG+ Interview Prep

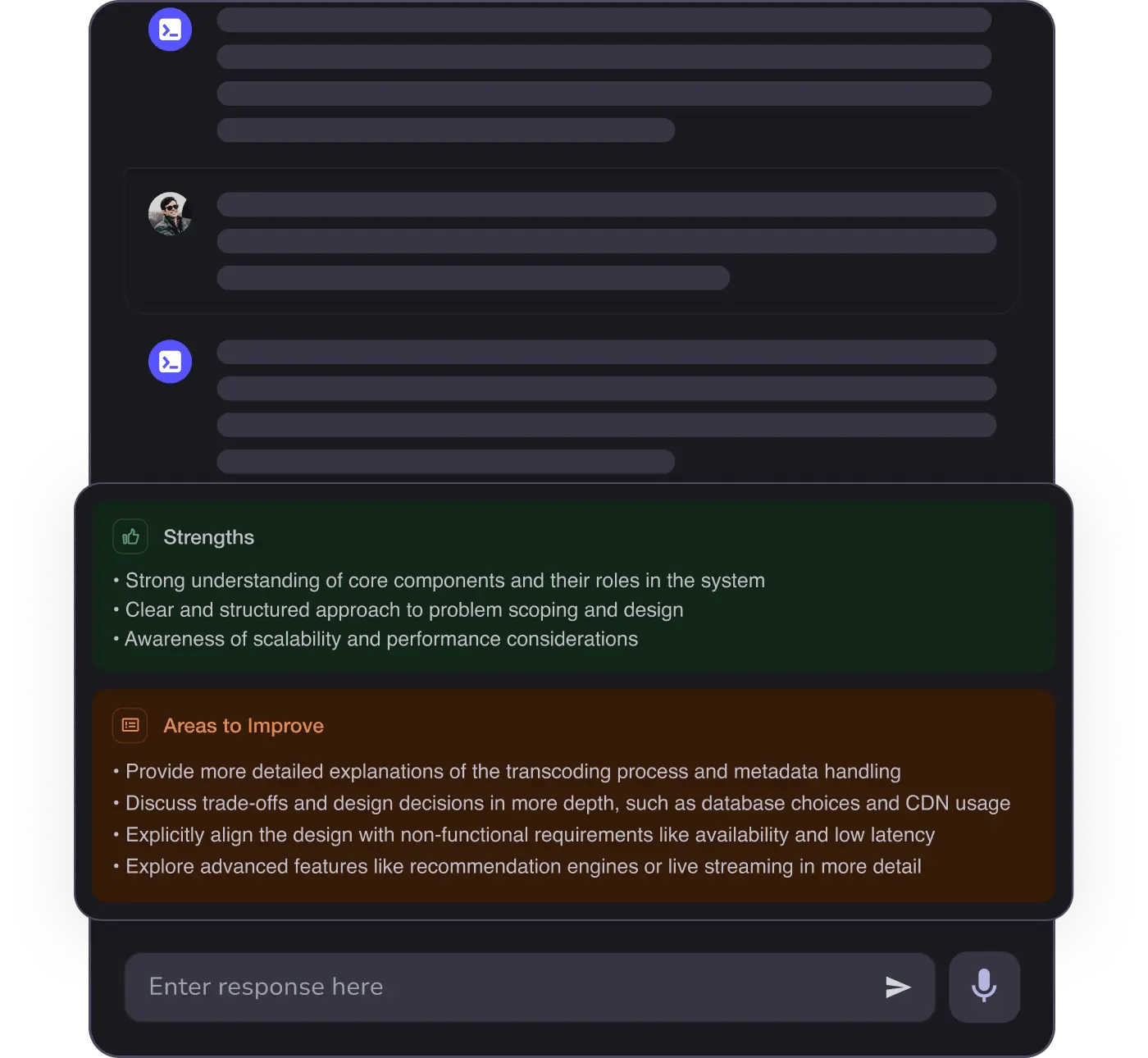

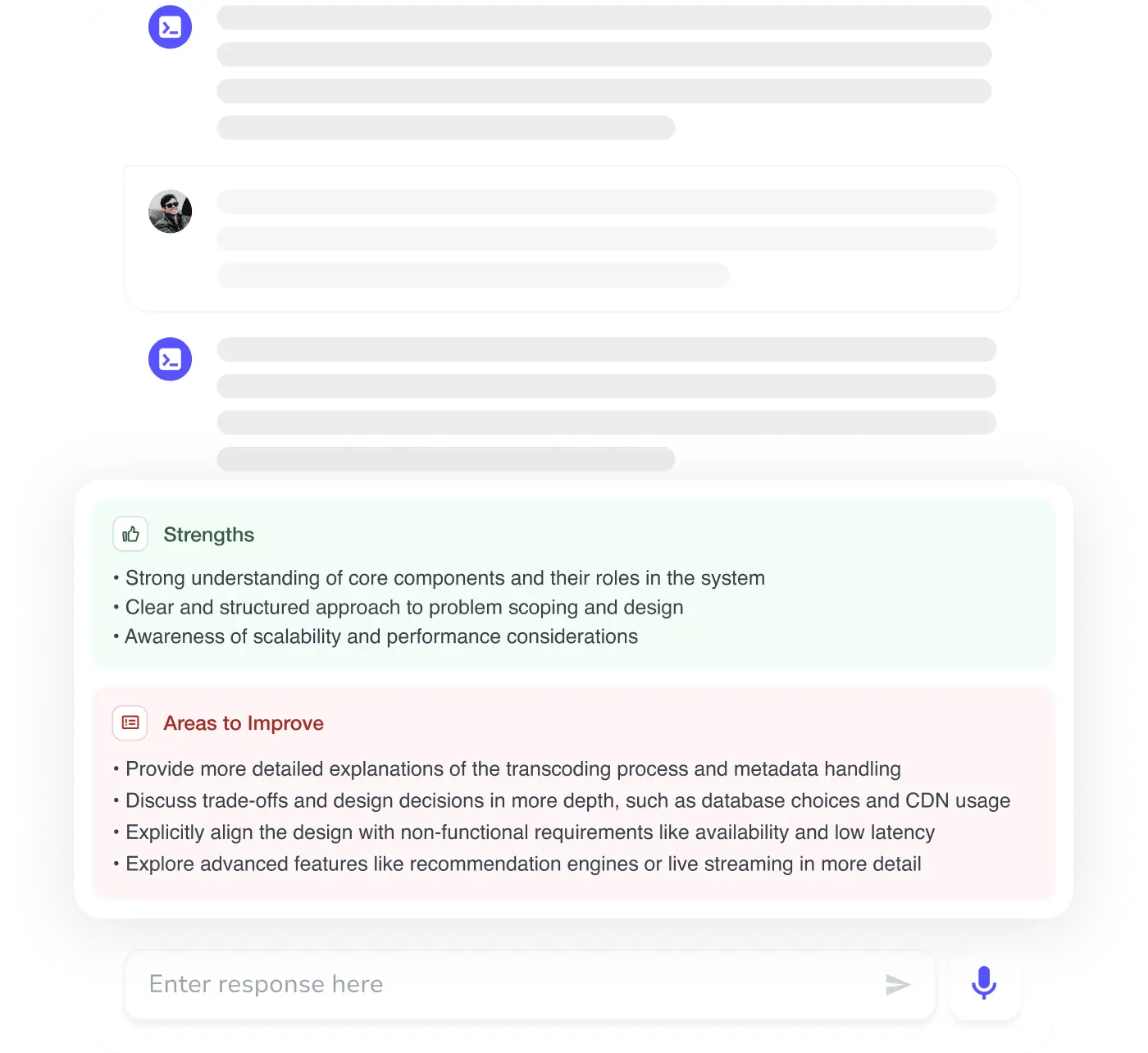

AI Mock Interviews simulate every technical loop at top companies