Transferring Data with ETL

Master ETL processes, explore data extraction from MySQL, PostgreSQL, and MongoDB, and learn about scheduling and automating ETL pipelines using tools like Apache Airflow and Python.

57 Lessons

2 Projects

10h

Join 3 million developers at

LEARNING OBJECTIVES

- An understanding of the extract, transform, and load steps in an ETL pipeline

- Hands-on experience implementing and orchestrating ETL pipelines

- An understanding of databases, data warehousing, data processing, and data ingestion

- Hands-on experience with ETL tools such as Python, SQL, Apache Spark, and Apache Airflow

Learning Roadmap

1.

Introduction

Get familiar with building ETL pipelines, their stages, and practical data transformation examples.

- Getting Started

- ETL Pipeline Stages

- What Is an ETL Pipeline?

- A New Paradigm—ELT

- ETL Example—Extraction

- ETL Transformation Example: Addressing Data Quality Issue

- ETL Transformation Example: Handling Missing Values and Data

- ETL Transformation Example: Sorting and Finalizing the Data

- ETL Example—Load

- ETL Example—Scheduling

- Batch vs. Stream Processing

- Data Warehouse

- Examples and Use Cases

- Quiz: ETL Pipelines

2.

E: Extract

Get started with techniques for data extraction from various sources including databases, APIs, and web scraping.

- Introduction

- Data Extraction Methods Overview

- Extracting Data with Web Scraping

- Web Scraping Exercise: Reading the Data

- Web Scraping Exercise: DataFrames to CSV

- Extraction Using a REST API

- Exercise: Extracting Data with a REST API

- Full Extraction From MySQL Database

- Incremental Extraction From MySQL Database

- Extraction From MySQL’s Binary Log

- Extract From PostgreSQL Database

- Extraction From Google BigQuery

- Exercise: Databases

- Quiz: Extracting Data

3.

T: Transform

11 Lessons

Master the steps to transform raw data into usable formats through cleaning, structuring, anonymizing, and aggregating.

4.

L: Load

9 Lessons

Grasp the fundamentals of loading transformed data into repositories, hosting options, and loading strategies.

5.

Orchestration

8 Lessons

Take a closer look at orchestrating ETL pipelines with Apache Airflow, deployment, and task management.

Certificate of Completion

Showcase your accomplishment by sharing your certificate of completion.

Developed by MAANG Engineers

ABOUT THIS COURSE

ETL stands for extract, transform, and load. It’s a collection of processes that combine data from various sources and load it into data warehouses or other data repositories. ETL is crucial for providing the data used for business intelligence and analytics.
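To make the three stages concrete, here is a minimal sketch of an ETL pipeline using only Python's standard library. It is an illustration under stated assumptions, not the course's own code: the `RAW_CSV` sample data, the `sales` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a real data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw data standing in for an export from a source system.
RAW_CSV = """id,name,amount
1, Alice ,10.50
2,Bob,
3, Carol ,7.25
"""


def extract(text):
    """Extract: read rows from a CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim names, drop rows with a missing amount, convert types."""
    cleaned = []
    for row in rows:
        if not row["amount"].strip():
            continue  # skip rows with a missing value
        cleaned.append(
            (int(row["id"]), row["name"].strip(), float(row["amount"]))
        )
    return cleaned


def load(records, conn):
    """Load: write the cleaned records into a target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales "
        "(id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", records)
    conn.commit()


# SQLite in memory is an assumption used here in place of a data warehouse.
conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)

rows = conn.execute("SELECT id, name, amount FROM sales ORDER BY id").fetchall()
print(rows)
```

Keeping each stage in its own function means the source, the cleaning rules, or the destination can be swapped out independently, which is also what makes a pipeline like this straightforward to schedule as separate tasks.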

In this course, you’ll experiment with extracting data from various database solutions such as MySQL, PostgreSQL, and MongoDB. You’ll use SQL, Python (including the pandas library), and Apache Spark to process data and load it into data repositories or cloud platforms such as Google Cloud Platform (GCP). Finally, you’ll learn how to schedule your ETL pipelines using cron jobs, or automate and monitor them using open-source tools like Apache Airflow.

After completing this course, you’ll have a strong grasp of various methods, tools, and techniques for transferring data from a source to its destination using ETL pipelines.

Built for 10x Developers

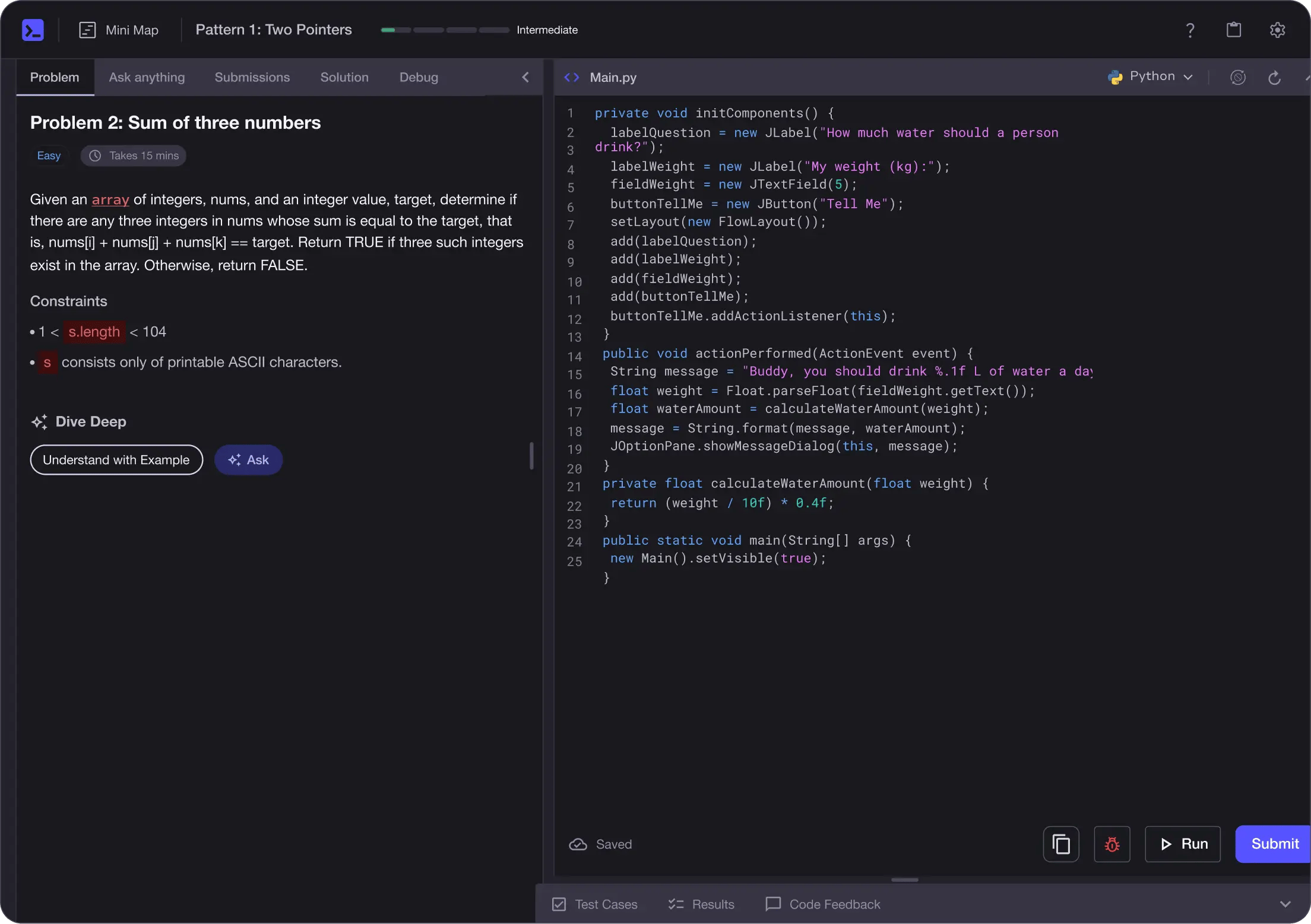

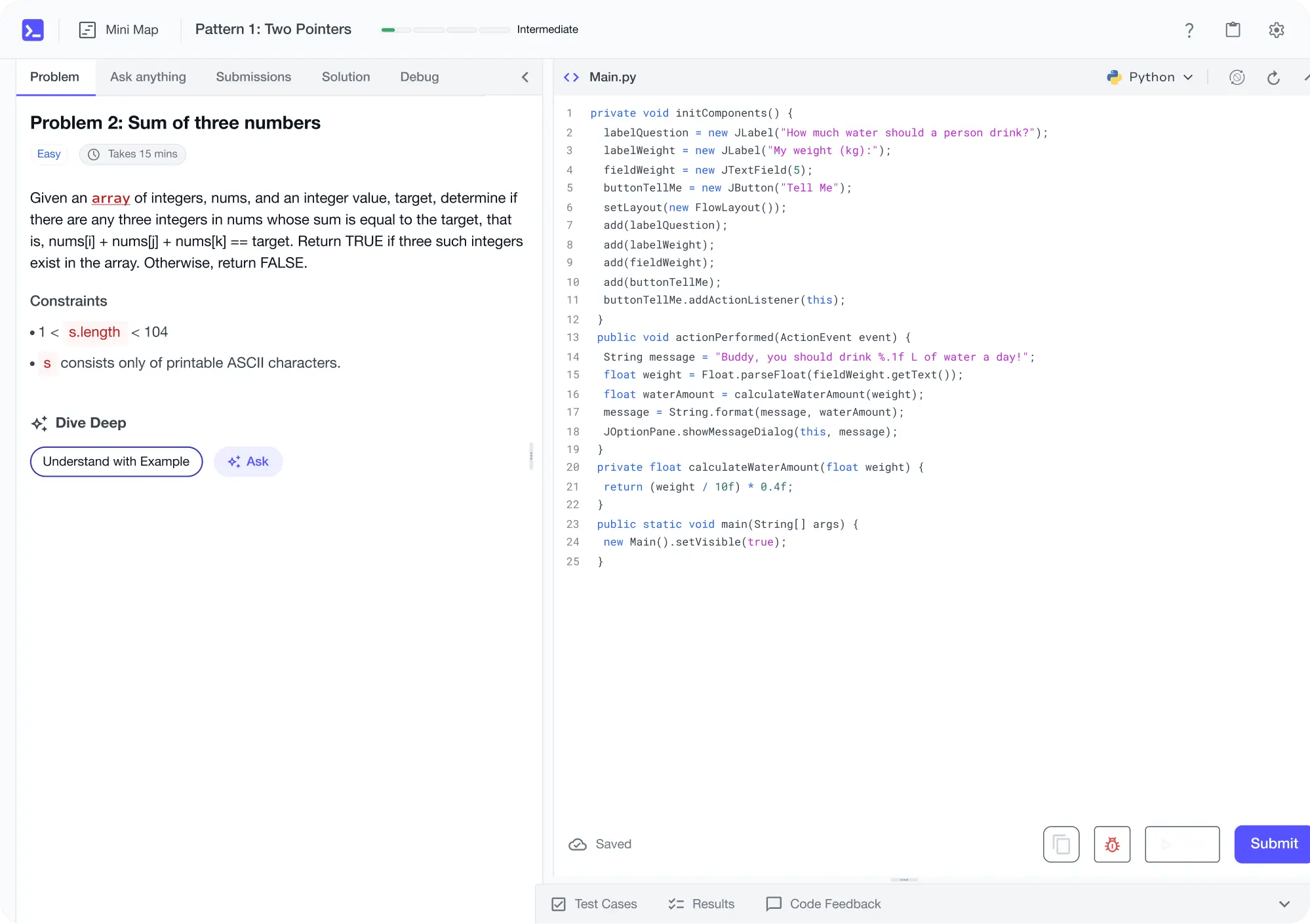

No Passive Learning

Learn by building with project-based lessons and in-browser code editor

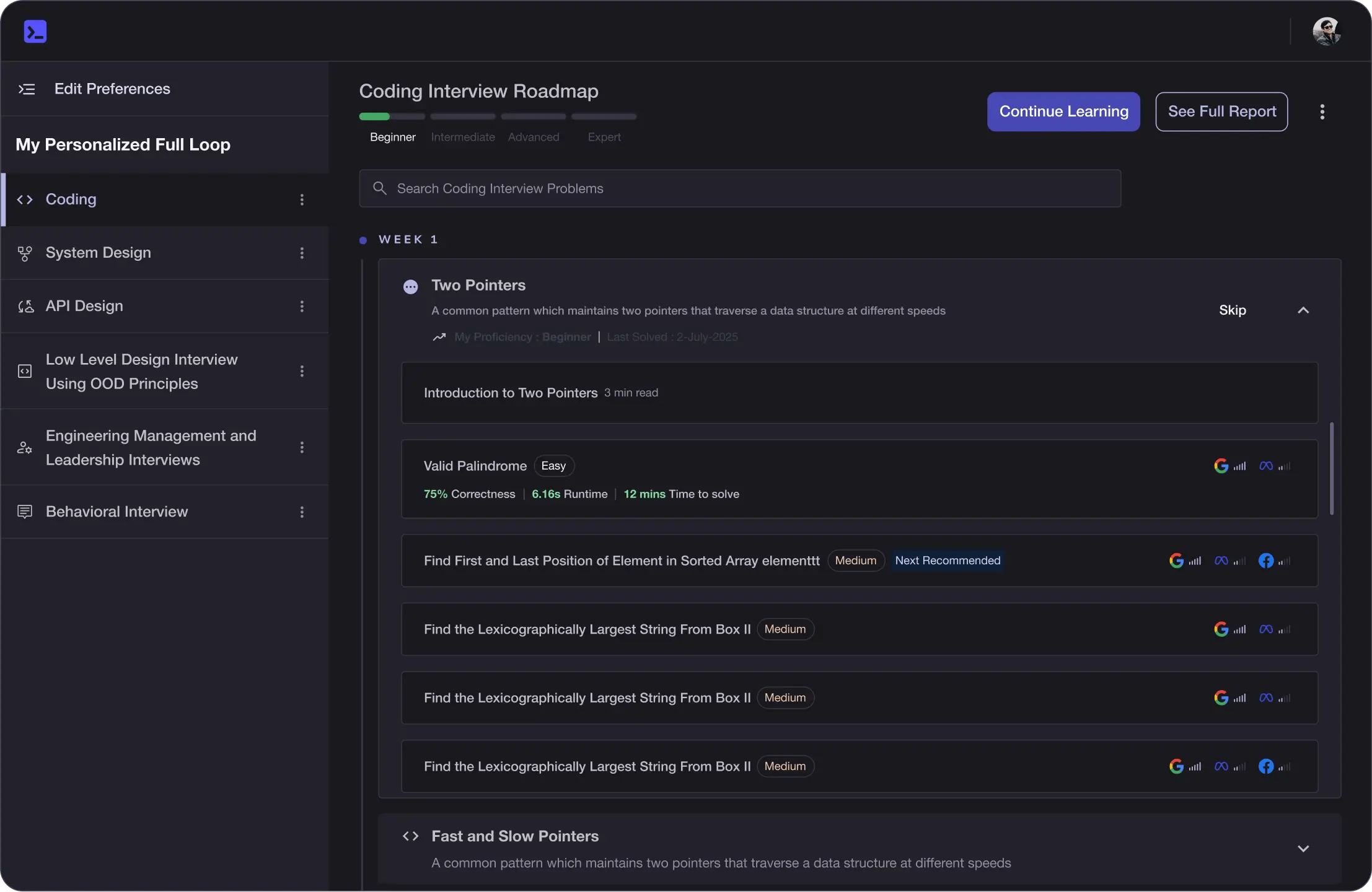

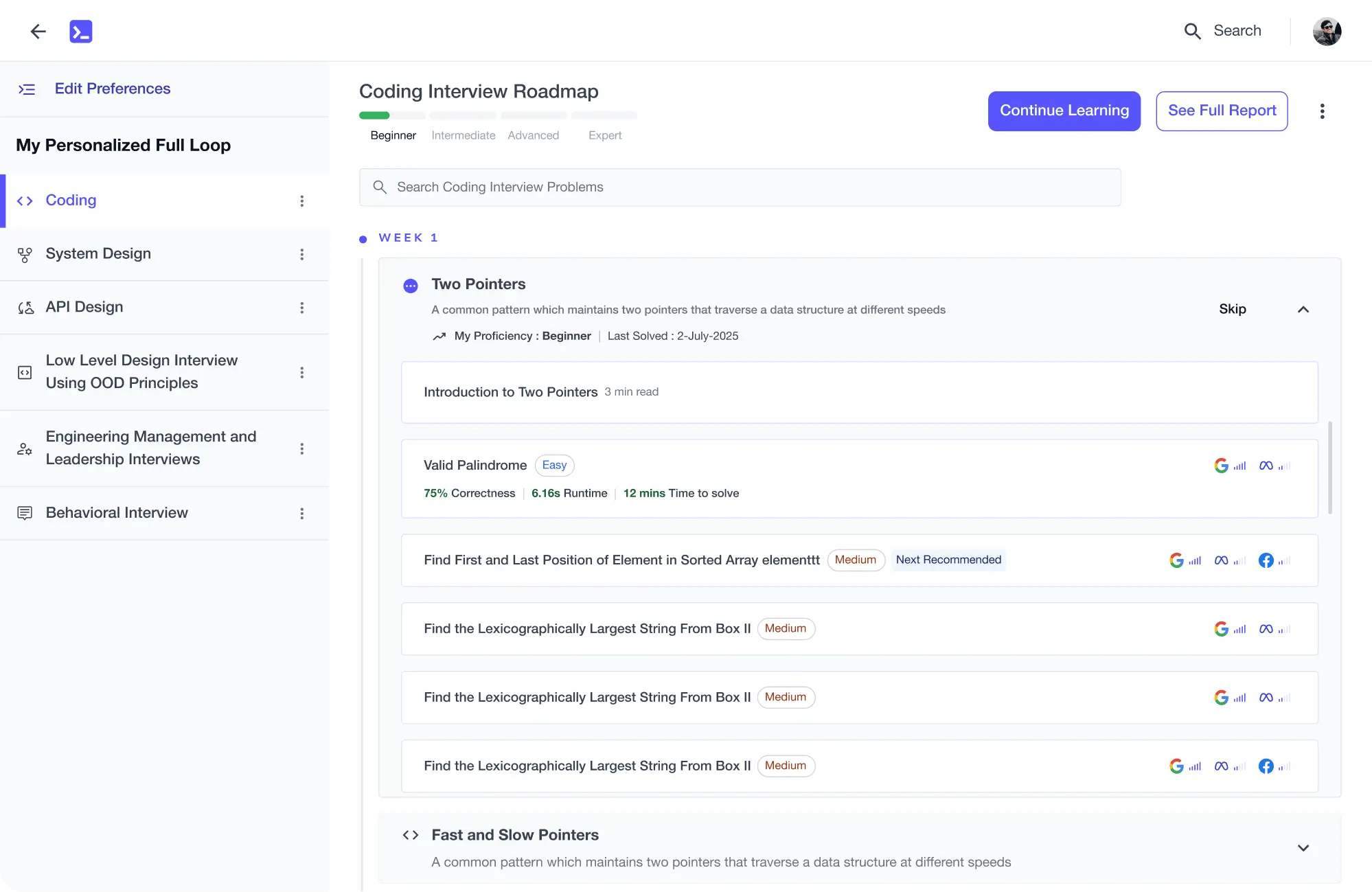

Personalized Roadmaps

The platform adapts to your strengths & skills gaps as you go

Future-proof Your Career

Get hands-on with in-demand skills

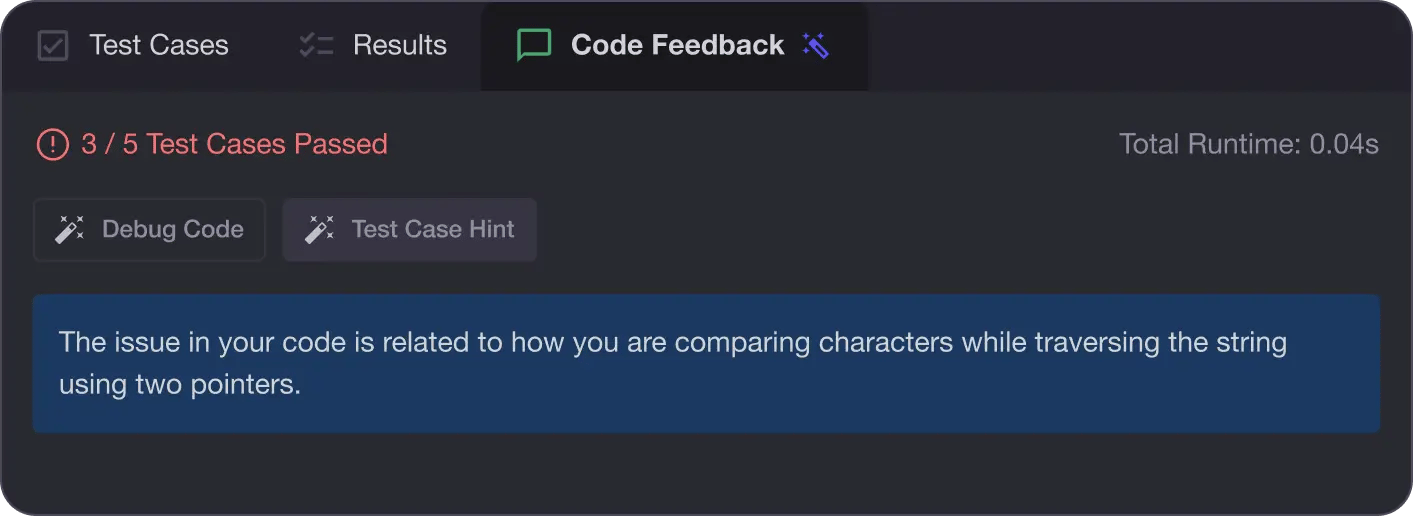

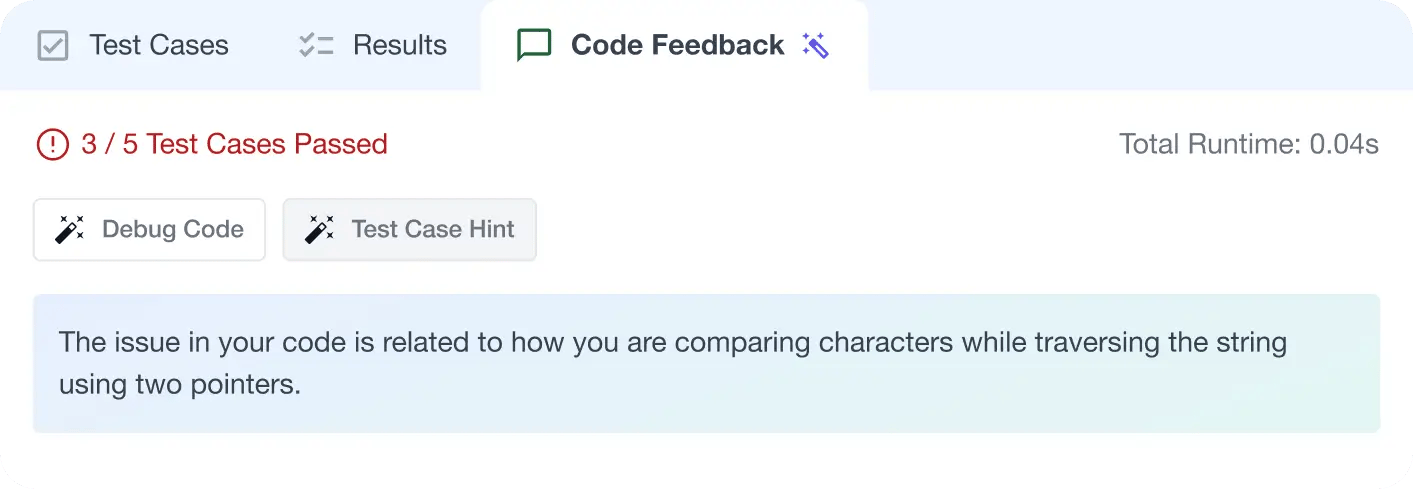

AI Code Mentor

Write better code with AI feedback, smart debugging, and "Ask AI"

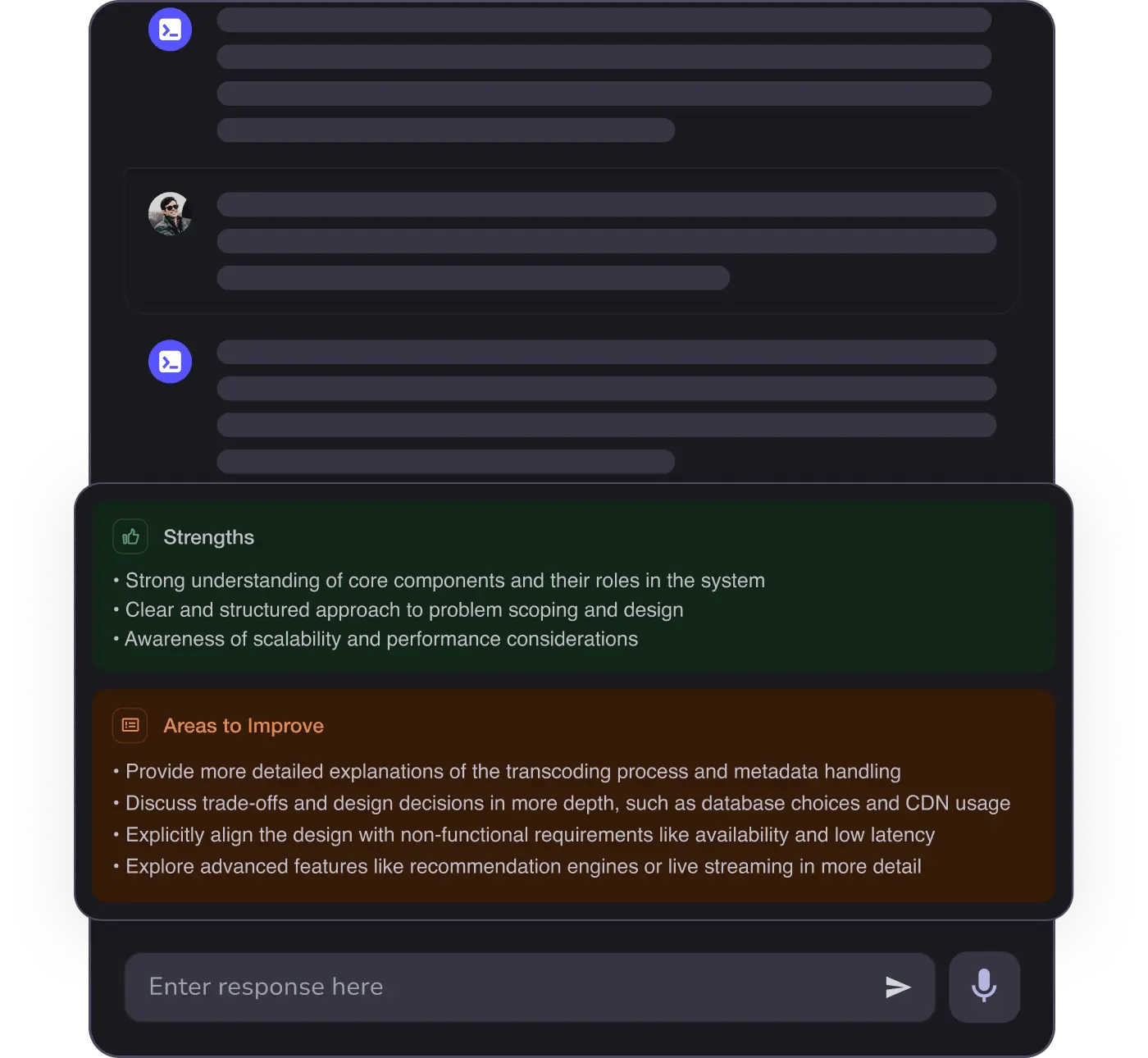

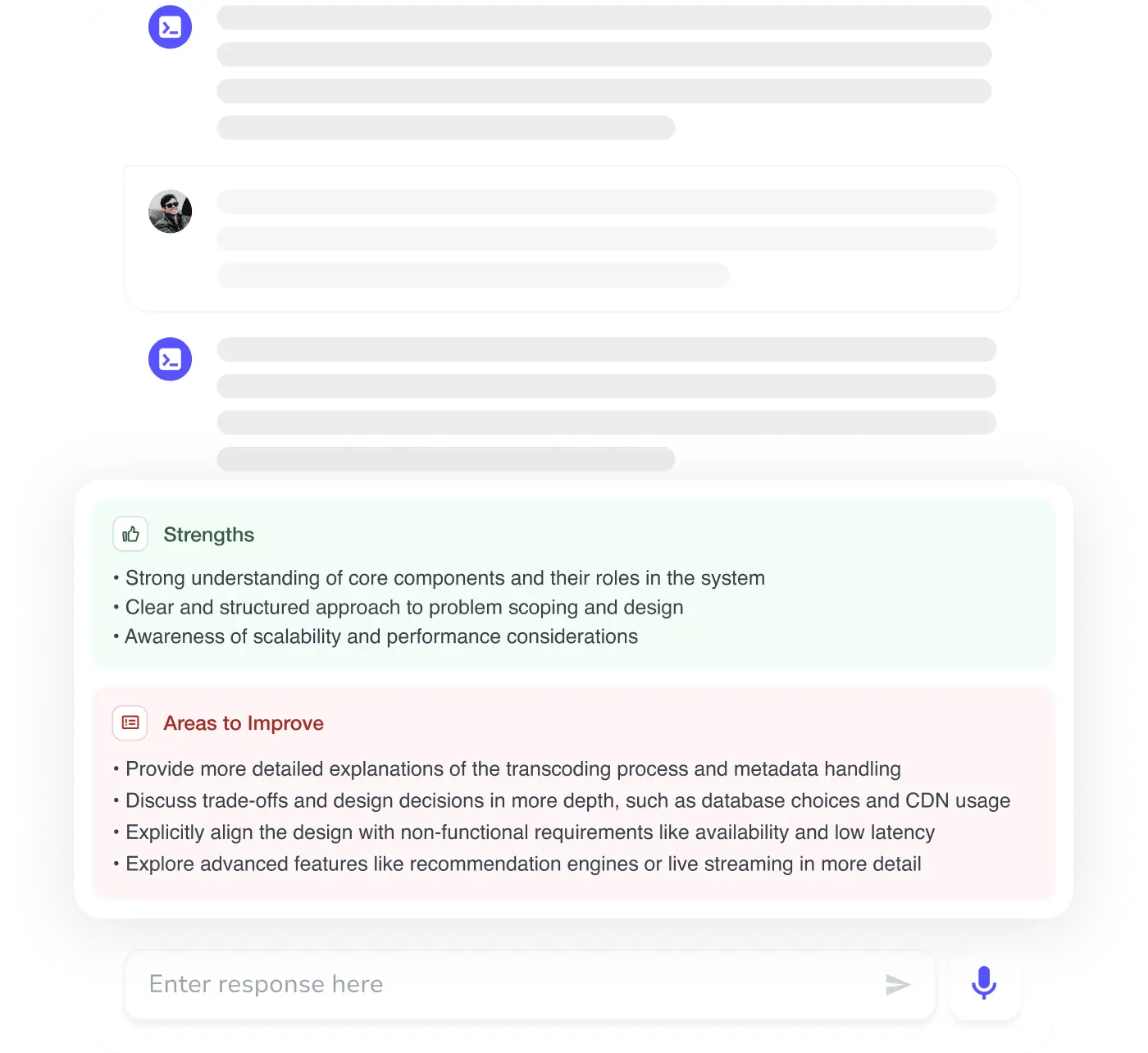

MAANG+ Interview Prep

AI Mock Interviews simulate every technical loop at top companies

Free Resources