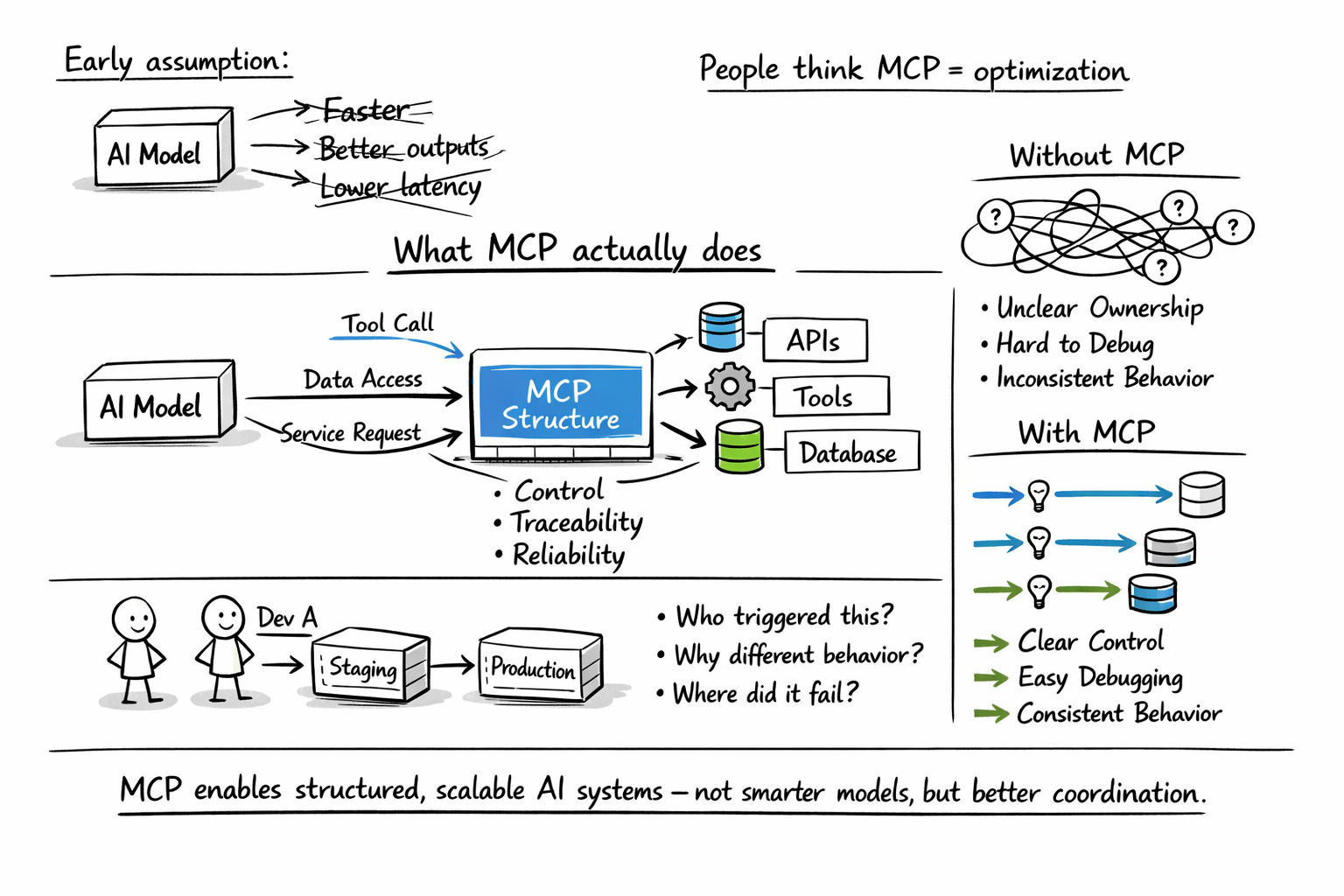

When people first encounter MCP (Model Context Protocol), they often assume it’s another optimization layer for improving model performance or reducing latency. In reality, MCP addresses a very different class of problems: ones that only become visible once your AI system grows beyond a simple prototype. It does not make your model smarter, reduce token usage, or magically improve outputs. Instead, MCP introduces structure into how AI systems interact with tools, services, and infrastructure, which becomes critical as complexity increases.

If you have only worked on small AI experiments, this distinction may not feel important yet. However, the moment your system starts integrating multiple APIs, handling sensitive operations, or supporting multiple developers, the lack of structure becomes painfully obvious. Questions start to emerge that are not about model accuracy but about control, traceability, and reliability. Who triggered a specific action? Why did a tool behave differently in staging versus production? Where exactly did a failure occur? These are not theoretical concerns—they are operational realities.

As AI systems evolve from lightweight assistants into internal platforms, the benefits of MCP become clear only once scale and coordination enter the picture. At that point, the difference between loosely connected AI pipelines and structured MCP-driven systems is not subtle: it is the difference between something that barely holds together and something that can be maintained and extended with confidence over time.

What MCP actually changes in a real workflow

To understand the value of MCP, it helps to first look at how most AI systems are built without it. In many implementations, tool usage is handled through custom glue code layered on top of model outputs. The model generates structured text, often in JSON form, which is then parsed by an orchestration layer. That orchestration layer triggers backend logic, executes API calls, and attempts to log activity while enforcing permissions in an ad hoc manner. Each new tool or integration requires touching multiple parts of the system, which gradually increases complexity.
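
To make the pattern concrete, here is a minimal sketch of that kind of glue code. Everything in it is hypothetical: the tool names (`get_logs`, `create_ticket`), the JSON shape the model is assumed to emit, and the `ALLOWED_USERS` list are illustrations, not any particular framework's API.

```python
import json

# Hypothetical backend functions; a real system would call external APIs here.
def get_logs(service: str) -> str:
    return f"logs for {service}"

def create_ticket(title: str) -> str:
    return f"ticket created: {title}"

ALLOWED_USERS = {"alice"}  # assumption: a hand-rolled permission list

def handle_model_output(raw: str, user: str) -> str:
    """Parse the model's JSON text and dispatch to a tool by hand."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return "error: model output was not valid JSON"

    name = call.get("tool")
    args = call.get("args", {})

    # Every tool gets its own branch, with its own parsing and checks.
    if name == "get_logs":
        print(f"[log] {user} -> get_logs")  # logging exists only in this branch
        return get_logs(args["service"])
    if name == "create_ticket":
        if user not in ALLOWED_USERS:  # permission check exists only in this branch
            return "error: not allowed"
        return create_ticket(args["title"])
    return f"error: unknown tool {name!r}"
```

Note how logging and authorization each appear in only one branch. Nothing forces the next tool to include either, which is how the inconsistencies described above tend to creep in.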

This approach works well in the early stages because it is flexible and quick to implement. However, as the number of integrations grows, the system becomes harder to reason about. Tool schemas are often embedded directly into prompts, which makes them fragile and difficult to maintain. Logging is inconsistent, and authorization checks are scattered across different layers of the application. Over time, even small changes can introduce unexpected side effects, and debugging becomes increasingly time-consuming.

MCP fundamentally changes this workflow by introducing a structured contract between the AI system and the tools it interacts with. Instead of embedding tool logic into orchestration code, tools are exposed through a dedicated server with clearly defined schemas. The AI client dynamically discovers available capabilities and invokes them through a standardized protocol. Execution, authentication, and logging are no longer scattered concerns—they are centralized at a single boundary. This shift may not seem dramatic at first glance, but it significantly reduces complexity as the system evolves.
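
The shape of that contract can be sketched in plain Python. MCP messages are JSON-RPC 2.0, and the protocol's tool methods are named `tools/list` and `tools/call`; everything else below (the `ToolServer` class, the `get_logs` tool, the audit log) is a hypothetical in-process stand-in rather than the official SDK, and the required-field check is a simplification of real JSON Schema validation.

```python
from __future__ import annotations

from typing import Any, Callable


class ToolServer:
    """In-process stand-in for an MCP server. Not the official SDK."""

    def __init__(self) -> None:
        self._tools: dict[str, dict[str, Any]] = {}
        self.audit_log: list[str] = []

    def register(self, name: str, description: str,
                 input_schema: dict[str, Any], fn: Callable[..., str]) -> None:
        self._tools[name] = {"description": description,
                             "inputSchema": input_schema, "fn": fn}

    def handle(self, request: dict[str, Any]) -> dict[str, Any]:
        """Single boundary for discovery, validation, logging, and execution."""
        req_id = request.get("id")
        method = request.get("method")
        params = request.get("params", {})

        def ok(result: dict[str, Any]) -> dict[str, Any]:
            return {"jsonrpc": "2.0", "id": req_id, "result": result}

        def fail(code: int, message: str) -> dict[str, Any]:
            return {"jsonrpc": "2.0", "id": req_id,
                    "error": {"code": code, "message": message}}

        if method == "tools/list":
            tools = [{"name": n, "description": t["description"],
                      "inputSchema": t["inputSchema"]}
                     for n, t in self._tools.items()]
            return ok({"tools": tools})

        if method == "tools/call":
            name = params.get("name")
            arguments = params.get("arguments", {})
            tool = self._tools.get(name)
            if tool is None:
                return fail(-32602, f"Unknown tool: {name}")
            required = tool["inputSchema"].get("required", [])
            missing = [key for key in required if key not in arguments]
            if missing:
                return fail(-32602, f"Missing arguments: {missing}")
            self.audit_log.append(f"tools/call {name}")  # one place to log
            text = tool["fn"](**arguments)
            return ok({"content": [{"type": "text", "text": text}],
                       "isError": False})

        return fail(-32601, f"Method not found: {method}")


server = ToolServer()
server.register(
    "get_logs",
    "Fetch recent logs for a service",
    {"type": "object",
     "properties": {"service": {"type": "string"}},
     "required": ["service"]},
    lambda service: f"logs for {service}",
)

# The client discovers tools instead of assuming them, then calls by name.
listing = server.handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
reply = server.handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                       "params": {"name": "get_logs",
                                  "arguments": {"service": "api"}}})
print(listing["result"]["tools"][0]["name"])
print(reply["result"]["content"][0]["text"])
```

The client side never branches on tool names. Adding a tool means one more `register` call on the server, with validation and logging inherited from `handle`.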

Loosely connected systems vs MCP-driven systems

The contrast between traditional AI integrations and MCP-based systems becomes more pronounced when you examine their structure in detail. In loosely connected systems, the model prompt effectively defines how tools behave, which creates tight coupling between reasoning and execution. Each tool often requires custom parsing logic, and authorization checks are implemented inconsistently across different parts of the application. Environment-specific configuration, such as API endpoints or credentials, is frequently embedded directly in the application code, making transitions between development and production environments more difficult.

In MCP-driven systems, these responsibilities are reorganized into a more coherent architecture. Tools are registered on a server with explicit schemas, which makes their behavior predictable and easier to manage. The AI client does not assume what tools are available; instead, it queries capabilities dynamically. Authorization is enforced at a clear boundary, and environment-specific configuration is handled at the server level rather than scattered throughout the codebase. This decoupling allows the reasoning layer to remain stable even as tools evolve.
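
One way to picture server-level environment handling is the sketch below. The environment names, URLs, and the `query_metrics` tool are assumptions made for illustration; the point is only that the tool's client-facing signature stays the same while the server decides which endpoint and timeout apply.

```python
# Hypothetical per-environment settings, owned by the tool server.
ENVIRONMENTS = {
    "dev": {"metrics_url": "http://localhost:9090/query", "timeout_s": 30},
    "prod": {"metrics_url": "https://metrics.example.internal/query", "timeout_s": 5},
}


def load_server_config(env: str) -> dict:
    """Resolve environment-specific settings once, at server startup."""
    if env not in ENVIRONMENTS:
        raise ValueError(f"unknown environment: {env!r}")
    return ENVIRONMENTS[env]


def make_query_metrics_tool(config: dict):
    """Build the tool with its configuration bound in by the server."""

    def query_metrics(query: str) -> str:
        # A real tool would issue an HTTP request here; this sketch only
        # describes the request it would make.
        return (f"GET {config['metrics_url']}?query={query} "
                f"(timeout {config['timeout_s']}s)")

    return query_metrics


# Same tool name and same arguments in both environments.
dev_tool = make_query_metrics_tool(load_server_config("dev"))
prod_tool = make_query_metrics_tool(load_server_config("prod"))
print(dev_tool("up"))
print(prod_tool("up"))
```

Because the client only ever passes `query`, moving from development to production changes nothing in prompts or orchestration code.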

| Dimension | Traditional AI integration | MCP-based integration | Practical impact |
| --- | --- | --- | --- |
| Tool invocation | Custom JSON parsing | Standardized protocol | Less fragile orchestration |
| Authorization | Mixed into app logic | Centralized at server | Stronger policy control |
| Logging | Scattered across services | Unified at tool boundary | Better observability |
| Environment handling | Hard-coded endpoints | Server-level abstraction | Easier dev-to-prod transitions |
| Extensibility | Tight coupling | Decoupled tool evolution | Faster iteration at scale |

What this comparison reveals is that the primary advantage of MCP is not performance but architectural clarity. By separating concerns and enforcing structure, MCP makes systems easier to understand, extend, and operate. This clarity becomes increasingly valuable as the number of tools and integrations grows.

A narrative example: where MCP made a difference

A practical example illustrates this shift more clearly than any abstract explanation. A few months ago, I worked on an internal AI assistant designed for a backend engineering team. Initially, the system was simple and focused on a narrow set of tasks, such as summarizing pull requests, retrieving logs, and answering basic questions about system health. The implementation was straightforward, and tool calls were handled directly within the orchestration layer.

As the system gained traction, new capabilities were added. It began querying staging metrics, creating Jira tickets, posting summaries to Slack, and even triggering CI re-runs. Each new feature introduced additional logic, often implemented in slightly different ways. Some tools included permission checks, while others did not. Logging varied from one integration to another, and debugging required tracing requests across multiple services. The system still functioned, but it became increasingly difficult to maintain.

The turning point came when failures started to surface more frequently, and diagnosing them required significant effort. At that stage, we introduced MCP and moved tool definitions to a dedicated server. Each tool was defined with a clear schema, and authentication was handled consistently. Logging became centralized, which made it easier to trace actions and identify issues. The AI client no longer needed to understand how tools were implemented—it simply invoked them through the protocol.

The impact was not immediate in terms of speed or performance, but it was profound in terms of reliability and clarity. Adding new tools no longer required modifying the reasoning layer, and disabling tools did not involve rewriting prompts. Most importantly, we gained visibility into system behavior, which reduced confusion and made debugging far more manageable. This is the kind of improvement that only becomes visible once a system reaches a certain level of complexity.

Collaboration and team boundaries

Another significant benefit of MCP emerges when multiple teams are involved in building and maintaining an AI system. In loosely connected architectures, responsibilities are often blurred, which creates friction between different roles. AI engineers may hesitate to modify infrastructure-related code, while backend engineers may be reluctant to touch model orchestration logic. This overlap can slow down development and introduce inconsistencies.

MCP introduces clearer boundaries between these responsibilities. AI engineers can focus on reasoning, prompt design, and tool selection, while backend engineers are responsible for defining and maintaining tools. Platform teams can enforce policies such as authentication and logging at the server boundary. This separation of concerns reduces cognitive load and allows each team to operate more effectively within its domain.

In addition to improving collaboration, MCP also enables reuse across teams. Different teams can expose their own MCP servers while relying on the same client logic, which creates a shared contract for interacting with tools. This consistency makes it easier to scale development efforts and integrate new capabilities without disrupting existing workflows.
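
A rough sketch of that shared contract follows. The `TeamServer` and `SharedClient` classes and the two example tools are hypothetical in-process stand-ins, not part of any MCP SDK; a real client would speak the protocol to each server over a transport.

```python
from __future__ import annotations

from typing import Callable


class TeamServer:
    """Stand-in for one team's tool server (hypothetical, in-process)."""

    def __init__(self, name: str, tools: dict[str, Callable[..., str]]) -> None:
        self.name = name
        self._tools = tools

    def list_tools(self) -> list[str]:
        return sorted(self._tools)

    def call_tool(self, tool: str, arguments: dict) -> str:
        return self._tools[tool](**arguments)


class SharedClient:
    """Same discovery and routing logic, whichever team owns a tool."""

    def __init__(self, servers: list[TeamServer]) -> None:
        self._routes: dict[str, TeamServer] = {}
        for server in servers:
            for tool in server.list_tools():
                if tool in self._routes:
                    raise ValueError(f"duplicate tool name: {tool}")
                self._routes[tool] = server

    def available_tools(self) -> list[str]:
        return sorted(self._routes)

    def call(self, tool: str, /, **arguments) -> str:
        server = self._routes.get(tool)
        if server is None:
            raise KeyError(f"unknown tool: {tool}")
        return server.call_tool(tool, arguments)


payments = TeamServer(
    "payments",
    {"refund_status": lambda order_id: f"refund for {order_id}: pending"},
)
platform = TeamServer(
    "platform",
    {"deploy_status": lambda service: f"{service}: healthy"},
)

client = SharedClient([payments, platform])
print(client.available_tools())
print(client.call("deploy_status", service="api"))
```

Each team changes only its own server. The client code is identical no matter how many servers are plugged in.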

Performance and scaling implications

It is important to clarify that MCP does not inherently make your system faster. Its value lies in how it changes the way you approach scaling and operational control. In traditional systems, scaling is often reactive, with solutions implemented only after issues arise. For example, when tool usage increases, downstream services may become overwhelmed, and rate limiting or concurrency controls are added in an ad hoc manner.

With MCP, tool execution passes through a centralized boundary, which provides a natural point for enforcing control mechanisms. This makes it easier to implement features such as rate limits, concurrency caps, timeouts, and usage analytics. These controls are essential for maintaining stability as your system grows and interacts with more services. Instead of reacting to problems, you can design for them proactively.
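
As an illustration of what such a control point might look like, the sketch below wraps every tool call in a rate limit, a concurrency cap, and a timeout. The `ToolBoundary` class and its parameters are assumptions for this example; MCP itself does not prescribe these mechanisms, it only gives you a single place to put them.

```python
from __future__ import annotations

import threading
import time
from collections import deque
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from typing import Any, Callable


class ToolBoundary:
    """Hypothetical choke point that every tool call passes through."""

    def __init__(self, max_calls_per_window: int, window_s: float,
                 max_concurrent: int, timeout_s: float) -> None:
        self._max_calls = max_calls_per_window
        self._window_s = window_s
        self._timeout_s = timeout_s
        self._recent: deque[float] = deque()  # timestamps of admitted calls
        self._lock = threading.Lock()
        self._slots = threading.BoundedSemaphore(max_concurrent)
        self._pool = ThreadPoolExecutor(max_workers=max_concurrent)

    def _admit(self) -> bool:
        """Sliding-window rate limit over the last `window_s` seconds."""
        now = time.monotonic()
        with self._lock:
            while self._recent and now - self._recent[0] >= self._window_s:
                self._recent.popleft()
            if len(self._recent) >= self._max_calls:
                return False
            self._recent.append(now)
            return True

    def call(self, fn: Callable[..., Any], *args: Any) -> dict[str, Any]:
        if not self._admit():
            return {"ok": False, "error": "rate_limited"}
        if not self._slots.acquire(blocking=False):
            return {"ok": False, "error": "too_many_concurrent_calls"}
        try:
            future = self._pool.submit(fn, *args)
            # Caveat: on timeout the worker thread keeps running, because
            # Python threads cannot be cancelled from outside.
            return {"ok": True, "result": future.result(timeout=self._timeout_s)}
        except FutureTimeout:
            return {"ok": False, "error": "timeout"}
        finally:
            self._slots.release()


boundary = ToolBoundary(max_calls_per_window=2, window_s=60.0,
                        max_concurrent=2, timeout_s=0.2)
fast = boundary.call(lambda x: x + 1, 1)      # completes normally
slow = boundary.call(time.sleep, 0.6)         # exceeds the timeout
blocked = boundary.call(lambda: "never run")  # third call in the window
print(fast, slow, blocked)
```

The individual tools know nothing about these limits, so tightening or relaxing them is a change in one place.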

This centralized control surface also improves visibility into system behavior. By collecting consistent logs and metrics at the tool boundary, you gain insights into how your AI system is being used and where potential bottlenecks exist. This level of observability is difficult to achieve in loosely connected systems, where data is scattered across multiple services.
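
A minimal sketch of boundary-level logging, under the assumption that every call is routed through one wrapper: the `AuditedTools` class, the record fields, and the example tools below are hypothetical, chosen to show how "who triggered what, and did it succeed" can be answered from a single stream of records.

```python
import json
import time
from collections import Counter


class AuditedTools:
    """Hypothetical wrapper that records every tool call in one format."""

    def __init__(self) -> None:
        self.records = []
        self.calls_by_tool = Counter()

    def call(self, caller, tool_name, fn, /, **arguments):
        started = time.perf_counter()
        status = "ok"
        try:
            return fn(**arguments)
        except Exception:
            status = "error"
            raise
        finally:
            # Runs for successes and failures alike, so no call goes unlogged.
            record = {
                "caller": caller,
                "tool": tool_name,
                "status": status,
                "duration_ms": round((time.perf_counter() - started) * 1000, 3),
            }
            self.records.append(record)
            self.calls_by_tool[tool_name] += 1
            print(json.dumps(record))


audit = AuditedTools()
total = audit.call("alice", "add", lambda a, b: a + b, a=1, b=2)
try:
    audit.call("bob", "divide", lambda a, b: a / b, a=1, b=0)
except ZeroDivisionError:
    pass  # the failure is still recorded before the exception propagates
```

Since every record has the same fields, usage counts and failure rates per tool or per caller become simple aggregations rather than a cross-service log hunt.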

When MCP may not be necessary

Despite its advantages, MCP is not the right choice for every project. For small-scale applications with limited scope, the additional complexity introduced by MCP may not be justified. If your system consists of a single-purpose chatbot that interacts with one API and operates in a controlled environment, a simpler architecture may be sufficient.

MCP requires running an additional service and managing concerns such as authentication, configuration, and monitoring. For early-stage prototypes, this overhead can slow down development and make experimentation more cumbersome. In such cases, a lightweight approach may be more appropriate until the system’s requirements become clearer.

The key consideration is not the current state of your system but its likely trajectory. If you anticipate that your AI application will grow in complexity, interact with multiple systems, or involve multiple teams, introducing MCP earlier can prevent future challenges. The decision is less about immediate benefits and more about preparing for long-term scalability and maintainability.

A concise view of what changes

At a high level, MCP introduces a set of architectural shifts that reshape how AI systems are built and operated. It separates reasoning from execution, which reduces coupling and improves flexibility. It formalizes tool schemas, turning them into explicit contracts rather than implicit assumptions embedded in prompts. It centralizes authorization and logging, which enhances control and observability. Finally, it isolates environment-specific differences, making it easier to move between development, staging, and production.

These changes may not appear dramatic in isolation, but together they create a more robust and manageable system. They transform loosely connected components into a cohesive architecture that can scale with confidence. Importantly, these benefits are not cosmetic—they address fundamental challenges that arise as AI systems become more integrated with real-world infrastructure.

So what are the main benefits of using MCP in AI development?

From a practical standpoint, the primary benefit of MCP is structural integrity. It does not improve the intelligence of your models or eliminate the need for careful system design. Instead, it provides a framework for organizing complexity in a way that supports long-term growth and reliability. By introducing clear boundaries between reasoning and execution, MCP enables better maintainability, collaboration, and operational safety.

As your AI system evolves, these qualities become increasingly important. What starts as a simple integration can quickly grow into a critical component of your infrastructure, and without the right structure, it can become difficult to manage. MCP addresses this challenge by turning loosely connected services into governed systems with clear contracts and centralized control.

Ultimately, the value of MCP lies in its ability to impose discipline on AI system design. It ensures that as your system grows, it remains understandable, extensible, and reliable. In that sense, MCP is less about convenience and more about building systems that can endure beyond the initial prototype stage. And in real-world engineering, that is often the difference between a temporary solution and a sustainable platform.

Happy learning!