Reasoning models vs. LLMs in modern AI systems

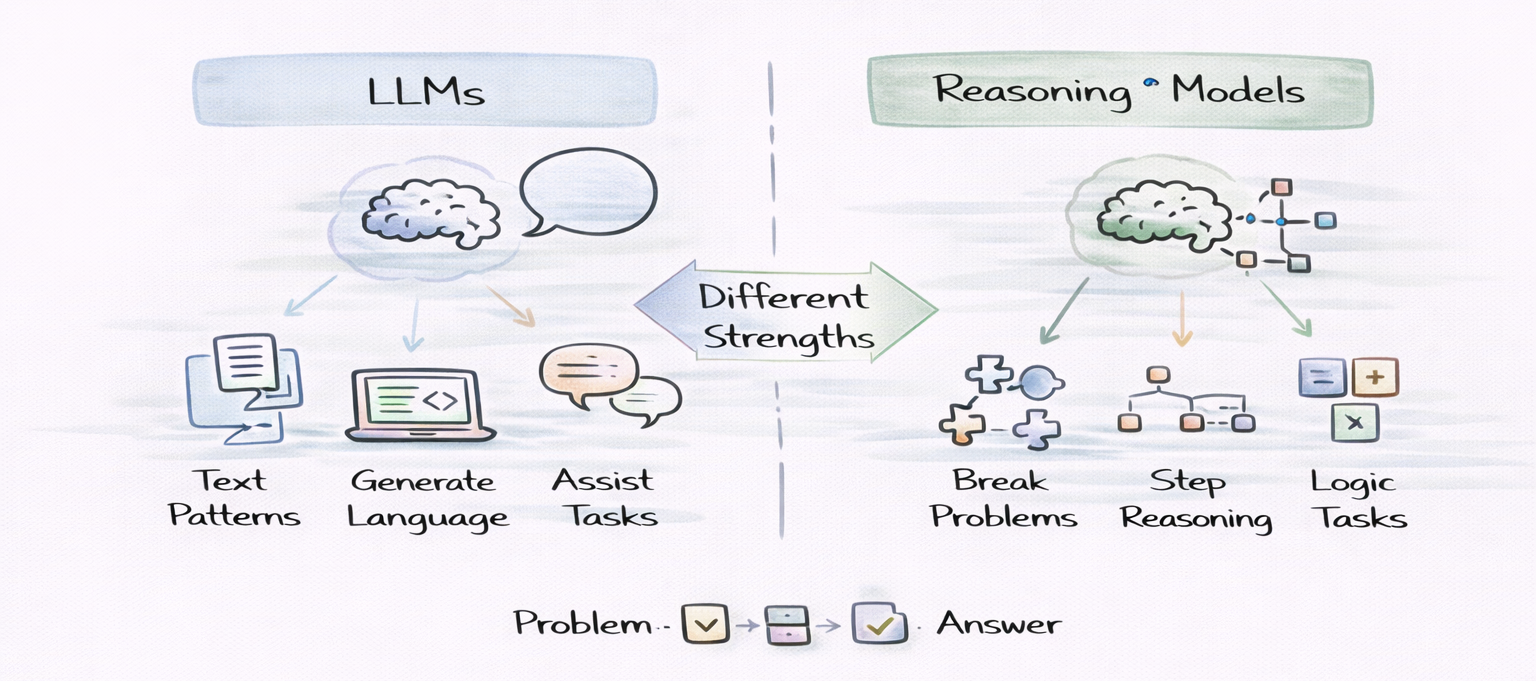

This blog explains how LLMs generate language through pattern prediction and how reasoning models add step-by-step problem solving to handle complex tasks more effectively.

Modern artificial intelligence systems have advanced rapidly over the past decade. Among the most influential developments in this period has been the rise of large language models, which now power chatbots, coding assistants, research tools, and productivity applications across many industries. These systems have demonstrated impressive capabilities in generating natural language, summarizing information, and assisting with complex workflows.

However, as researchers push the boundaries of artificial intelligence, new approaches are emerging that emphasize structured reasoning rather than purely predictive language generation. This shift has led developers and AI practitioners to treat the difference between reasoning models and LLMs as an important distinction when evaluating modern AI systems.

Understanding the difference between these approaches helps clarify how artificial intelligence handles complex tasks such as mathematical reasoning, planning, logical analysis, and multi-step decision making. While traditional language models rely heavily on pattern recognition learned from training data, reasoning-focused systems aim to break down problems into intermediate steps before arriving at a conclusion.

LLM fundamentals

LLMs are neural networks trained on massive datasets of text collected from books, websites, research papers, and code repositories. These models are typically built using transformer architectures, which allow them to analyze relationships between words across long sequences of text.

During training, the model repeatedly attempts to predict the next token in a sequence. A token may represent a word, a part of a word, or a punctuation symbol. By performing this prediction task across enormous amounts of data, the model gradually learns complex patterns in language.
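The prediction task can be illustrated at toy scale with a bigram counter, which estimates the next token from the single token before it. This is only a sketch of the idea: real LLMs use neural networks conditioned on long contexts, and the corpus and function names below are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def next_token_probs(counts, token):
    """Turn raw follow-up counts into a probability distribution."""
    followers = counts.get(token, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}
    return {tok: n / total for tok, n in followers.items()}


# Tiny illustrative corpus, already split into whitespace "tokens".
corpus = "the cat sat on the mat . the cat ate .".split()
model = train_bigram(corpus)
probs = next_token_probs(model, "the")
print(probs)  # "cat" follows "the" twice, "mat" once
```

Even in this tiny case, the "model" knows nothing about cats or mats. It only records which tokens tended to follow which, which is the same kind of statistical signal an LLM learns at far greater scale.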

Because of this training process, large language models can perform a wide variety of natural language tasks, including:

- Natural language generation
- Code completion and debugging assistance
- Question answering
- Document summarization

These capabilities arise because the model learns statistical relationships between words and concepts. When given a prompt, the system generates a response by predicting which tokens are most likely to follow based on patterns learned during training.
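As a rough illustration of generation by repeated prediction, the sketch below picks the most likely next token at every step (greedy decoding). The probability table is hand-written and hypothetical. It stands in for a trained model, which would compute these probabilities from the full preceding context.

```python
# Hypothetical next-token probabilities, standing in for a trained model.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.9, "a": 0.1},
    "the": {"model": 0.6, "answer": 0.4},
    "a": {"model": 1.0},
    "model": {"predicts": 0.7, "<end>": 0.3},
    "predicts": {"tokens": 0.8, "<end>": 0.2},
    "tokens": {"<end>": 1.0},
    "answer": {"<end>": 1.0},
}


def generate_greedy(max_tokens=10):
    """Repeatedly append the single most likely next token."""
    token = "<start>"
    output = []
    for _ in range(max_tokens):
        candidates = NEXT_TOKEN_PROBS[token]
        token = max(candidates, key=candidates.get)
        if token == "<end>":
            break
        output.append(token)
    return output


generated = generate_greedy()
print(" ".join(generated))
```

Production systems usually sample from the distribution rather than always taking the top token, but the loop structure (predict, append, repeat) is the same.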

While this approach enables impressive language generation, it does not necessarily guarantee strong reasoning abilities. Many language models generate responses based primarily on learned patterns rather than structured logical analysis.

Reasoning model explanation

Reasoning models represent an emerging category of AI systems designed to perform multi-step problem solving. Instead of generating an answer immediately, these models attempt to simulate structured thinking processes.

In reasoning-oriented systems, the model analyzes a problem by generating intermediate reasoning steps. These steps may include identifying relevant information, decomposing the task into smaller components, and evaluating possible solutions.

Several characteristics distinguish reasoning models from traditional language models.

First, reasoning models emphasize step-by-step reasoning generation. Instead of providing a direct response, they often produce intermediate explanations that demonstrate how the final answer was derived.

Second, reasoning models attempt to decompose complex problems into smaller logical components. By analyzing each step individually, the model can build toward a final conclusion more reliably.

Third, reasoning models may evaluate intermediate reasoning steps before producing a final answer. This iterative process allows the system to refine its solution as it progresses.

These capabilities allow reasoning-oriented systems to tackle tasks that require logical analysis, planning, and structured problem-solving.
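The third characteristic, checking intermediate results before committing to an answer, can be sketched as a propose-and-verify loop. This is a deliberately simple stand-in, not how any particular reasoning model works internally: the equation, the candidate list, and the function names are all assumptions chosen for illustration.

```python
def check(x):
    """Verification step: substitute the candidate back into 3x + 4 = 19."""
    return 3 * x + 4 == 19


def solve_with_verification(proposals):
    """Try candidate answers in order; return the first one that passes the check."""
    trace = []
    for candidate in proposals:
        passed = check(candidate)
        trace.append((candidate, passed))
        if passed:
            return candidate, trace
    return None, trace


# The proposals stand in for answers a model might suggest.
answer, trace = solve_with_verification([3, 4, 5, 6])
print(answer)
print(trace)
```

The useful property is that a wrong proposal is caught by the check rather than returned, and the trace records which candidates were rejected along the way.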

Comparing reasoning models and LLMs

The distinction between reasoning models and traditional language models becomes clearer when comparing their capabilities and behaviors.

| Feature | Large Language Model | Reasoning Model |
| --- | --- | --- |
| Core capability | Language generation | Multi-step reasoning |
| Problem solving | Pattern recognition | Structured logical analysis |
| Output style | Direct answers | Step-by-step explanations |
| Accuracy on complex tasks | Moderate | Often improved through reasoning |

The discussion around reasoning models and LLMs often centers on how future AI systems should evolve. Some researchers believe that improving reasoning abilities is essential for solving complex problems that require structured analysis rather than simple pattern prediction.

While traditional LLMs can generate impressive responses, reasoning-focused systems aim to improve reliability when solving tasks that involve multiple steps.

Example reasoning workflow

A simple scenario illustrates how these two types of systems may approach a problem differently.

Direct language model approach

A traditional language model may attempt to answer a question immediately by relying on patterns learned during training. For example, when asked a mathematical question, the model may generate a response based on previously seen examples without explicitly computing intermediate steps.

Reasoning-based approach

A reasoning-oriented model may instead generate a sequence of intermediate steps before producing the final answer.

For example, when solving a mathematical problem, the model might:

1. Identify the relevant variables in the problem
2. Determine the formula needed to solve the task
3. Compute intermediate values step by step
4. Verify the logic before producing the final result
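The four steps above can be made concrete with a small worked problem written out in code. The problem itself is an assumption chosen for illustration: a train covers 150 km in 2.5 hours, and we want the time needed for 240 km at the same speed.

```python
import math

# Step 1: identify the relevant variables.
known_distance_km = 150.0
known_time_h = 2.5
target_distance_km = 240.0

# Step 2: determine the formulas: speed = distance / time, time = distance / speed.
# Step 3: compute intermediate values one at a time.
speed_kmh = known_distance_km / known_time_h
travel_time_h = target_distance_km / speed_kmh

# Step 4: verify by checking that speed * time reproduces the target distance.
assert math.isclose(speed_kmh * travel_time_h, target_distance_km)

print(f"speed = {speed_kmh} km/h")
print(f"time  = {travel_time_h} h")
```

Writing the intermediate value (`speed_kmh`) down explicitly is what makes the final check possible. A direct answer with no visible steps offers nothing to verify against.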

This structured reasoning process improves transparency and often leads to more reliable answers.

Why reasoning capabilities are becoming important

Many real-world applications require artificial intelligence systems to perform tasks that involve complex decision-making. While language generation is useful, it is often insufficient for problems that require logical analysis or multi-step reasoning.

Mathematical problem solving is a clear example. Many mathematical questions require multiple calculations and intermediate steps before arriving at the correct answer. Scientific research also benefits from reasoning-based AI systems. Researchers may use AI tools to analyze experimental results, explore hypotheses, or interpret scientific literature.

Strategic planning systems represent another domain where reasoning capabilities are essential. AI systems used in logistics, operations, or financial modeling must analyze multiple constraints and evaluate potential outcomes. Software debugging and architecture analysis also require structured reasoning. Developers increasingly rely on AI tools to analyze code behavior and propose solutions to complex problems.

These challenges highlight why the distinction between reasoning models and LLMs is becoming increasingly relevant in AI research and development.

Real-world applications

Reasoning-oriented AI systems are already being explored in several practical domains. AI-assisted research tools use reasoning capabilities to analyze scientific papers, generate hypotheses, and help researchers navigate complex technical topics.

Advanced code generation systems rely on structured reasoning to analyze program behavior, identify bugs, and suggest improvements to existing codebases. Automated scientific hypothesis testing represents another emerging application. AI systems can analyze datasets, evaluate relationships between variables, and propose new experimental directions.

Decision support systems in engineering and finance also benefit from reasoning capabilities. These systems analyze large volumes of data and provide recommendations based on structured analysis. Together, these applications show why understanding the difference between reasoning models and LLMs is increasingly important for developers working with AI-powered tools.

Future directions for reasoning in AI

Artificial intelligence research continues to explore new prompting techniques, frameworks, and architectures designed to improve reasoning capabilities.

Chain-of-thought prompting encourages models to produce intermediate reasoning steps before generating a final answer. This technique often improves performance on tasks requiring logical analysis.
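A minimal sketch of what this looks like in practice is shown below. It only builds prompt strings and parses a response, so no model or API is called. The prompt wording, the `Final answer:` convention, and the sample response are assumptions made for illustration, not a standard format.

```python
def direct_prompt(question):
    """Ask for the answer only."""
    return f"Question: {question}\nAnswer:"


def chain_of_thought_prompt(question):
    """Ask the model to write out intermediate steps before the final answer."""
    return (
        f"Question: {question}\n"
        "Work through the problem step by step, showing each intermediate result.\n"
        "Then give the final answer on its own line, prefixed with 'Final answer:'."
    )


def extract_final_answer(response):
    """Pull the final answer out of a response that follows the format above."""
    for line in reversed(response.splitlines()):
        if line.startswith("Final answer:"):
            return line[len("Final answer:"):].strip()
    return None


question = "A shop sells pens at 3 for $2. How much do 12 pens cost?"
print(chain_of_thought_prompt(question))

# A hand-written response, standing in for real model output.
sample_response = (
    "12 pens is 4 groups of 3.\n"
    "4 groups at $2 each is $8.\n"
    "Final answer: $8"
)
print(extract_final_answer(sample_response))
```

Asking for a fixed final-answer line is a design choice that keeps the reasoning visible while still making the result easy to extract programmatically.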

Tree-of-thought reasoning frameworks extend this concept by exploring multiple possible reasoning paths before selecting the best solution.
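The idea of exploring several paths and keeping the most promising ones can be sketched with a toy search problem. Here each "thought" is an arithmetic operation, a path is a sequence of operations, and paths are scored by how close they get to a target number. In a real tree-of-thought system the steps and scores would typically come from a language model. Everything below (the operations, the scoring rule, the beam width) is an illustrative assumption.

```python
# Each candidate "thought" is one arithmetic operation applied to the current value.
OPERATIONS = {
    "+5": lambda v: v + 5,
    "*2": lambda v: v * 2,
    "-1": lambda v: v - 1,
}


def tree_search(start, target, beam_width=3, max_depth=5):
    """Expand several reasoning paths per level and keep only the closest ones."""
    frontier = [(start, [])]
    for _ in range(max_depth):
        candidates = []
        for value, path in frontier:
            for name, operation in OPERATIONS.items():
                new_value = operation(value)
                new_path = path + [name]
                if new_value == target:
                    return new_path
                candidates.append((new_value, new_path))
        # Score each partial path by its distance to the target and prune the rest.
        candidates.sort(key=lambda item: abs(target - item[0]))
        frontier = candidates[:beam_width]
    return None


def replay(start, path):
    """Apply a path of operations to the start value."""
    value = start
    for name in path:
        value = OPERATIONS[name](value)
    return value


path = tree_search(start=3, target=11)
print(path, "->", replay(3, path))
```

Unlike a single chain of steps, this search does not commit to the first option at each level, so a path that looks slightly worse early on can still win.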

Tool-augmented reasoning models integrate external tools such as calculators, databases, or code interpreters that assist the model during problem solving.
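A minimal sketch of the calculator case follows. It assumes the model signals a tool request with a line of the form `TOOL <name>: <input>`. That format is hypothetical and invented for this example, since real systems define their own tool-calling protocols. The calculator itself uses Python's `ast` module to evaluate basic arithmetic without calling `eval` on untrusted text.

```python
import ast
import operator

_BINARY_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression):
    """Evaluate +, -, *, / arithmetic on numeric literals only."""
    def evaluate(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY_OPS:
            return _BINARY_OPS[type(node.op)](evaluate(node.left), evaluate(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -evaluate(node.operand)
        raise ValueError("unsupported expression")

    return evaluate(ast.parse(expression, mode="eval").body)


TOOLS = {"calculator": calculator}


def run_tool_call(model_output):
    """Parse a hypothetical 'TOOL <name>: <input>' line and run the named tool."""
    prefix = "TOOL "
    if not model_output.startswith(prefix):
        return None  # plain text, no tool requested
    name, _, argument = model_output[len(prefix):].partition(":")
    tool = TOOLS.get(name.strip())
    if tool is None:
        raise KeyError(f"unknown tool: {name.strip()}")
    return tool(argument.strip())


result = run_tool_call("TOOL calculator: 17 * 23 + 5")
print(result)
```

Delegating the arithmetic means the exact result comes from deterministic code rather than from token prediction, which is the main appeal of tool use for calculation-heavy tasks.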

Hybrid systems are also emerging that combine traditional language model capabilities with specialized reasoning modules. These systems aim to provide both fluent language generation and reliable problem-solving.

These developments suggest that future AI systems may integrate both language modeling and reasoning capabilities within unified architectures.

Are reasoning models separate from LLMs?

In many cases, reasoning models are built on top of large language model architectures. The difference lies in how the model is trained or prompted. Reasoning models emphasize structured problem solving and intermediate reasoning steps, whereas traditional LLMs focus primarily on generating fluent language.

Do reasoning models replace language models?

Reasoning models are not intended to replace language models. Instead, they extend the capabilities of existing models by improving their ability to solve complex problems. Many modern systems combine language generation with reasoning techniques.

Why do reasoning prompts improve LLM responses?

Prompts that encourage reasoning guide the model toward generating intermediate steps. These steps help organize the model’s analysis and reduce the likelihood that it will skip important parts of the solution process.

Will future AI systems focus more on reasoning?

Many researchers believe that improving reasoning abilities is essential for building more reliable AI systems. Future architectures are likely to combine language modeling, reasoning mechanisms, and external tools to handle increasingly complex tasks.

Final words

Artificial intelligence systems have evolved dramatically in recent years, with large language models becoming a central technology in modern AI applications. However, many complex tasks require structured reasoning rather than simple pattern recognition.

Understanding how reasoning models differ from LLMs helps developers appreciate how AI systems are evolving from purely predictive language generation toward architectures capable of structured reasoning and complex problem solving. As research continues to advance, reasoning capabilities are likely to play a central role in the next generation of AI systems.

Happy learning!