How to prevent data leakage in machine learning

This blog shows how to prevent data leakage in machine learning by properly splitting data, avoiding leakage in features and preprocessing, and using clean evaluation workflows.

When developers and data scientists begin building machine learning models, they quickly discover that model performance depends heavily on the quality and integrity of the data used during training. As practitioners gain experience with predictive modeling workflows, many start looking for ways to prevent data leakage, because unexpected results during model evaluation often signal that something has gone wrong in the data preparation pipeline.

Machine learning models learn patterns from historical data and apply those patterns to make predictions about future observations. However, subtle mistakes in the way datasets are prepared, split, or processed can introduce hidden information into the training process. When this happens, models may appear highly accurate during evaluation but fail to perform well when deployed in real-world systems.

Data leakage is one of the most common causes of misleading machine learning results. Preventing it requires careful dataset design, disciplined preprocessing procedures, and a structured machine learning workflow that maintains strict separation between training and evaluation data.

This blog explains the causes of leakage and provides practical guidance on how to prevent data leakage when building reliable machine learning models.

What is data leakage in machine learning?

Data leakage occurs when information that would not normally be available during prediction unintentionally influences the model during training. In other words, the model gains access to data that indirectly reveals the correct answer.

This situation usually arises during dataset preparation, feature engineering, or preprocessing. If information from the test dataset or future observations becomes part of the training data, the model may learn patterns that would not exist in real-world scenarios.

The result is artificially high performance during evaluation. The model appears to perform extremely well because it has effectively seen information related to the outcome in advance. However, once deployed in production environments where that information is not available, the model’s accuracy drops significantly. Understanding this concept is essential before exploring how to prevent data leakage, because recognizing the problem is the first step toward building trustworthy machine learning systems.

Overview of data leakage prevention

| Concept | Description |
| --- | --- |
| Data leakage | When training data includes information that would not be available at prediction time |
| Impact | Artificially high model performance during evaluation |
| Detection | Monitoring unexpected performance improvements |
| Prevention | Proper data splitting and preprocessing workflows |

Each of these concepts highlights an important part of the machine learning development process. Data leakage refers to the unintended exposure of information during training that should only appear during evaluation or after the prediction event. This exposure undermines the validity of the training process.

The impact of leakage is often seen in unusually high model accuracy during testing. These results may look promising at first, but they do not reflect real-world performance. Detection typically occurs when practitioners notice a gap between offline evaluation metrics and production performance, or when evaluation metrics look suspiciously high for the problem at hand. Such results may indicate hidden data contamination.

Prevention requires disciplined workflows that separate training and evaluation data and apply preprocessing steps correctly.

Common causes of data leakage

Several common mistakes can introduce leakage into machine learning pipelines.

Improper train-test splits: Leakage often occurs when datasets are split incorrectly or when data used during testing accidentally appears in the training dataset. If the model encounters examples during training that later appear in evaluation data, it may memorize those examples rather than learning general patterns.
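
A common way this happens is that the same entity (for example, a customer exported twice) appears in several rows, and a row-by-row random split puts copies on both sides. The sketch below is one possible guard, assuming each row is a dict carrying an entity identifier; the `customer_id` field and the helper names are hypothetical. It splits by a hash of the key instead of by row and then checks that no key appears in both splits. scikit-learn's `GroupShuffleSplit` and `GroupKFold` apply the same group-aware idea.

```python
import hashlib


def assign_split(key: str, test_fraction: float = 0.2) -> str:
    """Deterministically map a record key to "train" or "test".

    Every row that shares a key (for example, duplicate exports of the same
    customer) hashes to the same bucket, so copies cannot straddle the split.
    """
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0xFFFFFFFF  # roughly uniform value in [0, 1]
    return "test" if bucket < test_fraction else "train"


def split_by_key(rows, key_field, test_fraction=0.2):
    """Split a list of dict rows by entity key instead of by individual row."""
    train, test = [], []
    for row in rows:
        side = assign_split(str(row[key_field]), test_fraction)
        (test if side == "test" else train).append(row)
    return train, test


def overlapping_keys(train, test, key_field):
    """Keys present in both splits; a non-empty result signals leakage."""
    return {r[key_field] for r in train} & {r[key_field] for r in test}


# Hypothetical data: 50 customers, each appearing 4 times.
rows = [{"customer_id": f"c{i % 50}", "value": i} for i in range(200)]
train, test = split_by_key(rows, "customer_id")
print(overlapping_keys(train, test, "customer_id"))  # set()
```

Running the same `overlapping_keys` check after a plain row-level shuffle is a cheap way to find out whether an existing split already mixes entities across train and test.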

Target leakage: Target leakage happens when input features contain information that directly reveals the target variable. For example, a model predicting whether a customer will cancel a subscription might include variables that only become available after cancellation occurs. The model then learns to rely on those variables rather than learning genuine predictive relationships.

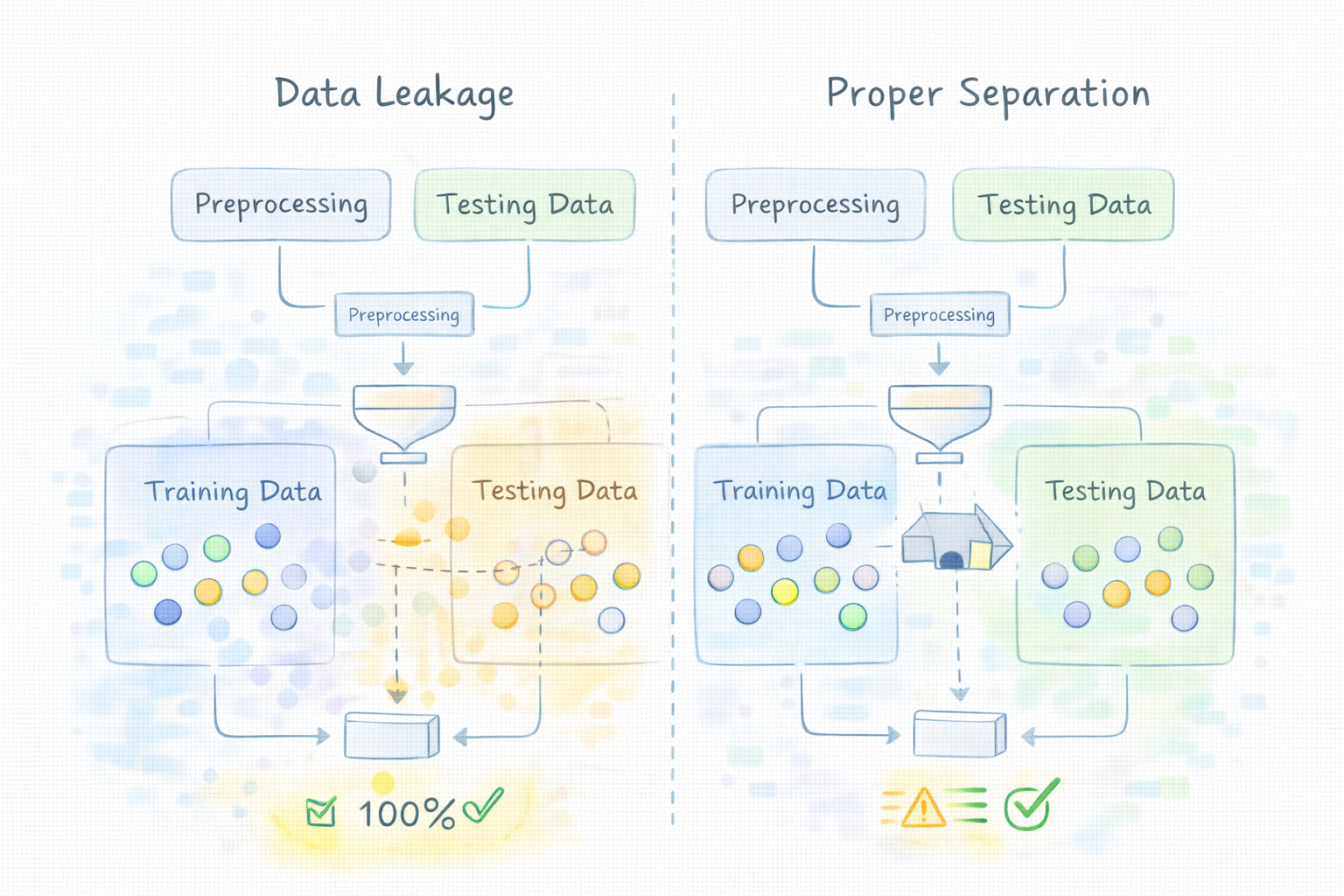

Data preprocessing mistakes: Many preprocessing operations, such as normalization, scaling, or feature selection, rely on statistics computed from the dataset. If these operations are performed before splitting the data into training and testing sets, information from the test set indirectly influences the training process.
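
To make the preprocessing problem concrete, here is a small illustration using min-max normalization on made-up numbers. When the (min, max) parameters are computed on the combined data, the test set's extremes decide the scale that the training data is mapped onto; when they are computed on the training split alone, the test set has no say. The helper names are illustrative, not from any particular library.

```python
def min_max_params(values):
    """Learn (min, max) from a list of numbers."""
    return min(values), max(values)


def min_max_scale(values, params):
    """Rescale values with previously learned (min, max) parameters."""
    lo, hi = params
    span = (hi - lo) or 1.0  # avoid division by zero for constant columns
    return [(v - lo) / span for v in values]


# Illustrative numbers; the test split contains values outside the training range.
train = [10.0, 12.0, 11.0, 13.0]
test = [30.0, 9.0]

# Leaky: parameters computed on the combined data before splitting.
leaky_params = min_max_params(train + test)
# Clean: parameters computed from the training split alone.
clean_params = min_max_params(train)

print(leaky_params)  # (9.0, 30.0): the test extremes set the scale
print(clean_params)  # (10.0, 13.0)
print(min_max_scale(test, clean_params))  # falls outside [0, 1], which is honest
```

With the leaky parameters, the training values are squeezed into a narrow band and the test values land exactly on 0 and 1, so the representation the model trains on already encodes the test range. Scaled values outside [0, 1] under the clean parameters are expected and reflect what would happen on genuinely unseen data.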

Temporal leakage: In time-based datasets, leakage can occur when future data points are used to predict past events. For example, using future sales figures to train a model predicting earlier demand would introduce information that would not be available during real-world predictions.
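
For time-based data, a random shuffle is usually the wrong split. A minimal alternative, assuming each record carries a date (the sales records below are hypothetical), is to train on everything before a cutoff and evaluate on everything at or after it. scikit-learn's `TimeSeriesSplit` offers a rolling version of the same idea for cross-validation.

```python
from datetime import date


def temporal_split(rows, time_field, cutoff):
    """Train on rows strictly before `cutoff`; evaluate on rows at or after it."""
    train = [r for r in rows if r[time_field] < cutoff]
    test = [r for r in rows if r[time_field] >= cutoff]
    return train, test


# Hypothetical daily sales records.
sales = [
    {"day": date(2024, 1, 5), "units": 120},
    {"day": date(2024, 2, 9), "units": 135},
    {"day": date(2024, 3, 14), "units": 150},
    {"day": date(2024, 4, 2), "units": 160},
]

train, test = temporal_split(sales, "day", cutoff=date(2024, 3, 1))

# Every training record predates every test record, so no future data leaks backward.
assert max(r["day"] for r in train) < min(r["day"] for r in test)
```

A split like this only covers the rows themselves; any features attached to a row also need to be computed from data that existed before that row's timestamp.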

Understanding these sources of leakage helps practitioners design workflows that prevent it in practice.

Best practices for preventing data leakage

Preventing leakage requires careful planning at every stage of the machine learning pipeline.

Perform train-test splits before preprocessing

One of the most important rules in machine learning workflows is splitting datasets into training and testing subsets before performing preprocessing operations. By separating the data early, developers ensure that transformations such as scaling or feature selection are calculated only using the training data.
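
The split-then-fit order can be sketched in plain Python as follows. `ZScoreScaler` is a hand-written stand-in that follows the fit/transform convention used by scikit-learn's preprocessing classes; it is not the library itself. The point is that `fit` only ever sees the training split, and `transform` reuses the learned mean and standard deviation for both splits.

```python
import random
import statistics


class ZScoreScaler:
    """Minimal z-score scaler: learns mean/std in fit(), reuses them in transform()."""

    def fit(self, values):
        self.mean_ = statistics.fmean(values)
        self.std_ = statistics.pstdev(values) or 1.0  # guard against constant columns
        return self

    def transform(self, values):
        return [(v - self.mean_) / self.std_ for v in values]


data = [float(x) for x in range(1, 21)]
random.Random(42).shuffle(data)

# 1. Split first (80/20 here).
cut = int(len(data) * 0.8)
train, test = data[:cut], data[cut:]

# 2. Fit the scaler on the training split only.
scaler = ZScoreScaler().fit(train)

# 3. Apply the same fitted parameters to both splits.
train_scaled = scaler.transform(train)
test_scaled = scaler.transform(test)
```

The scaled test values will not have a mean of exactly zero, and that is expected: the test data is being treated as unseen data, just as it would be in production.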

Separate feature engineering from the target variable

Feature engineering should focus only on information that would be available before the prediction event occurs. Developers must review input variables carefully to ensure that none of them indirectly reveal the target outcome.
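
One way to make that review systematic is to record, for each feature, when its value becomes known relative to the prediction time, and filter on that before training. The sketch below assumes such metadata exists; the `FeatureSpec` catalog, its `available_offset_hours` field, and the feature names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureSpec:
    """Hypothetical catalog entry describing when a feature's value becomes known."""
    name: str
    # Hours relative to the prediction time: <= 0 means known at or before
    # prediction time; > 0 means the value only exists afterward.
    available_offset_hours: float


def usable_features(specs):
    """Feature names whose values exist at prediction time."""
    return [s.name for s in specs if s.available_offset_hours <= 0]


def leaky_features(specs):
    """Feature names that would reveal information from after the prediction."""
    return [s.name for s in specs if s.available_offset_hours > 0]


catalog = [
    FeatureSpec("logins_last_30d", -1.0),
    FeatureSpec("account_age_days", -24.0),
    FeatureSpec("final_billing_adjustment", 72.0),  # recorded after cancellation
    FeatureSpec("account_closed_flag", 1.0),        # set when the account closes
]

print(usable_features(catalog))  # ['logins_last_30d', 'account_age_days']
print(leaky_features(catalog))   # ['final_billing_adjustment', 'account_closed_flag']
```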

Use cross-validation correctly

Cross-validation is commonly used to evaluate machine learning models more reliably. However, it must be implemented carefully so that each validation fold remains independent from the training folds. Improper cross-validation can still introduce leakage if preprocessing steps such as scaling or feature selection are fitted on the full dataset before the folds are created, instead of being refitted on the training folds within each iteration.
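
A minimal sketch of the correct pattern, in plain Python: the scaler's mean and standard deviation are recomputed from the training folds inside every iteration, so the held-out fold never contributes to them. The folds here are contiguous, which assumes the rows were shuffled beforehand (time-ordered data would need a time-aware split instead). In scikit-learn, passing a `Pipeline` to `cross_val_score` achieves the same per-fold refitting.

```python
import statistics


def k_fold_indices(n, k):
    """Yield (train_idx, val_idx) pairs for k contiguous, non-overlapping folds."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n))
        yield train_idx, val_idx
        start += size


def cross_validate_scaling(values, k=5):
    """Refit the scaler on the training folds inside every iteration."""
    results = []
    for train_idx, val_idx in k_fold_indices(len(values), k):
        train = [values[i] for i in train_idx]
        val = [values[i] for i in val_idx]
        mean = statistics.fmean(train)            # learned from training folds only
        std = statistics.pstdev(train) or 1.0     # guard against constant columns
        val_scaled = [(v - mean) / std for v in val]
        # A real workflow would fit a model on the scaled training folds here
        # and score it on val_scaled.
        results.append((mean, val_scaled))
    return results


values = [float(x) for x in range(10)]
for fold, (mean, _) in enumerate(cross_validate_scaling(values, k=5)):
    print(fold, mean)  # fold means: 5.5, 5.0, 4.5, 4.0, 3.5
```

Each fold's mean differs from the global mean of 4.5 precisely because the validation rows are excluded; fitting once on all ten values would hand every validation fold information about itself.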

Maintain strict data pipelines

Automated data pipelines can help enforce consistent data preparation practices. By clearly defining each step in the data processing workflow, teams can reduce the likelihood of accidental data contamination.
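
As an illustration of the idea, the sketch below chains a mean imputer and a z-score scaler into a single object that is fitted once on training data and then applied unchanged to anything else. All class names are hypothetical and hand-written; scikit-learn's `Pipeline` and `SimpleImputer` provide production-grade versions of the same pattern.

```python
import statistics


class MeanImputer:
    """Fills missing values (None) with the mean of the training values."""

    def fit(self, values):
        self.fill_ = statistics.fmean(v for v in values if v is not None)
        return self

    def transform(self, values):
        return [self.fill_ if v is None else v for v in values]


class ZScoreScaler:
    """Standardizes values with a mean and std learned in fit()."""

    def fit(self, values):
        self.mean_ = statistics.fmean(values)
        self.std_ = statistics.pstdev(values) or 1.0
        return self

    def transform(self, values):
        return [(v - self.mean_) / self.std_ for v in values]


class SimplePipeline:
    """Chains steps so every parameter is learned from training data in one call."""

    def __init__(self, steps):
        self.steps = steps
        self.fitted = False

    def fit(self, train_values):
        data = train_values
        for step in self.steps:
            # Each step is fitted on the previous step's output, training data only.
            data = step.fit(data).transform(data)
        self.fitted = True
        return self

    def transform(self, values):
        if not self.fitted:
            raise RuntimeError("fit the pipeline on training data before transforming")
        for step in self.steps:
            values = step.transform(values)
        return values


train = [1.0, None, 3.0, 5.0]
test = [None, 7.0]
pipe = SimplePipeline([MeanImputer(), ZScoreScaler()]).fit(train)
print(pipe.transform(test))  # the missing test value is filled with the training mean
```

Refusing to transform before fitting is a small design choice that turns one class of ordering mistakes into an immediate error instead of a silent leak.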

These practices form the foundation for preventing data leakage in modern machine learning systems.

Machine learning workflow checklist to prevent leakage

Developers can follow a structured workflow to reduce the risk of leakage during model development.

1. Split the dataset into training and testing sets first: The first step in any machine learning project should be separating the dataset into training and evaluation subsets. This ensures that testing data remains untouched during model training.

2. Fit preprocessing steps only on the training data: Transformations such as normalization or feature scaling compute statistics (for example, a mean and standard deviation) from the data they are fitted on. Fitting them on the training dataset alone prevents test data from influencing the model during training.

3. Reuse the fitted parameters instead of recalculating them: After fitting preprocessing transformations on the training data, keep those learned parameters fixed so they can later be applied to validation and test data without being recomputed.

4. Apply the same transformations to validation and test data: Using identical preprocessing steps ensures that the model receives consistent inputs while still maintaining proper separation between training and evaluation datasets.

5. Evaluate models using clean validation datasets: The final evaluation step should use data that has not been exposed to the training process, so that evaluation metrics give a realistic estimate of real-world model performance.
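
The checklist can be sketched end to end on a hypothetical one-dimensional dataset. Everything below is illustrative: the toy data generator, the three-way split fractions, and the deliberately simple threshold classifier (a one-feature nearest-centroid rule) are assumptions chosen to keep the example short and dependency-free, not a recommended model.

```python
import random
import statistics


def make_toy_data(n, seed=0):
    """Hypothetical 1-D dataset: class-1 values are shifted upward by 3."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        label = rng.randint(0, 1)
        rows.append((rng.gauss(10 + 3 * label, 1.0), label))
    return rows


def split_three_way(rows, seed=0, val_frac=0.2, test_frac=0.2):
    """Shuffle once, then carve off test, validation, and training subsets."""
    rows = rows[:]
    random.Random(seed).shuffle(rows)
    n_test = int(len(rows) * test_frac)
    n_val = int(len(rows) * val_frac)
    return rows[n_test + n_val:], rows[n_test:n_test + n_val], rows[:n_test]


def fit_scaler(xs):
    return statistics.fmean(xs), (statistics.pstdev(xs) or 1.0)


def apply_scaler(xs, params):
    mean, std = params
    return [(x - mean) / std for x in xs]


def fit_threshold(xs, ys):
    """1-D nearest-centroid rule: threshold halfway between the class means.

    Assumes class 1 has the larger mean, which holds for this toy data.
    """
    m0 = statistics.fmean(x for x, y in zip(xs, ys) if y == 0)
    m1 = statistics.fmean(x for x, y in zip(xs, ys) if y == 1)
    return (m0 + m1) / 2


def accuracy(xs, ys, threshold):
    preds = [1 if x > threshold else 0 for x in xs]
    return sum(p == y for p, y in zip(preds, ys)) / len(ys)


# Step 1: split before doing anything else.
train, val, test = split_three_way(make_toy_data(500))
x_tr, y_tr = [x for x, _ in train], [y for _, y in train]
x_va, y_va = [x for x, _ in val], [y for _, y in val]
x_te, y_te = [x for x, _ in test], [y for _, y in test]

# Steps 2-3: learn preprocessing parameters from the training split only.
params = fit_scaler(x_tr)

# Step 4: apply the same fitted parameters to every split.
x_tr_s, x_va_s, x_te_s = (apply_scaler(xs, params) for xs in (x_tr, x_va, x_te))

# Train the model on the scaled training data.
threshold = fit_threshold(x_tr_s, y_tr)

# Step 5: iterate against validation; report the untouched test set once at the end.
val_acc = accuracy(x_va_s, y_va, threshold)
test_acc = accuracy(x_te_s, y_te, threshold)
print(f"validation={val_acc:.2f} test={test_acc:.2f}")
```

Keeping the test split out of every decision until the final line is what keeps `test_acc` an honest estimate; if it were used to tune the threshold, it would effectively become a second validation set.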

Following this checklist helps teams prevent data leakage systematically across machine learning pipelines.

Real-world example of preventing data leakage

Consider a company building a machine learning model to predict customer churn. The goal of the model is to identify users who are likely to cancel their subscription in the near future. During dataset preparation, developers might include variables such as customer activity levels, account age, and billing history. However, if the dataset also includes features that are only available after cancellation occurs, such as final billing adjustments or account closure flags, the model may inadvertently learn from those variables.

A correct workflow prevents this issue by ensuring that features only contain information available before the prediction event. Additionally, the dataset is split into training and testing subsets before any preprocessing operations are applied. By maintaining this structured pipeline, the team avoids leakage and produces a model that performs reliably when deployed in production systems.
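
For the churn scenario, "information available before the prediction event" can be enforced when features are built. The sketch below assumes a hypothetical event log and computes features only from events strictly before a prediction cutoff, while the label looks at a window after it. The event types, the 30-day horizon, and the function names are illustrative assumptions.

```python
from datetime import date
from typing import NamedTuple


class Event(NamedTuple):
    customer_id: str
    day: date
    kind: str


# Hypothetical event log.
events = [
    Event("c1", date(2024, 1, 3), "login"),
    Event("c1", date(2024, 1, 20), "login"),
    Event("c1", date(2024, 2, 10), "billing_adjustment"),
    Event("c1", date(2024, 2, 11), "account_closed"),
    Event("c2", date(2024, 1, 15), "login"),
]


def point_in_time_features(events, customer_id, cutoff):
    """Aggregate only events strictly before the prediction cutoff."""
    history = [e for e in events if e.customer_id == customer_id and e.day < cutoff]
    last = max((e.day for e in history), default=None)
    return {
        "login_count": sum(1 for e in history if e.kind == "login"),
        "days_since_last_event": (cutoff - last).days if last is not None else None,
    }


def churn_label(events, customer_id, cutoff, horizon_days=30):
    """Label: the account closes within `horizon_days` after the cutoff."""
    return any(
        e.customer_id == customer_id
        and e.kind == "account_closed"
        and 0 <= (e.day - cutoff).days < horizon_days
        for e in events
    )


cutoff = date(2024, 2, 1)
print(point_in_time_features(events, "c1", cutoff))  # {'login_count': 2, 'days_since_last_event': 12}
print(churn_label(events, "c1", cutoff))  # True
```

The final billing adjustment and the closure event both fall after the cutoff, so they can shape the label but never the features.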

Why is data leakage harmful in machine learning?

Data leakage is harmful because it produces misleading evaluation results. When models learn from information that should not be available during prediction, they appear to perform better than they actually will in real-world environments. This discrepancy can lead to unreliable models being deployed in production systems.

Can data leakage occur in small datasets?

Yes, data leakage can occur in datasets of any size. In fact, smaller datasets can make leakage harder to detect: metrics computed on only a few evaluation examples vary widely from one split to another, so an inflated score caused by leakage is easy to mistake for random variation or a lucky split.

Does cross-validation automatically prevent leakage?

Cross-validation improves model evaluation but does not automatically prevent leakage. If preprocessing steps are applied before cross-validation splits are created, leakage can still occur. Proper workflow design remains essential.

What tools help detect leakage during model development?

Developers often use tools such as feature importance analysis, data validation pipelines, and monitoring of evaluation metrics. Unexpectedly high accuracy or unusually strong correlations between features and targets may indicate potential leakage.
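
A simple screening heuristic along these lines is to measure how well each feature predicts the target on its own. The sketch below scores every numeric feature with the best single-threshold rule and flags any that are almost perfectly predictive. The 0.98 cutoff and the toy data are arbitrary assumptions, and a flag is a prompt to investigate the feature's origin, not proof of leakage.

```python
def single_feature_accuracy(xs, ys):
    """Best accuracy of a one-threshold rule on one numeric feature (either direction)."""
    n = len(ys)
    best = 0.0
    for t in sorted(set(xs)):
        # Fraction correct when predicting 1 for x >= t; 1 - that is the reversed rule.
        above = sum((x >= t) == bool(y) for x, y in zip(xs, ys)) / n
        best = max(best, above, 1 - above)
    return best


def flag_suspicious(features, ys, threshold=0.98):
    """Names of features whose single-feature accuracy looks too good to be true."""
    return sorted(
        name for name, xs in features.items()
        if single_feature_accuracy(xs, ys) >= threshold
    )


# Hypothetical toy data: one ordinary feature and one that copies the target.
ys = [0, 0, 1, 1, 0, 1, 0, 1]
features = {
    "tenure_months": [12, 30, 5, 8, 24, 14, 3, 10],
    "closed_flag": [0, 0, 1, 1, 0, 1, 0, 1],
}

print(flag_suspicious(features, ys))  # ['closed_flag']
```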

Final words

Data leakage is one of the most common challenges faced by developers building machine learning systems. When information that should remain hidden during training accidentally enters the model development process, evaluation metrics become misleading and models fail to generalize effectively.

Understanding how to prevent data leakage helps developers design robust data pipelines, apply proper validation techniques, and carefully manage feature engineering workflows. By maintaining strict separation between training and evaluation data, machine learning practitioners can build reliable predictive models that perform consistently in real-world environments.